All documentation links

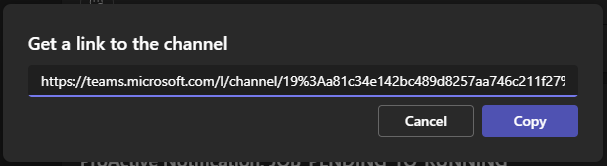

ProActive Workflows & Scheduling (PWS)

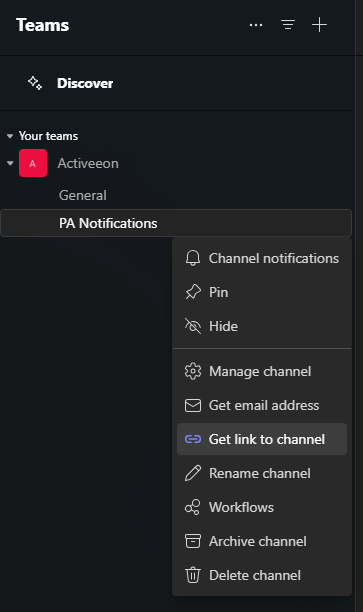

-

PWS User Guide

(Workflows, Workload automation, Jobs, Tasks, Catalog, Resource Management, Big Data/ETL, …) PWS Modules

Job Planner

(Time-based Scheduling)Event Orchestration

(Event-based Scheduling)Service Automation

(PaaS On-Demand, Service deployment and management)

PWS Admin Guide

(Installation, Infrastructure & Nodes setup, Agents,…)

ProActive AI Orchestration (PAIO)

PAIO User Guide

(a complete Data Science and Machine Learning platform, with Studio & MLOps)

1. Overview

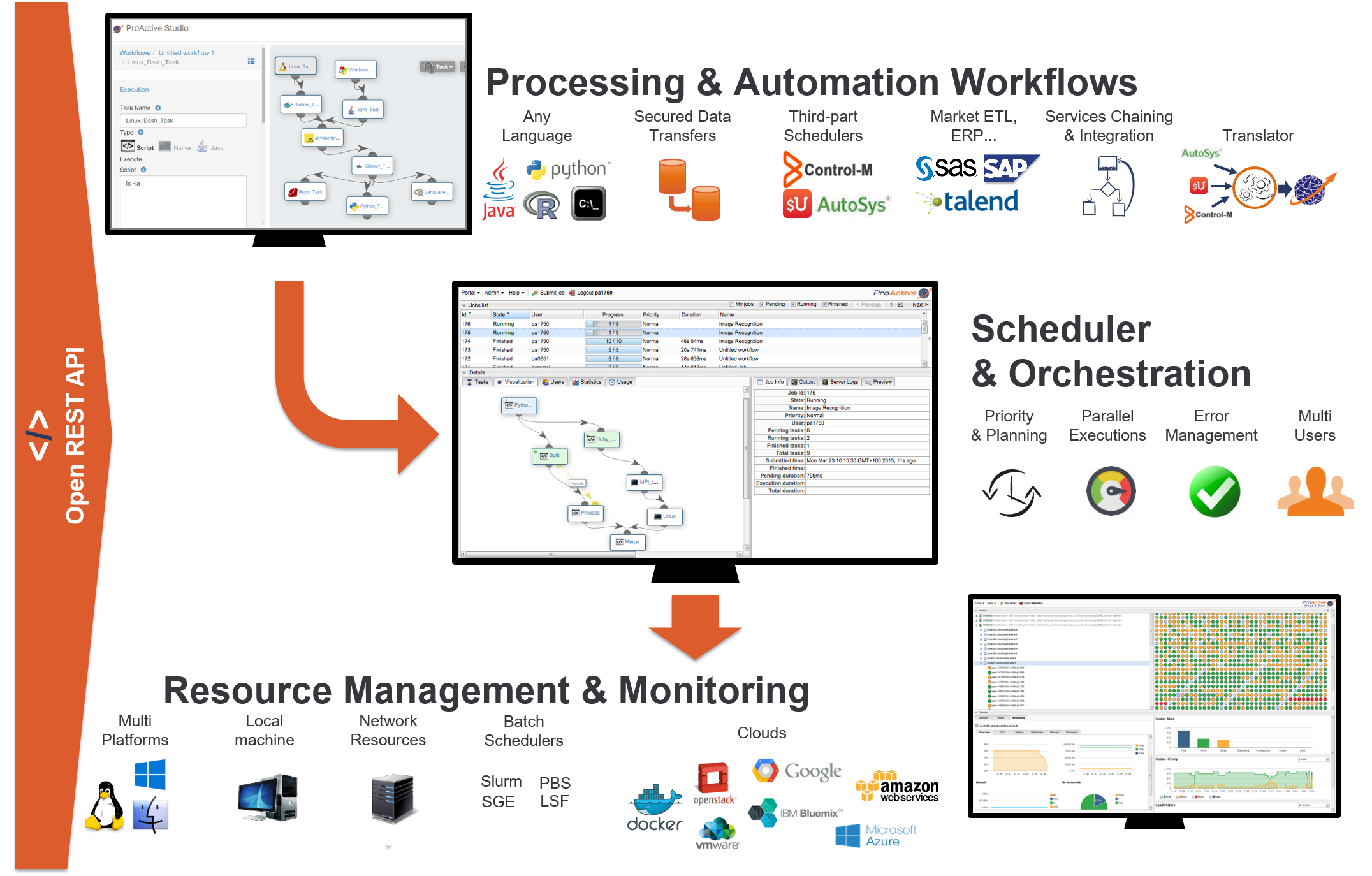

ProActive Scheduler is a comprehensive Open Source job scheduler and orchestrator, also featuring Workflows and Resource Management. The user specifies the computation as a series of computation steps along with their execution and data dependencies. The Scheduler executes this computation on a cluster of computation resources, running each step on the best-fit resource and in parallel wherever possible.

On the top left is the Studio interface, which allows you to build Workflows. It can be interactively configured to address specific domains, for instance Finance, Big Data, IoT or Artificial Intelligence (AI). See, for instance, the documentation of ProActive AI Orchestration, which can also be tried online. In the middle is the Scheduler, which enables an enterprise to orchestrate and automate multi-user, multi-application Jobs. Finally, at the bottom right is the Resource Manager interface, which manages and automates resource provisioning on any public Cloud, any virtualization software, any container system and any physical machine running any OS. All the components you see come with fully open and modern REST APIs.

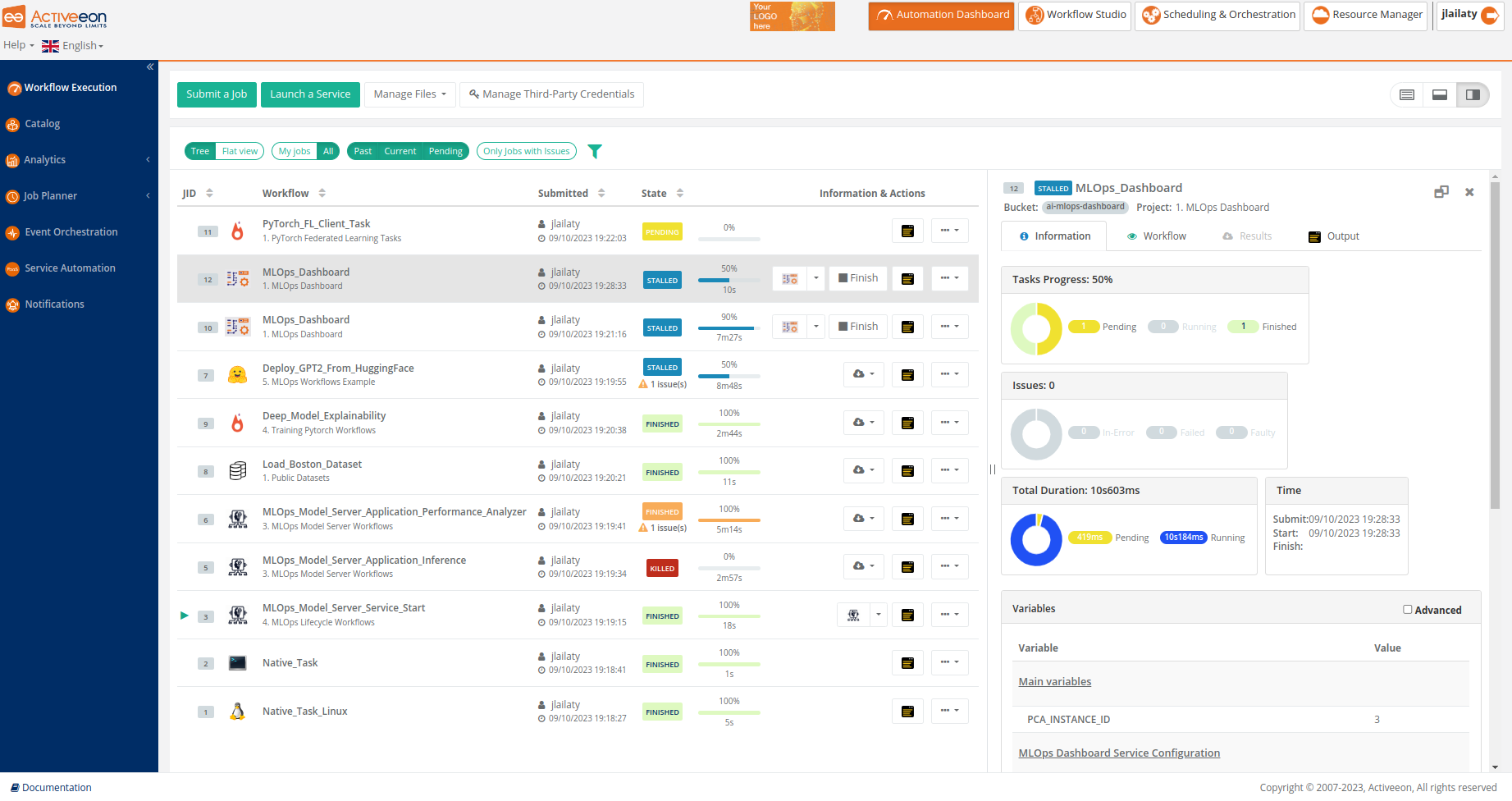

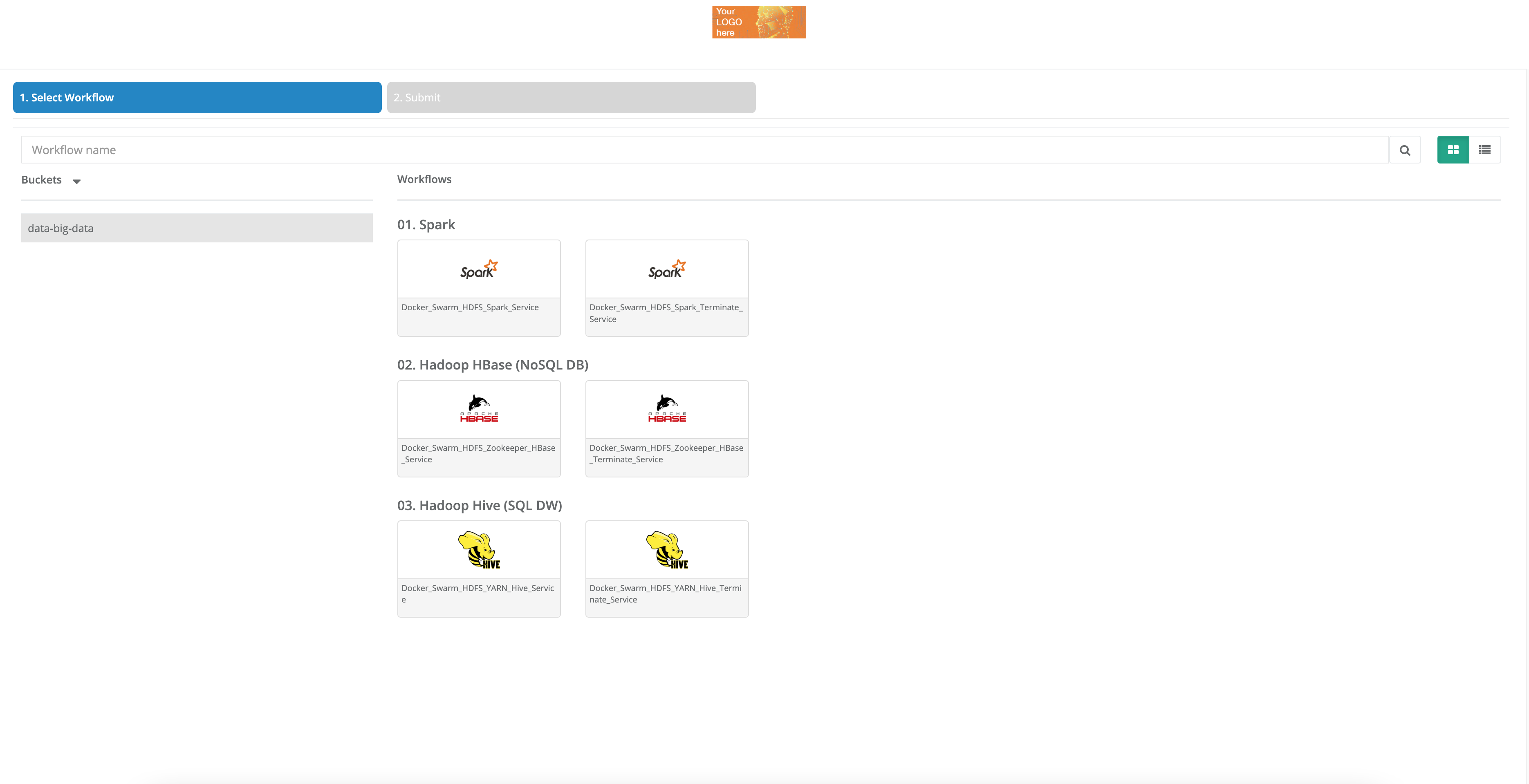

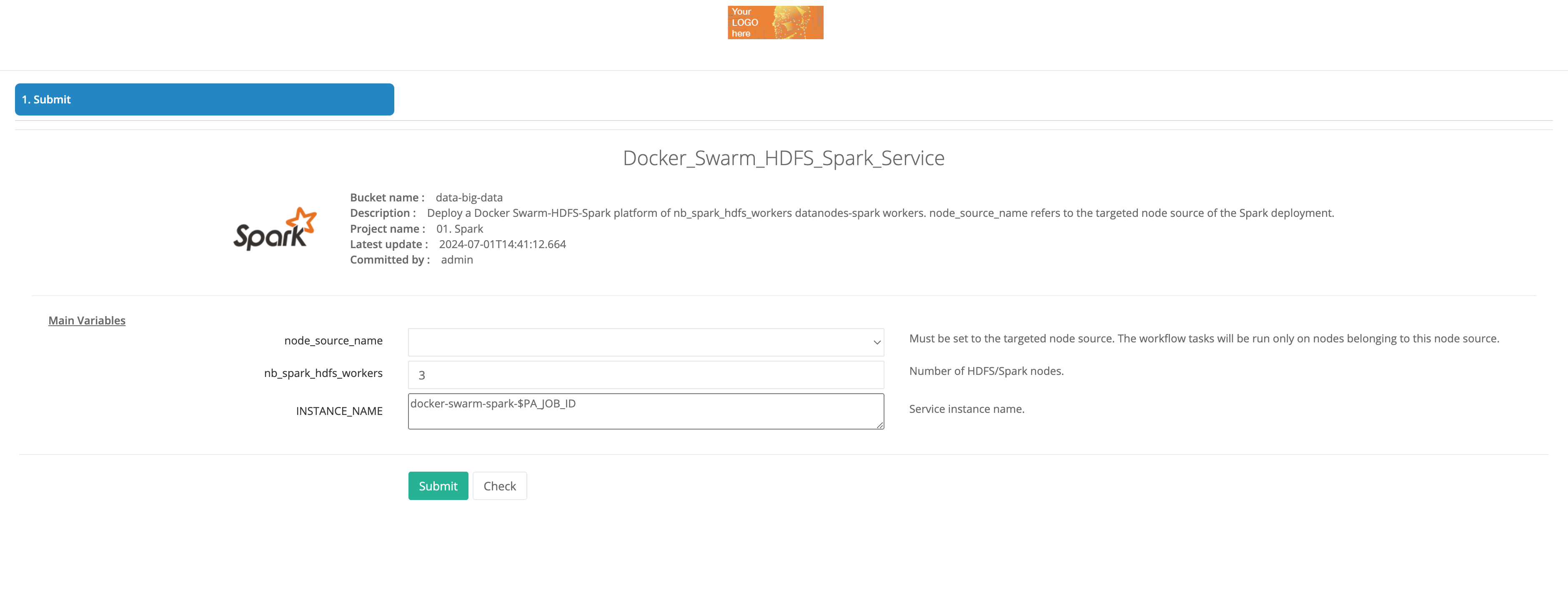

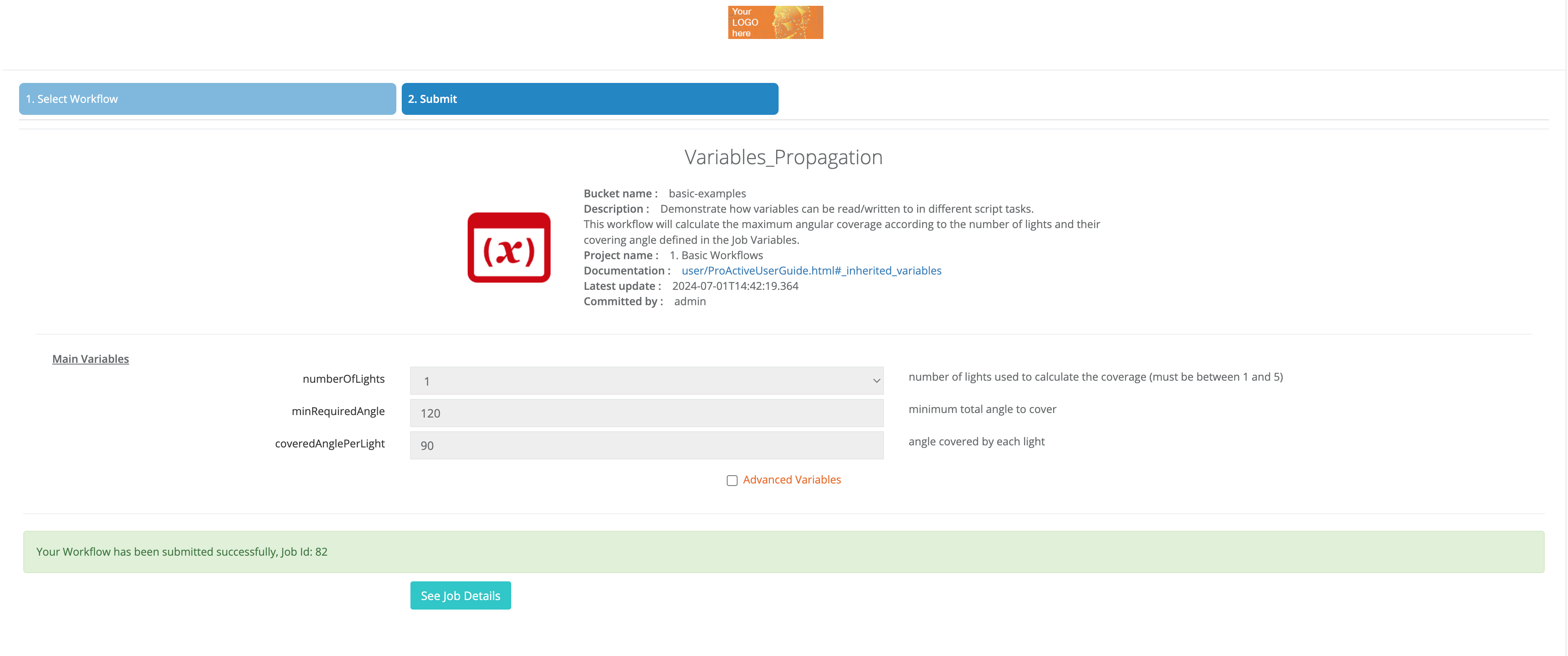

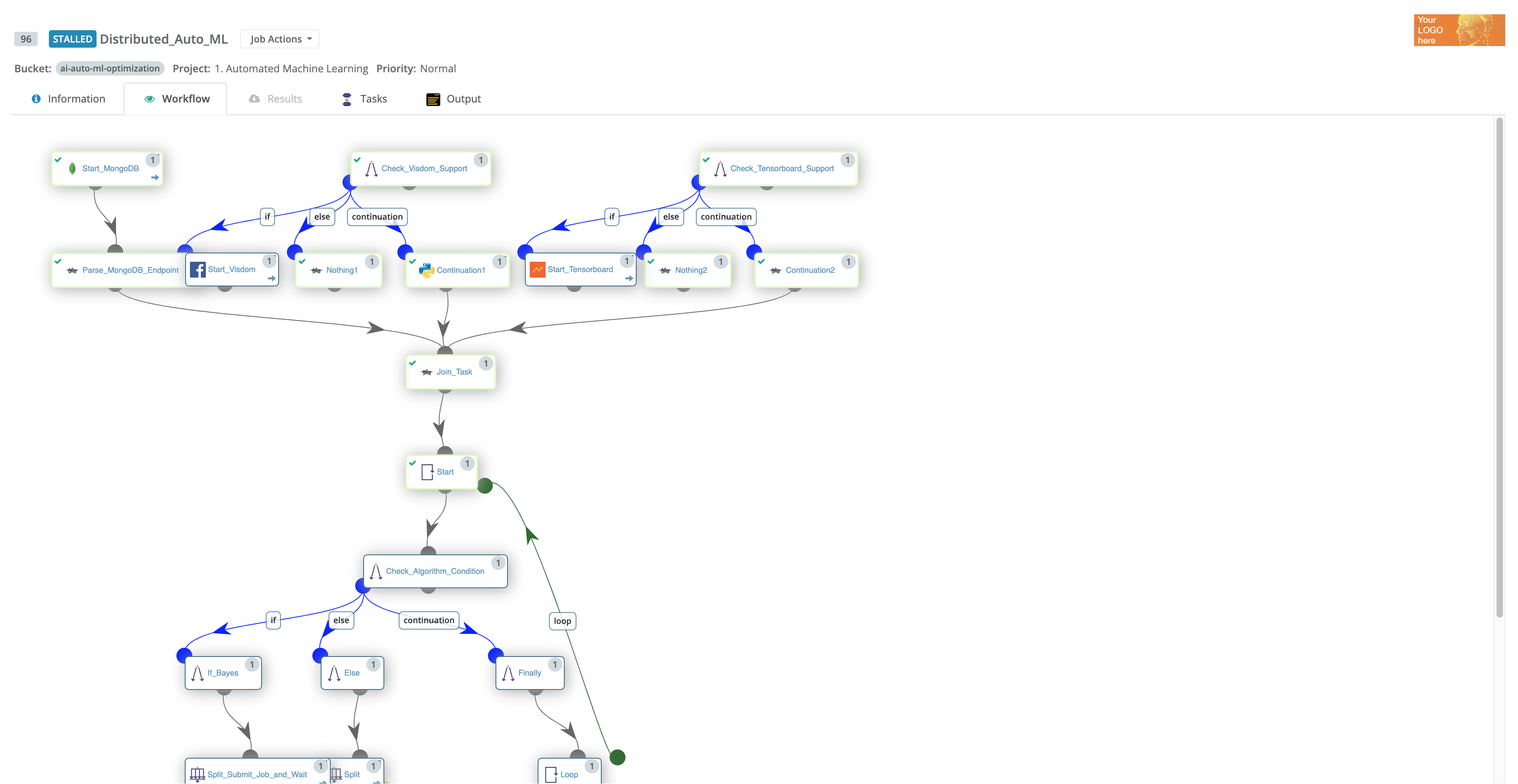

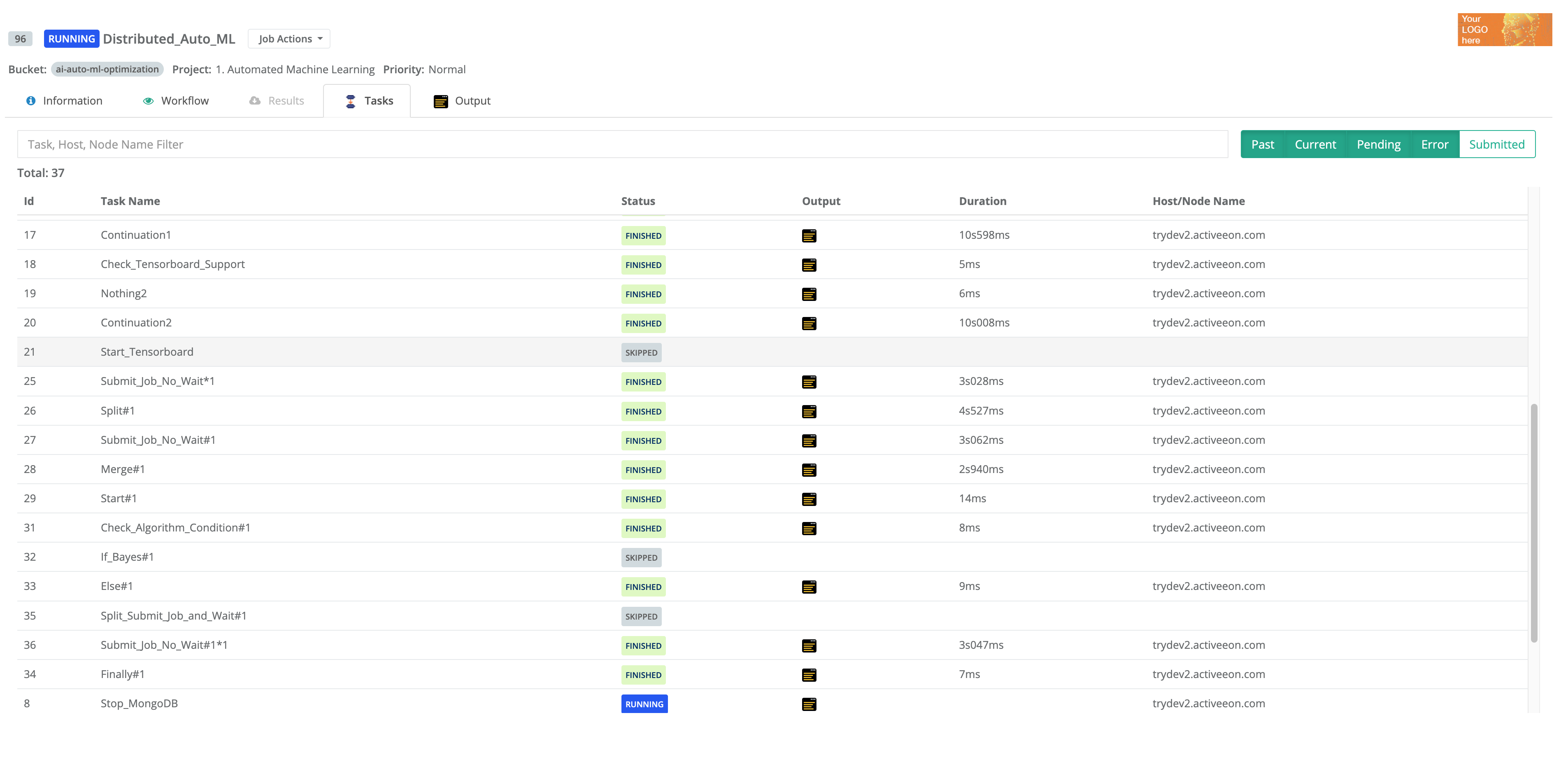

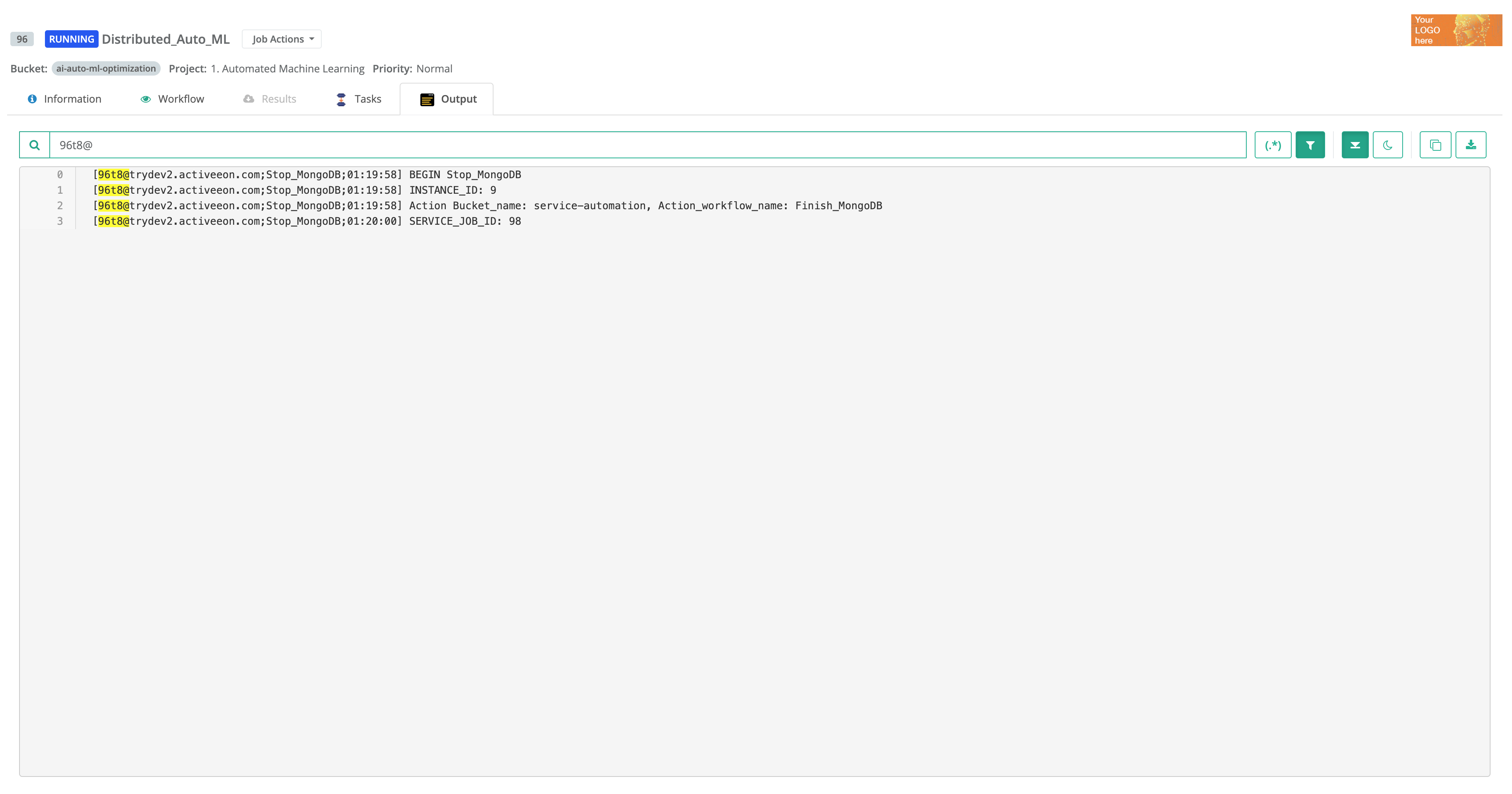

The screenshot above shows the Workflow Execution Portal, which is the main portal of ProActive Workflows & Scheduling and the entry point for end-users to submit workflows manually, monitor their executions and access job outputs, results, services endpoints, etc.

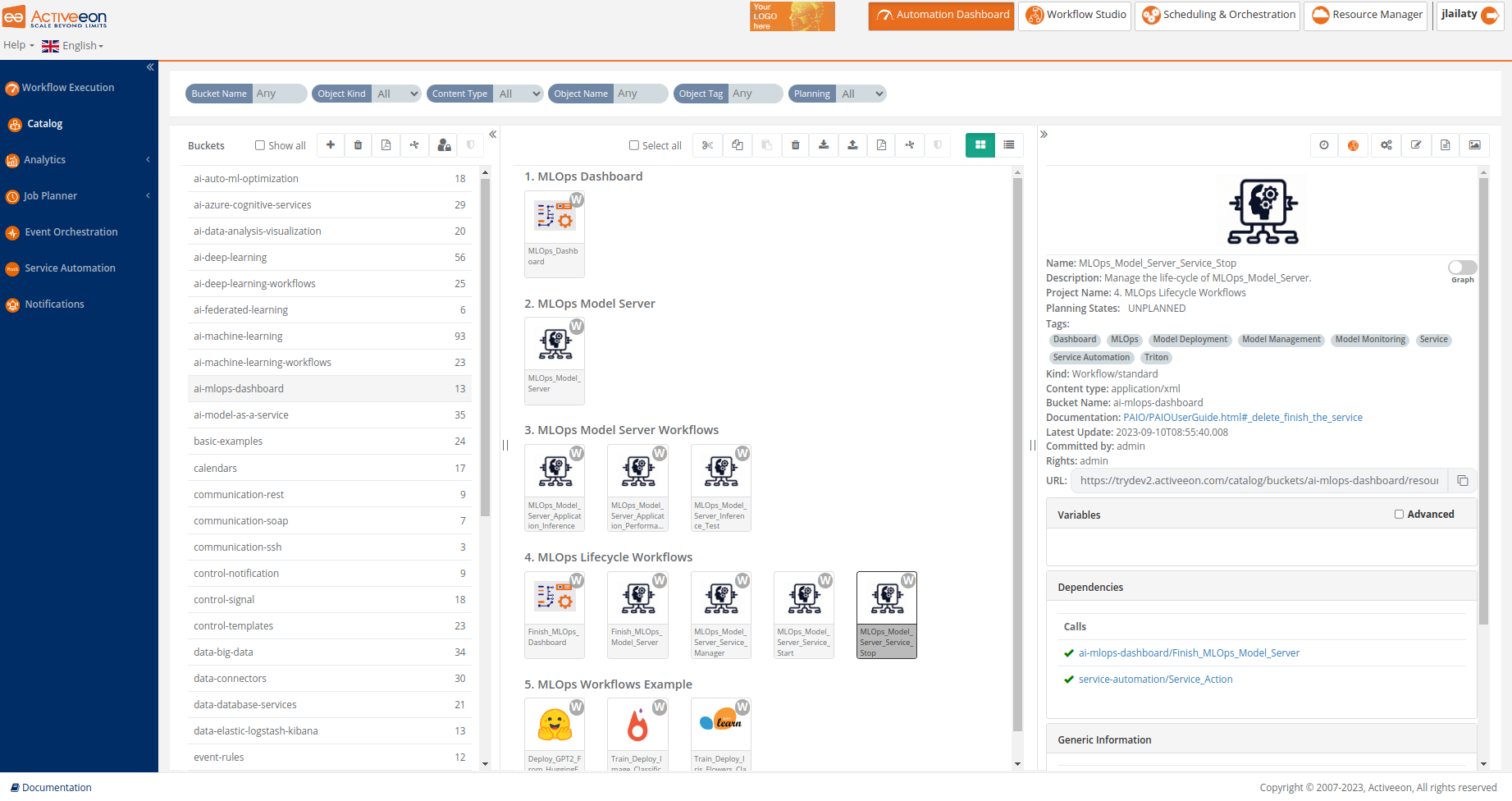

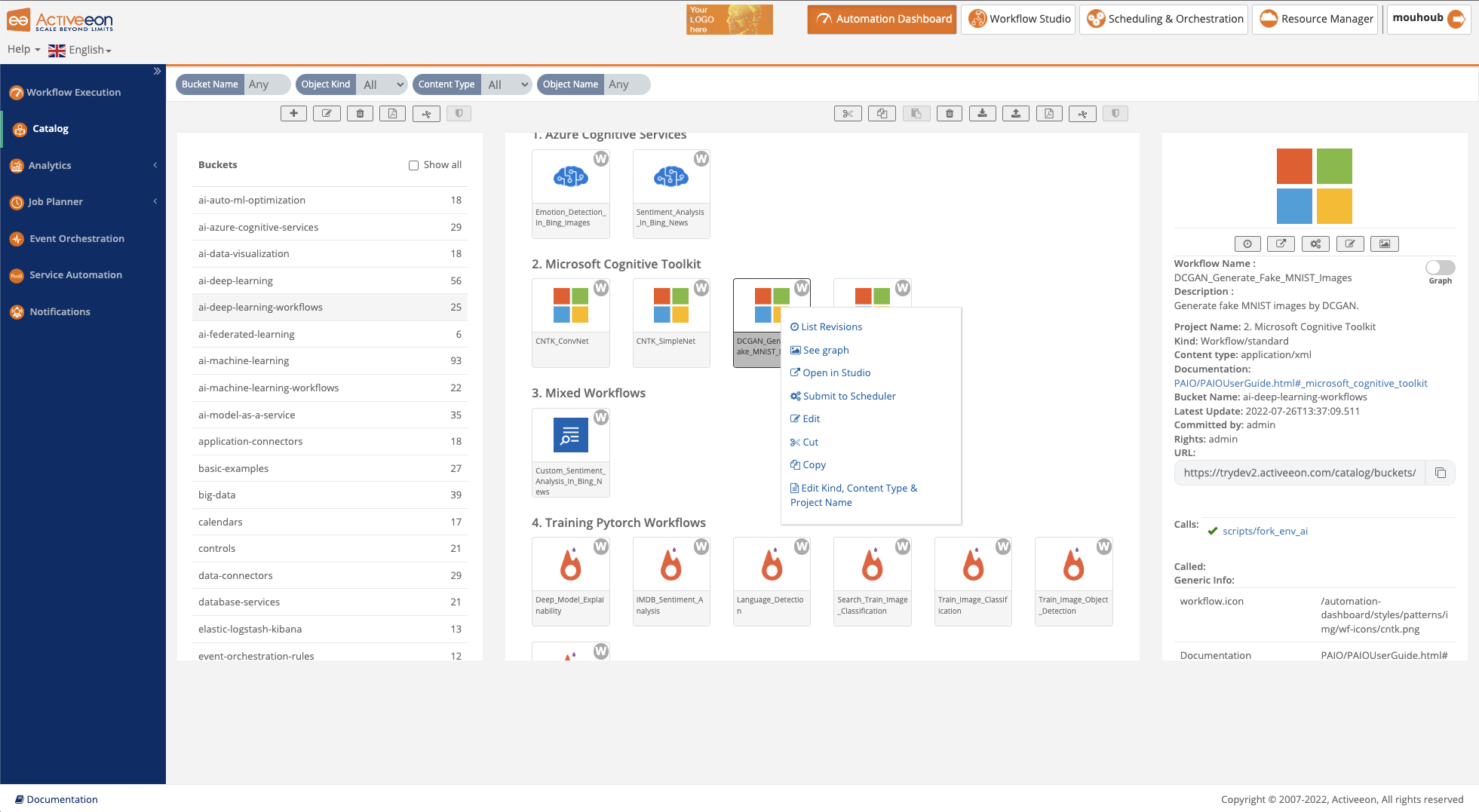

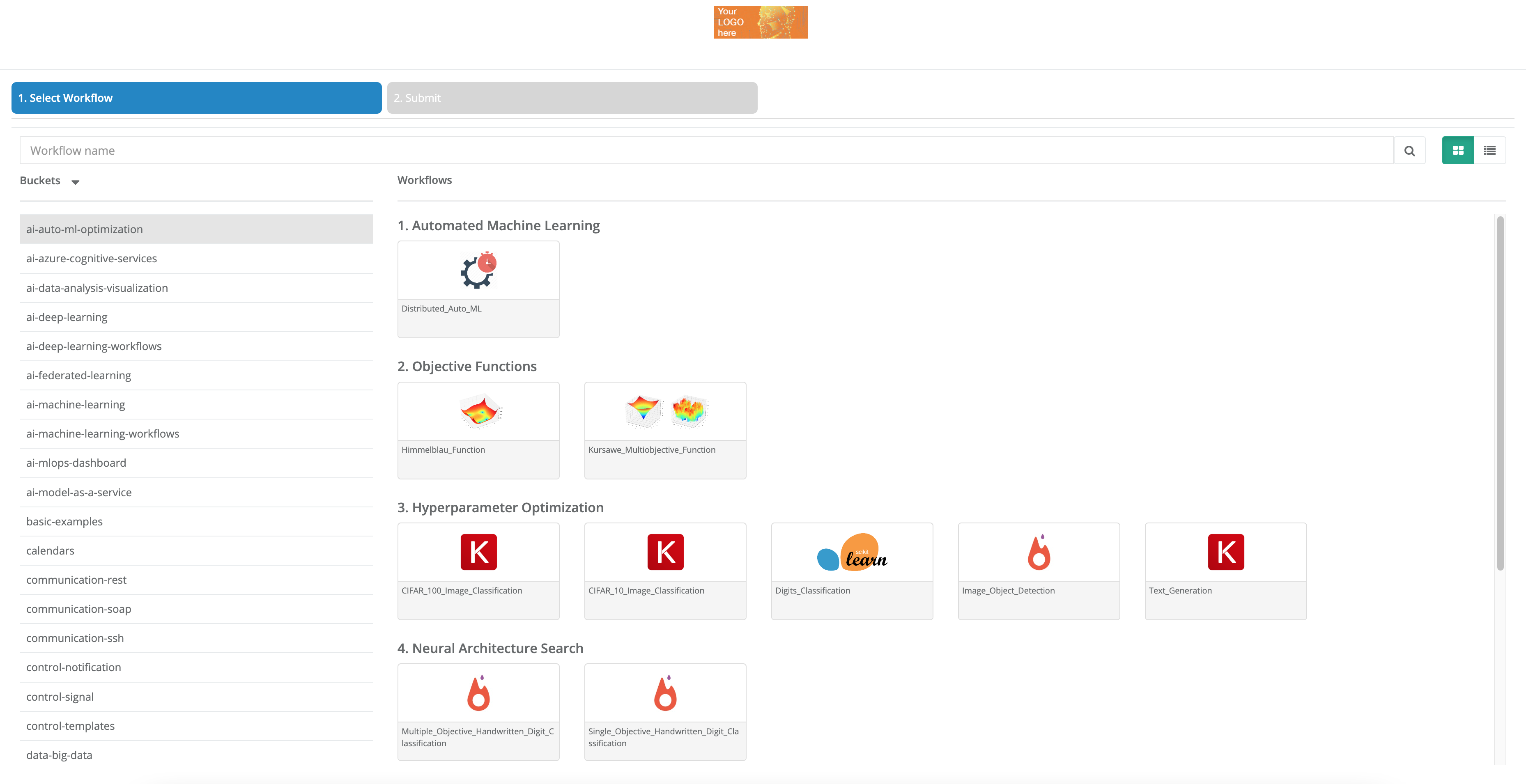

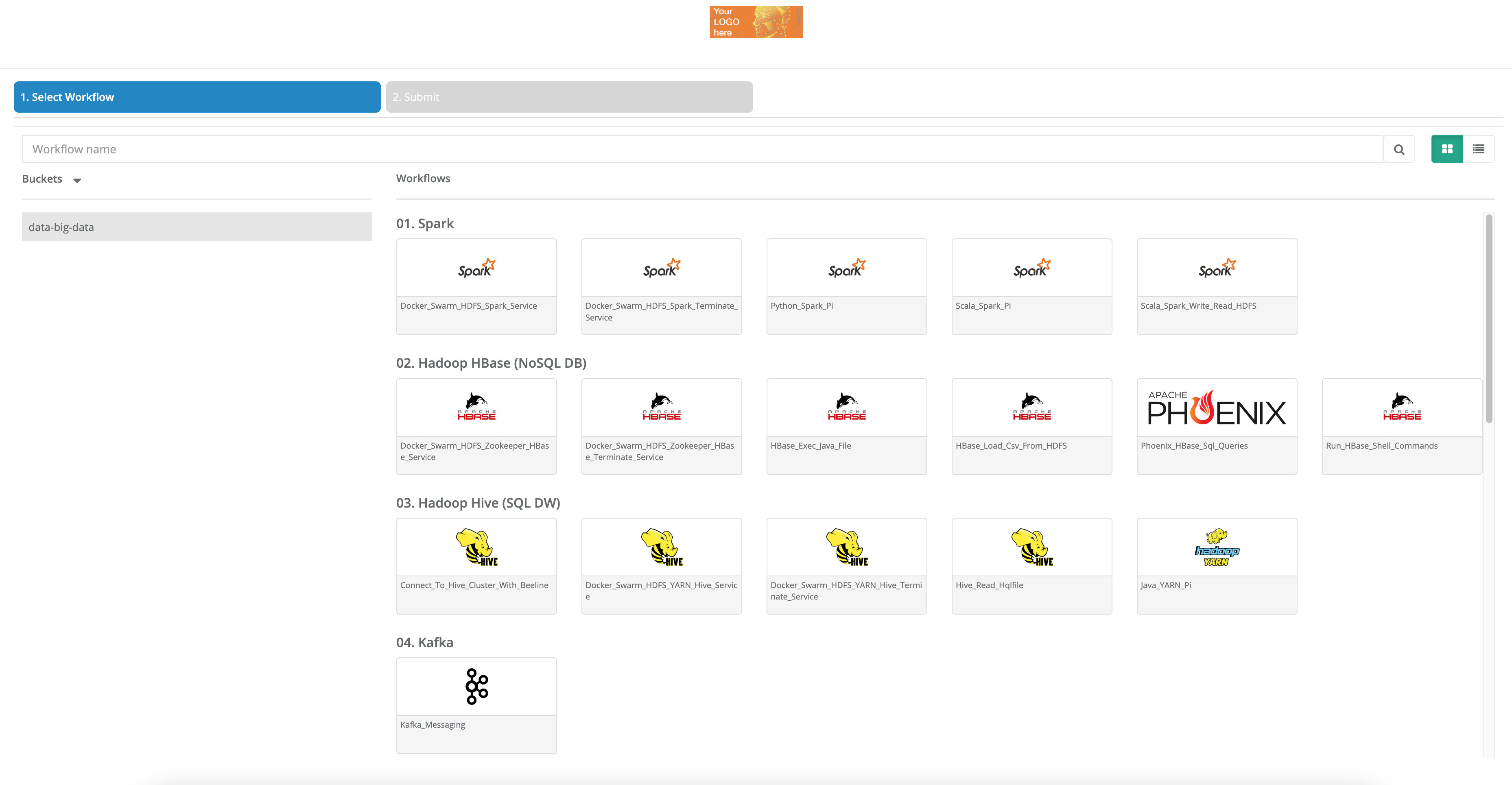

The screenshot above shows the Catalog Portal, where one can store Workflows, Calendars, Scripts, etc. Powerful versioning together with full access control (RBAC) is supported, and users can easily share Workflows and templates between teams and across environments (Dev, Test, Staging and Prod).

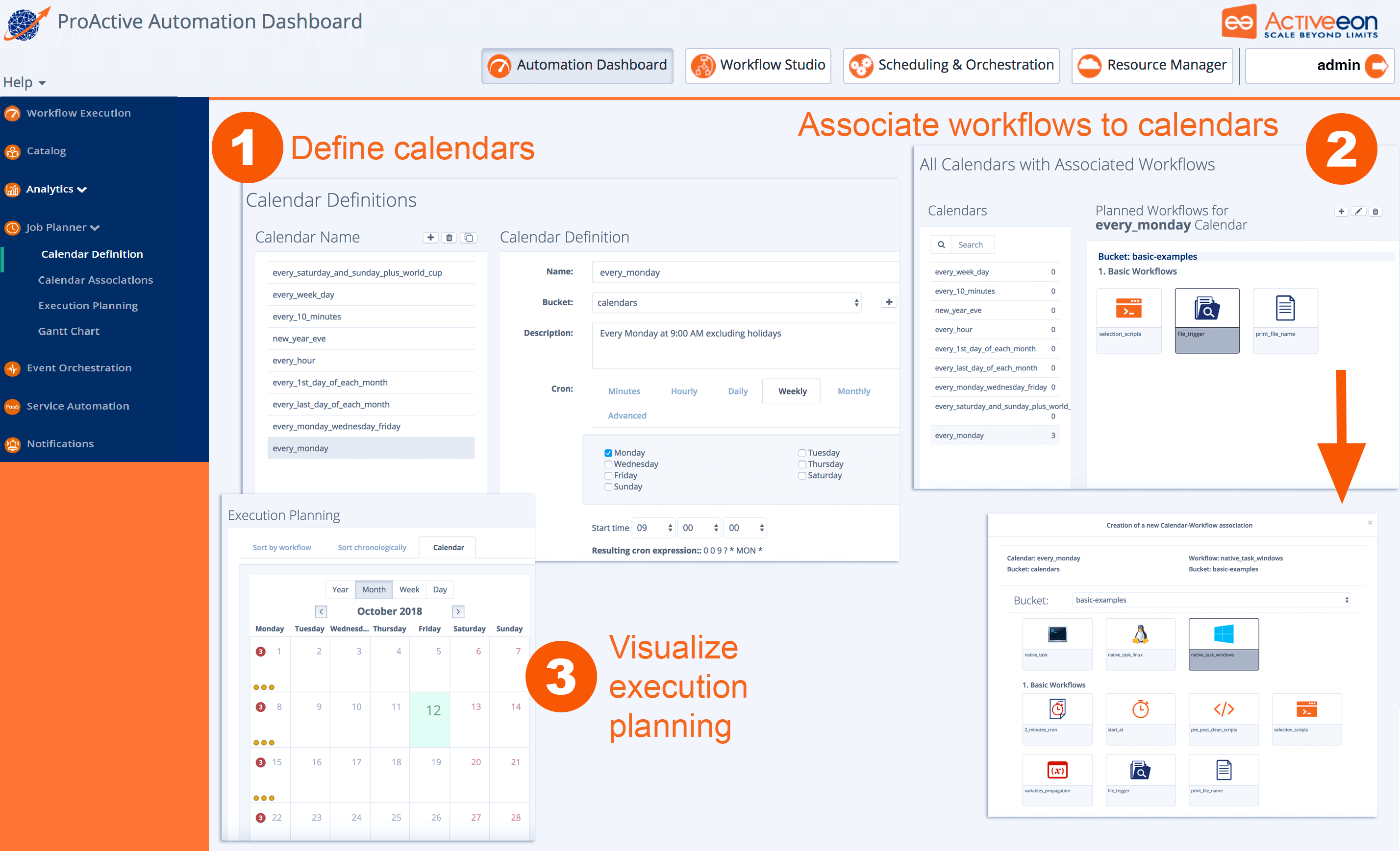

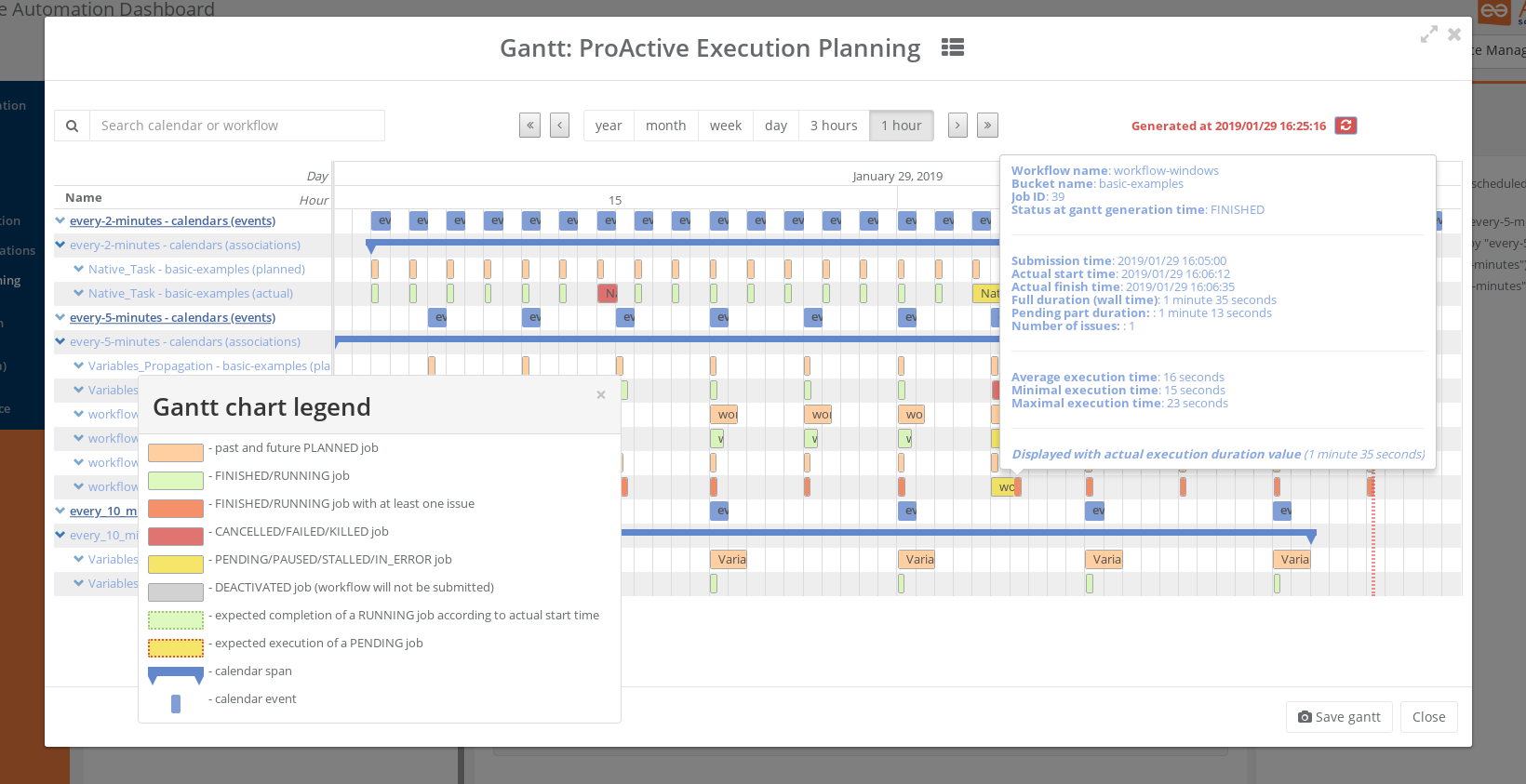

The screenshot above shows the Job Planner Portal, which allows you to automate and schedule recurring Jobs. From left to right, you can define and use Calendar Definitions, associate Workflows to calendars, and visualize the planned future executions as well as the actual past executions.

The Gantt screenshot above shows the Gantt View of Job Planner, featuring past Job history, the Jobs currently being executed, and future Jobs that will be submitted, all in a comprehensive interactive view. You can easily see the differences between the Planned Submission Time and the Actual Start Time of the Jobs, get estimations of the Finished Time, and visualize the Jobs that stayed PENDING for some time (in yellow) and the Jobs that had issues and got KILLED, CANCELLED, or FAILED (in red).

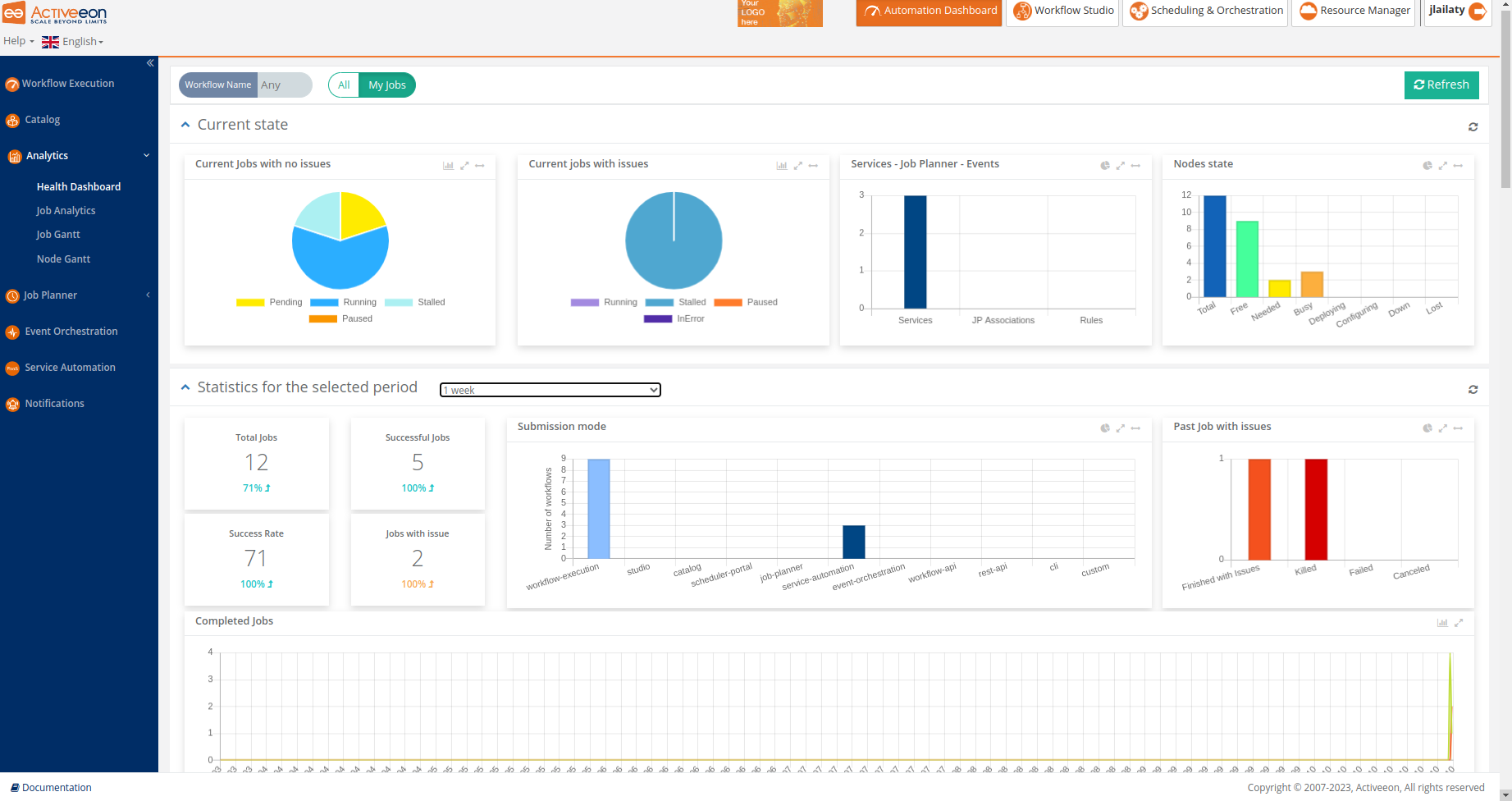

The screenshots above show the Health Dashboard Portal, a part of the Analytics service that displays global statistics and the current state of the ProActive server. Using this portal, users can monitor the status of critical resources, detect issues, and take proactive measures to ensure the efficient operation of workflows and services.

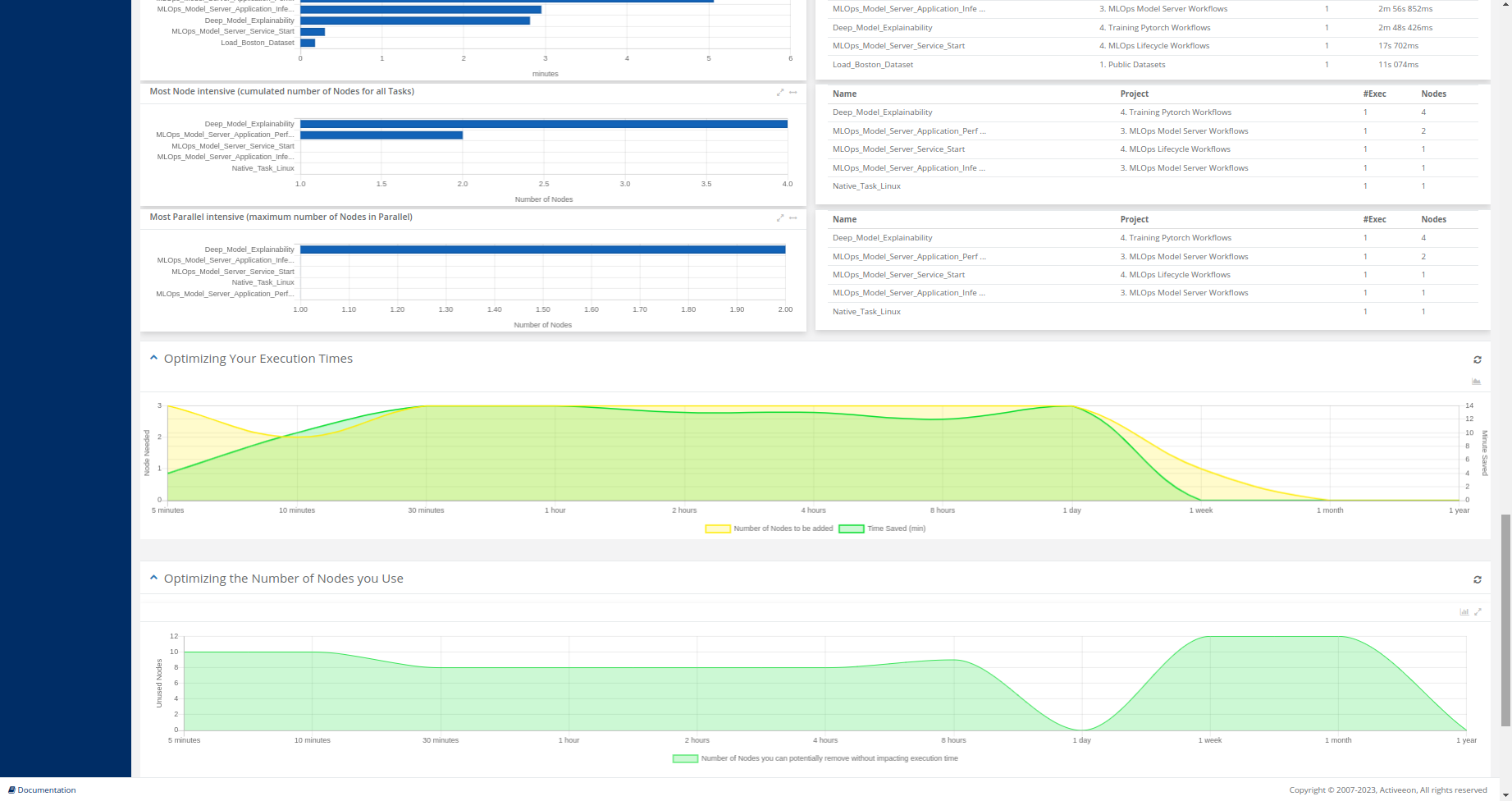

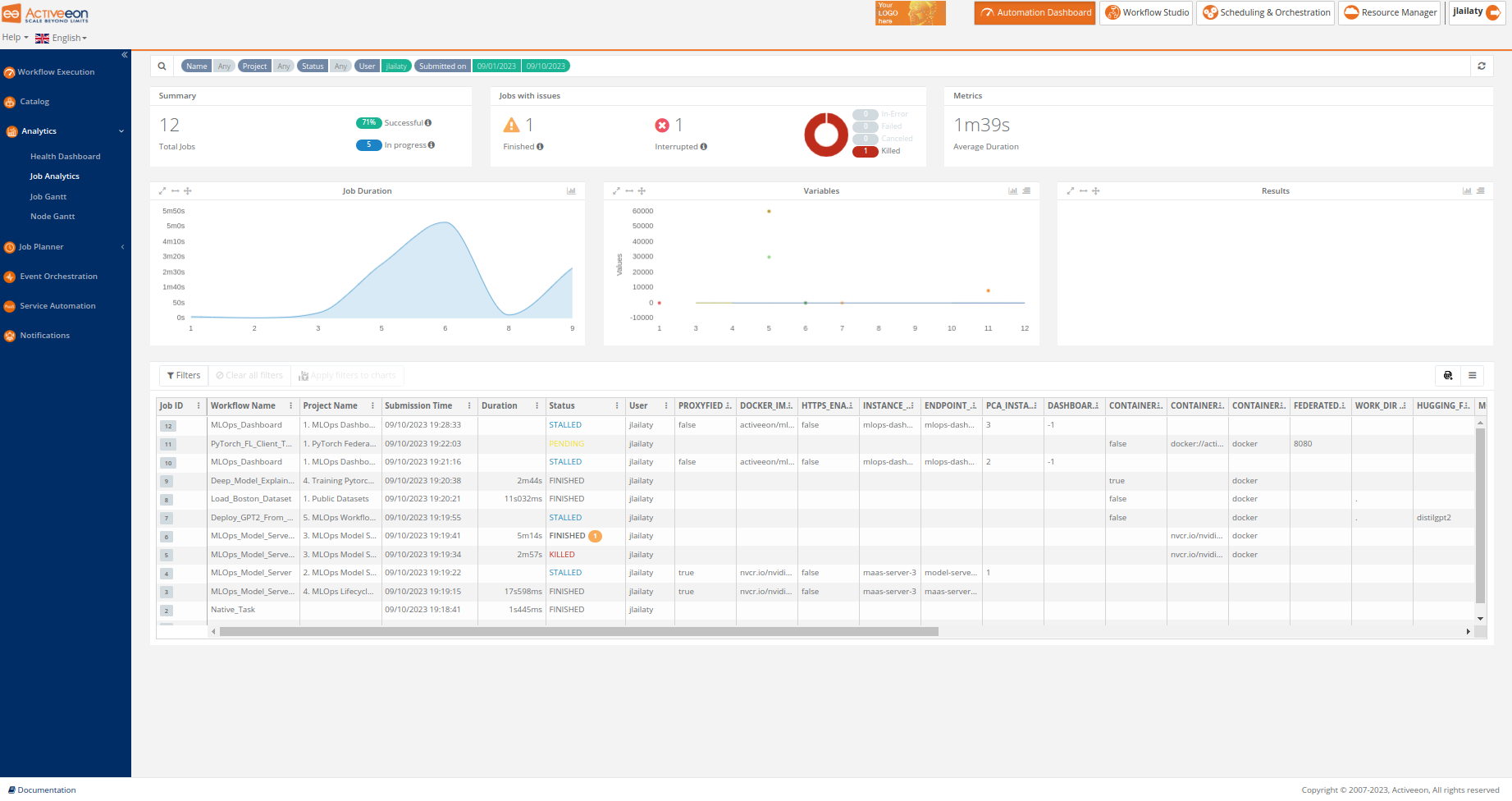

The screenshot above shows the Job Analytics Portal, which is a ProActive component, part of the Analytics service, that provides an overview of executed workflows along with their input variables and results.

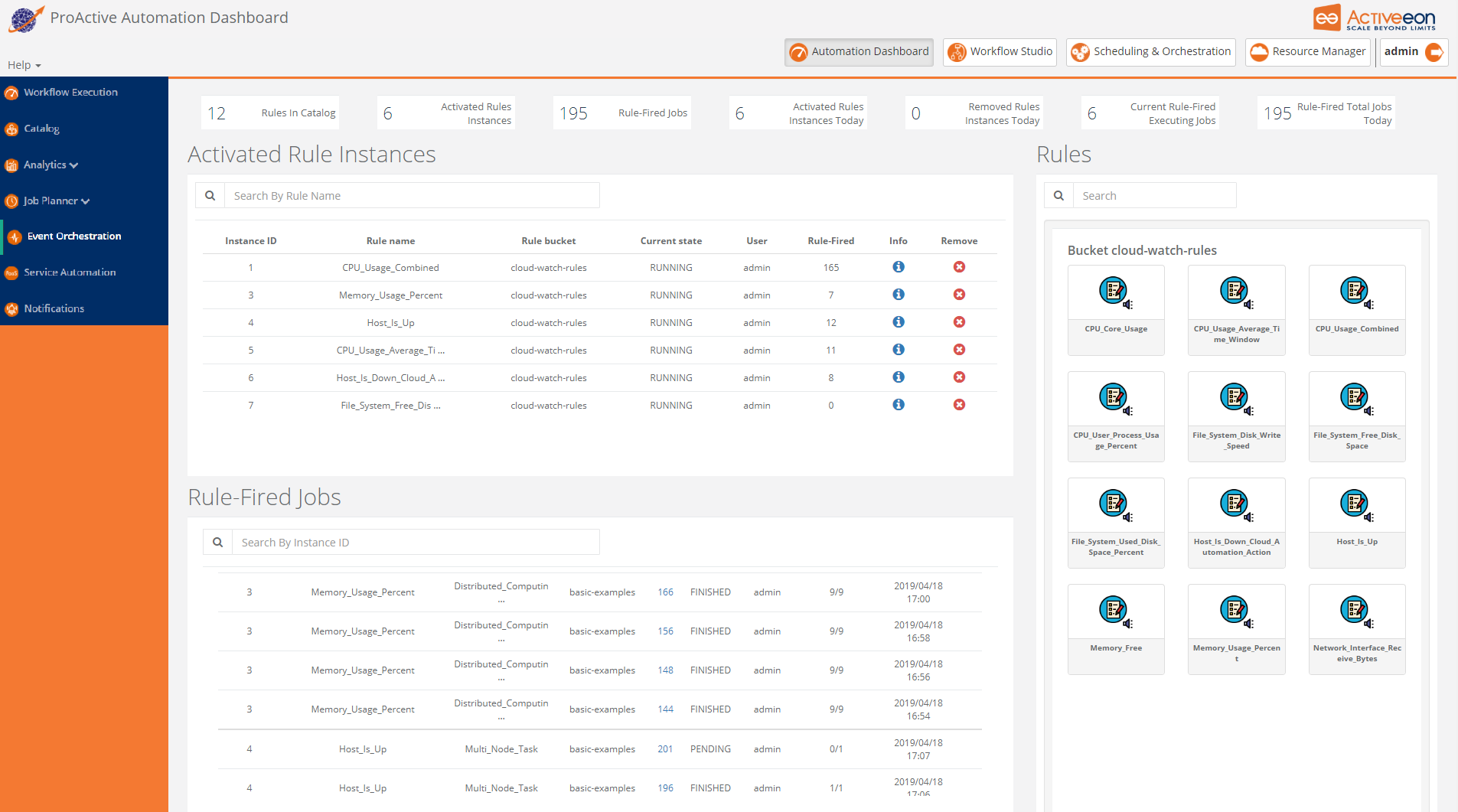

The screenshot above shows the Event Orchestration Portal, where a user can manage and benefit from Event Orchestration, a smart monitoring system. This ProActive component detects complex events and then triggers user-specified actions according to a predefined set of rules.

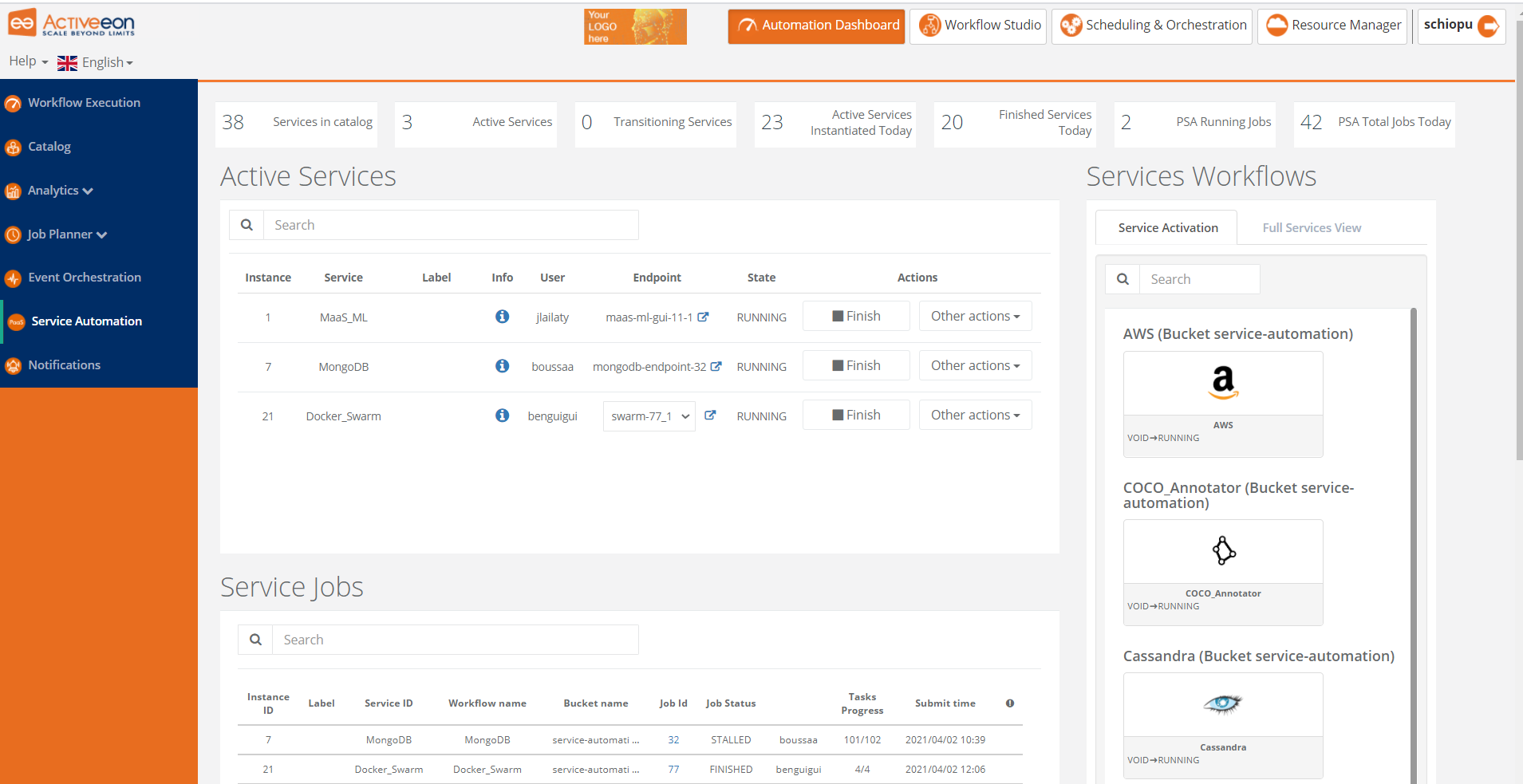

The screenshot above shows the Service Automation Portal which is actually a PaaS automation tool. It allows you to easily manage any On-Demand Services with full Life-Cycle Management (create, deploy, suspend, resume and terminate).

The screenshots above, taken from the Resource Manager Portal, show that you can configure ProActive Scheduler to dynamically scale the infrastructure up and down (e.g. the number of VMs you buy on Clouds) according to the actual workload to execute.

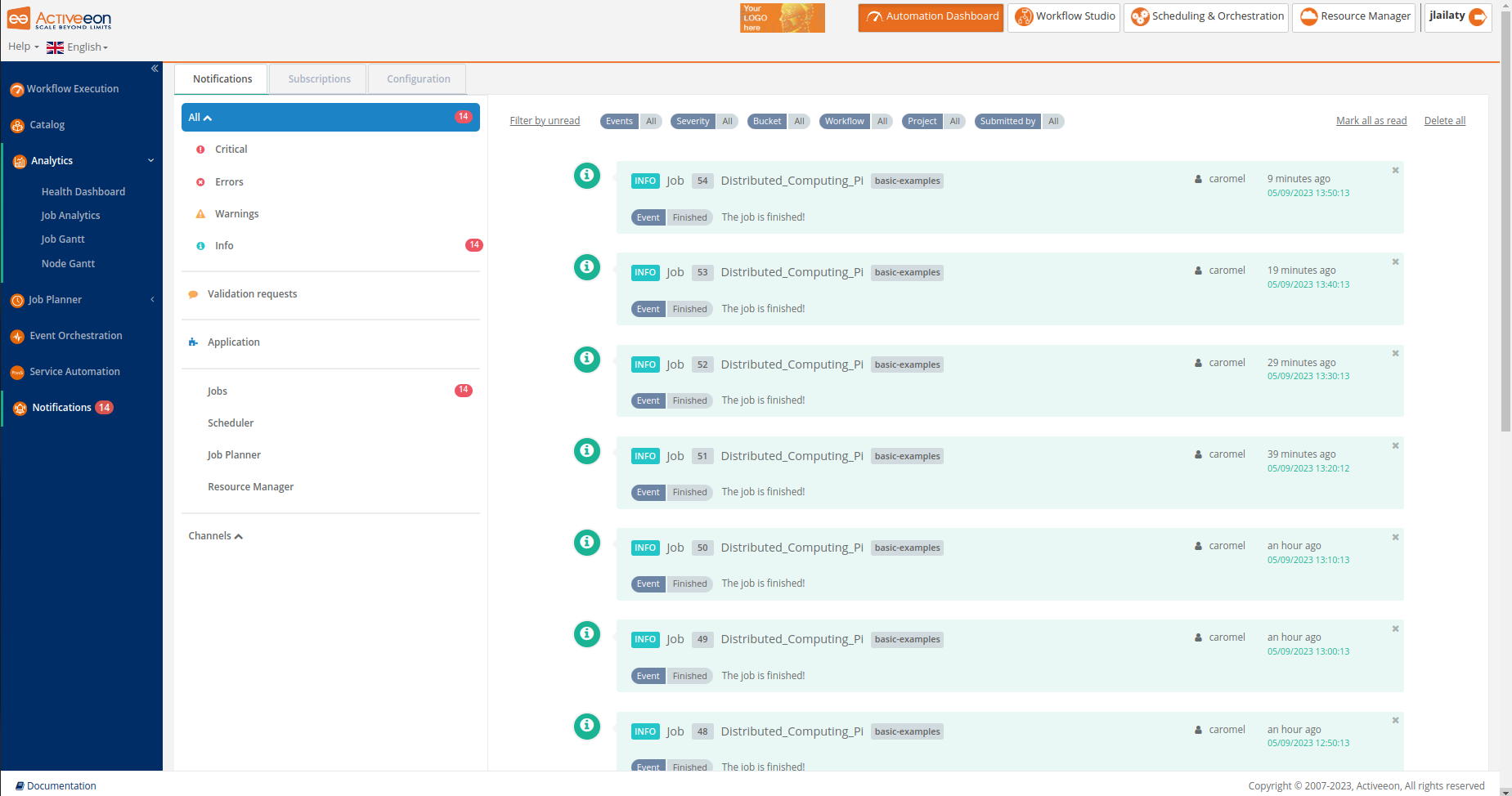

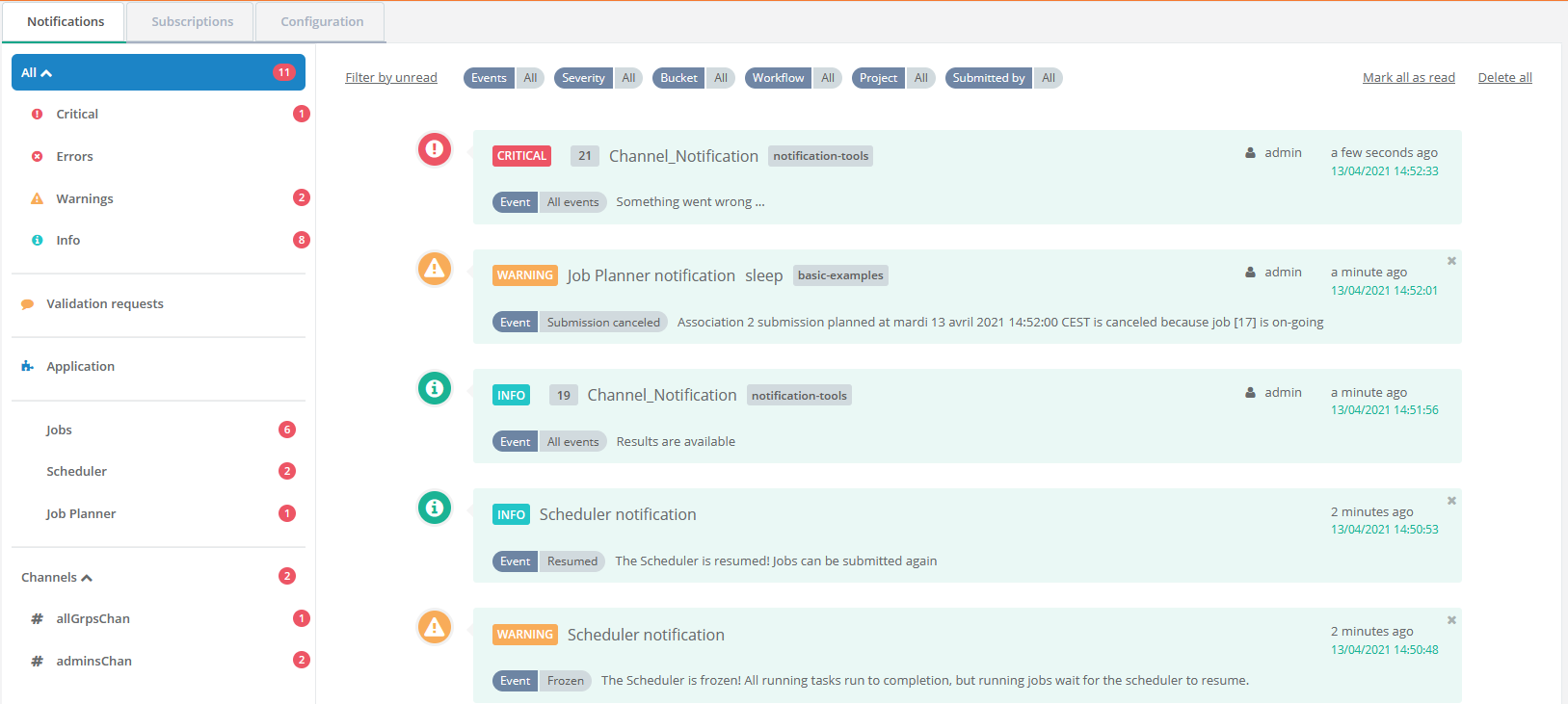

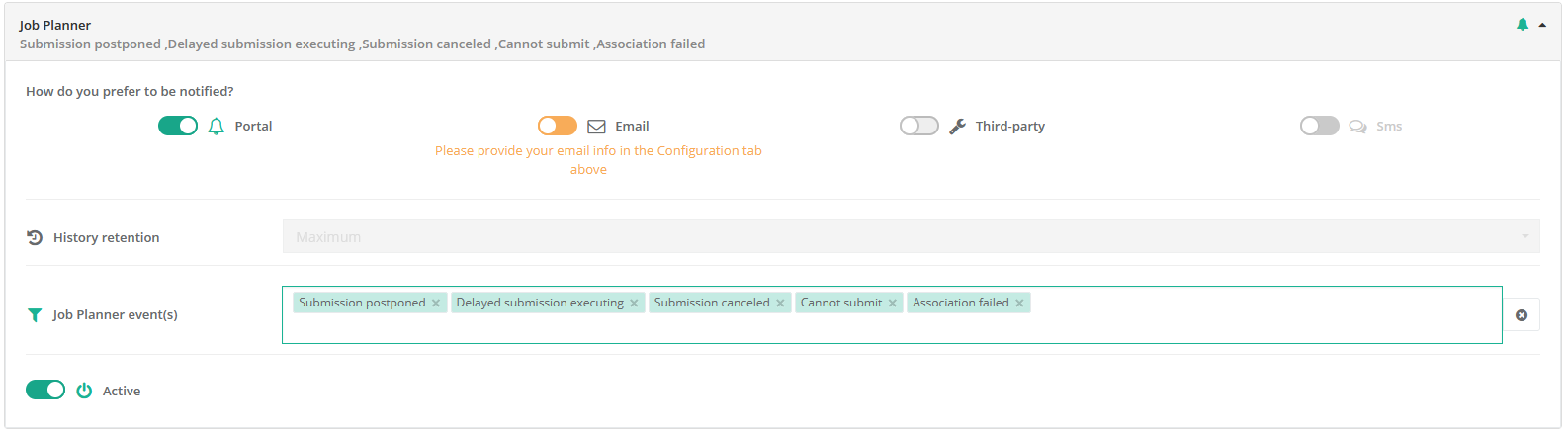

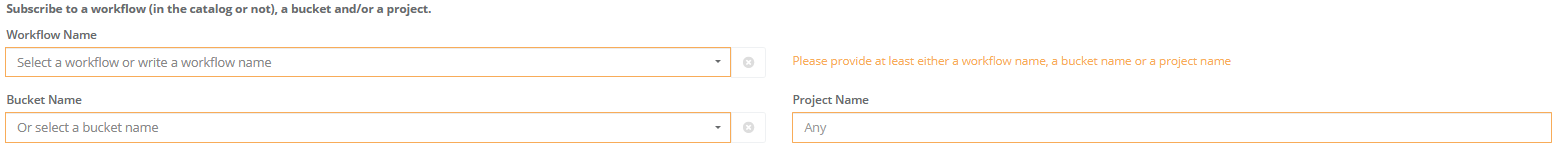

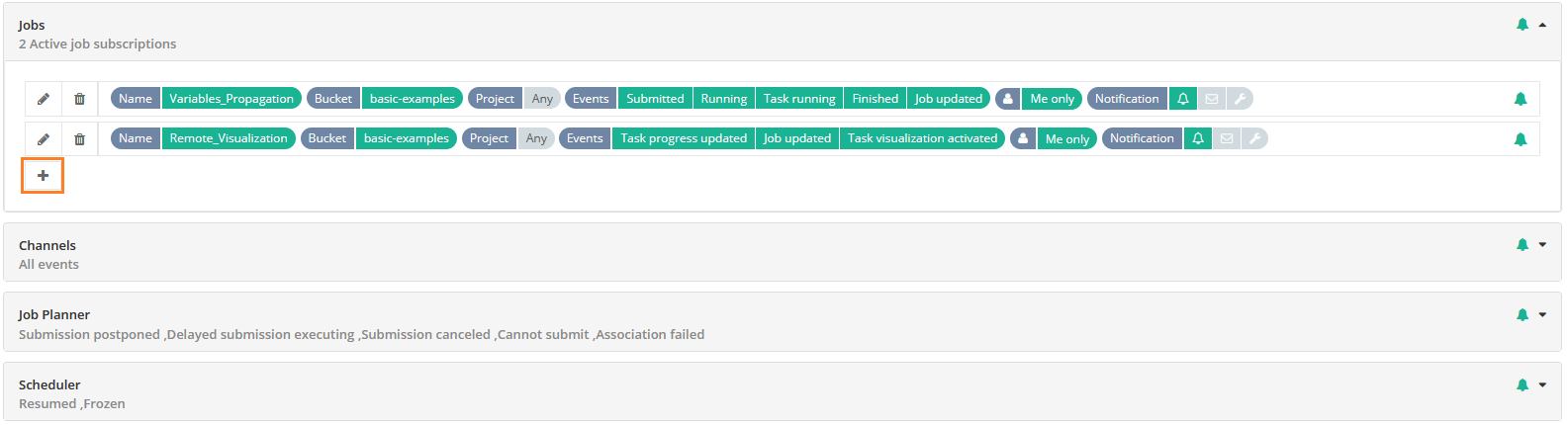

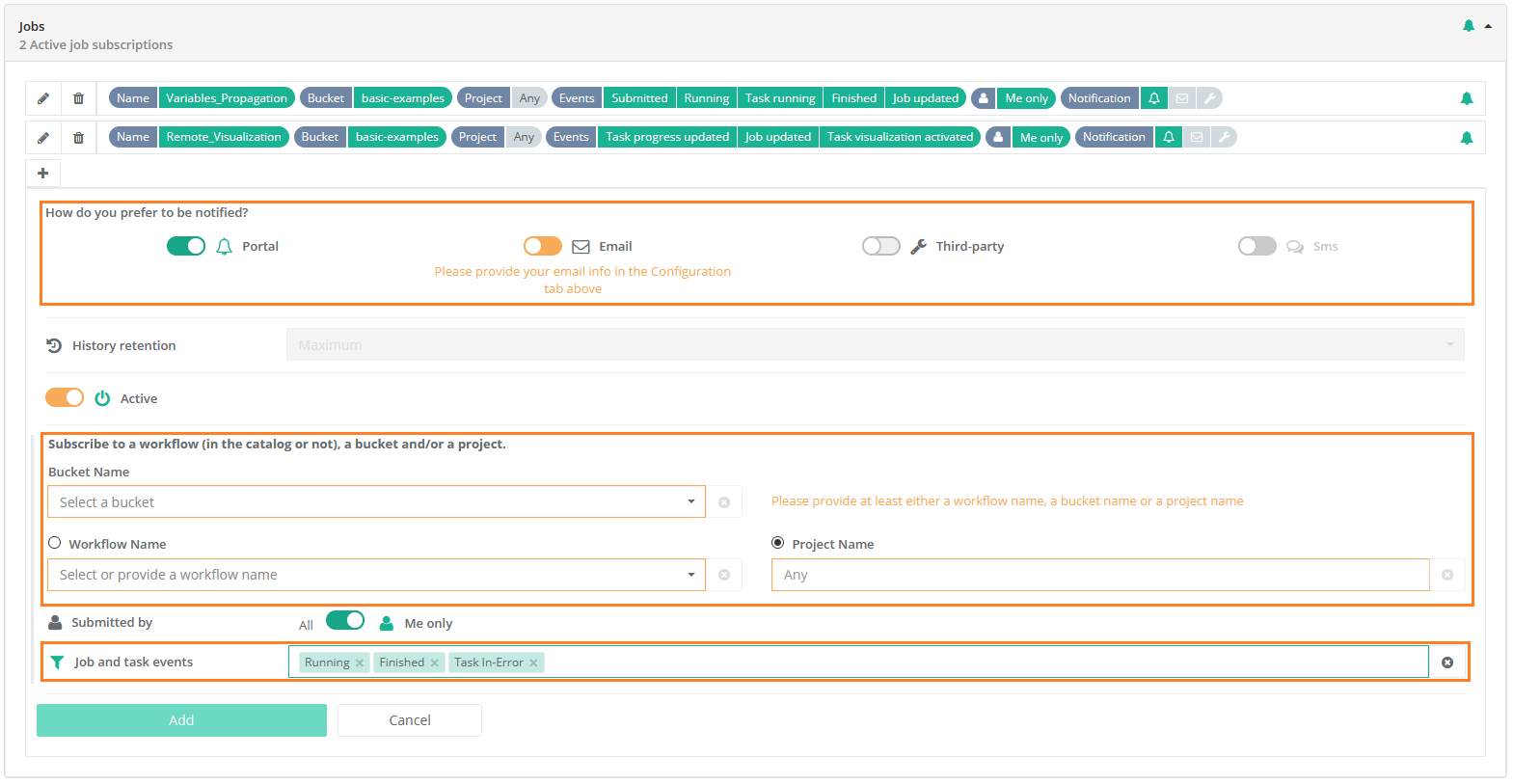

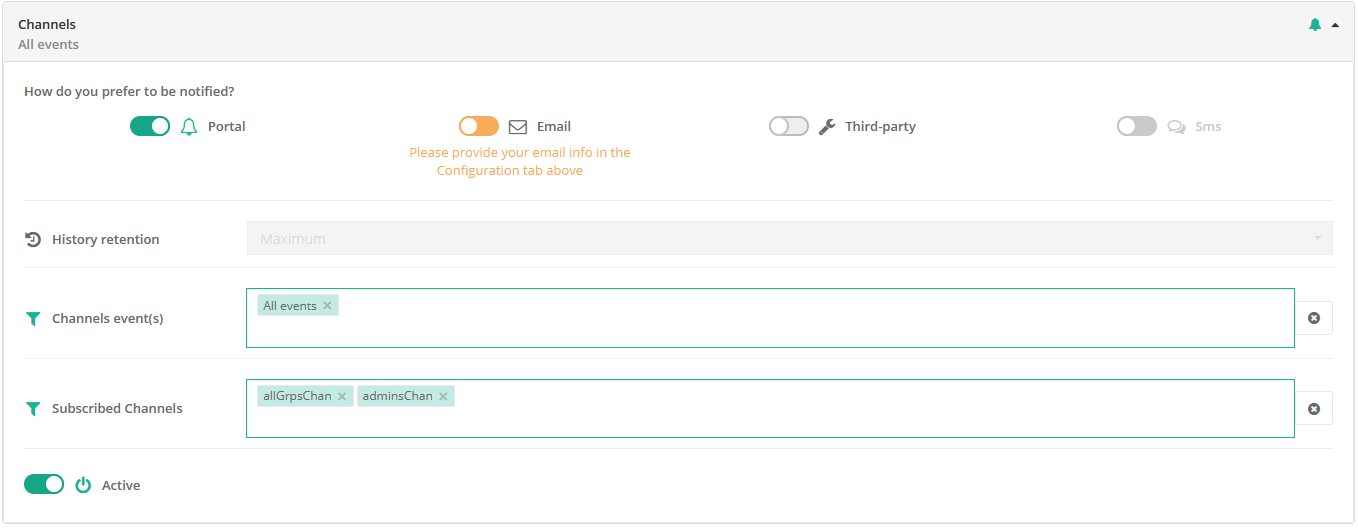

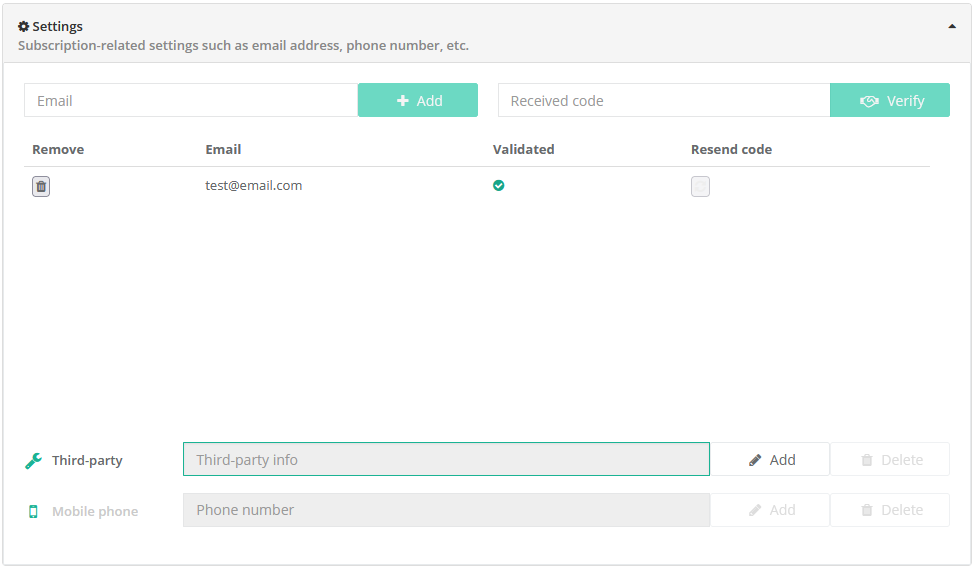

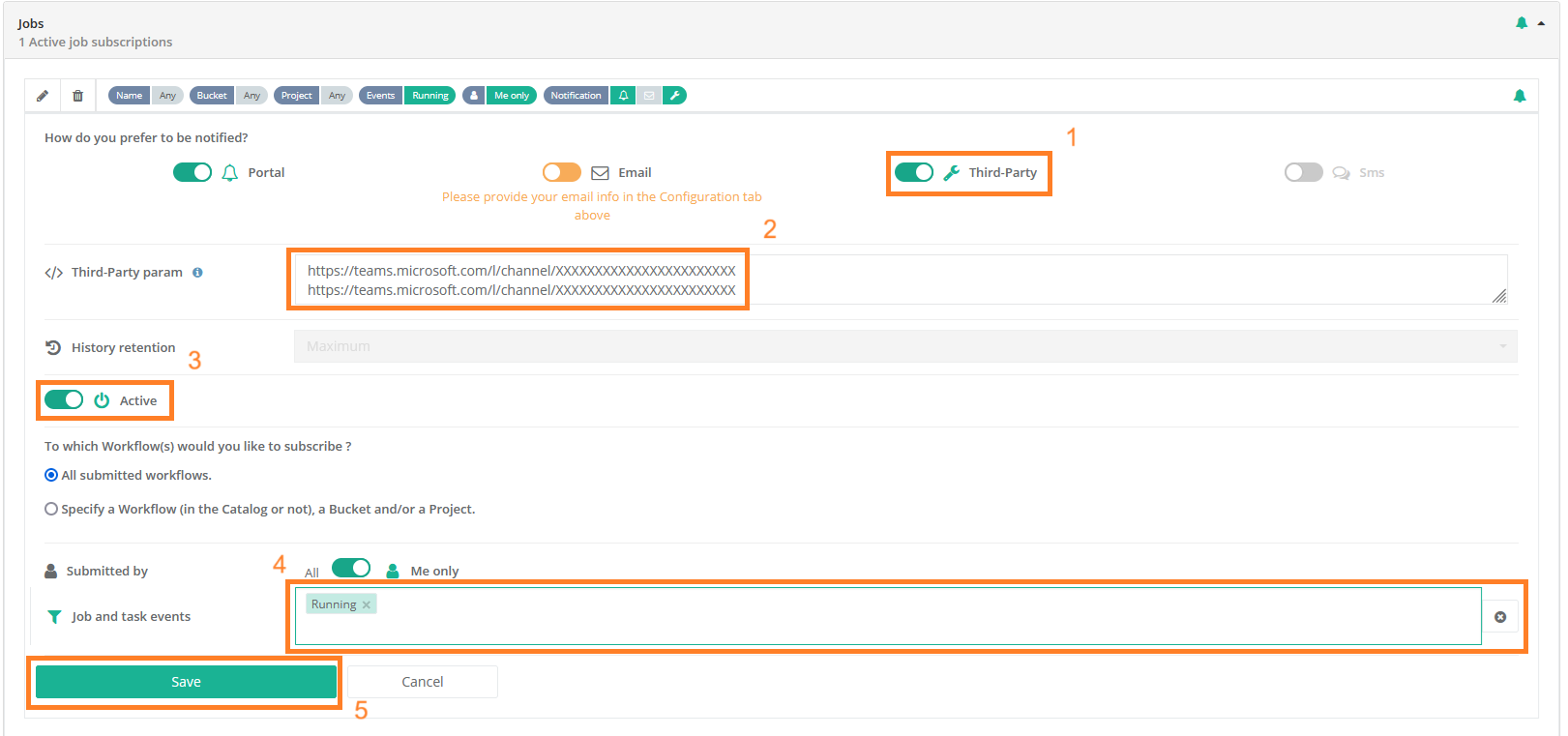

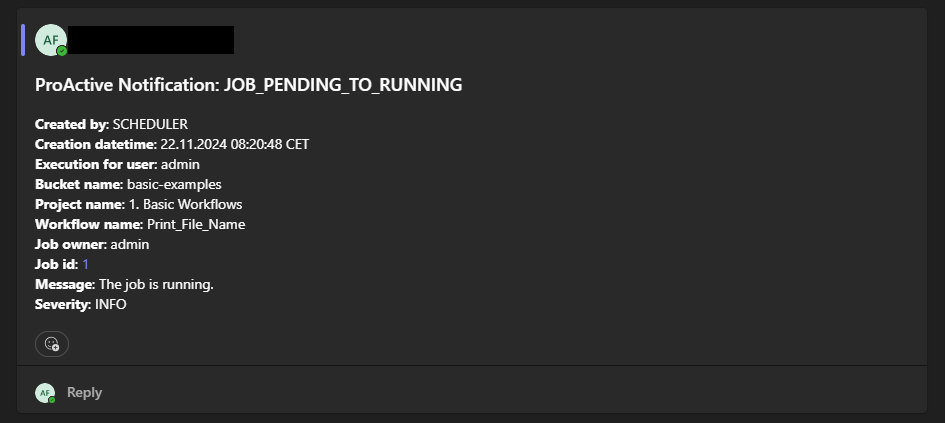

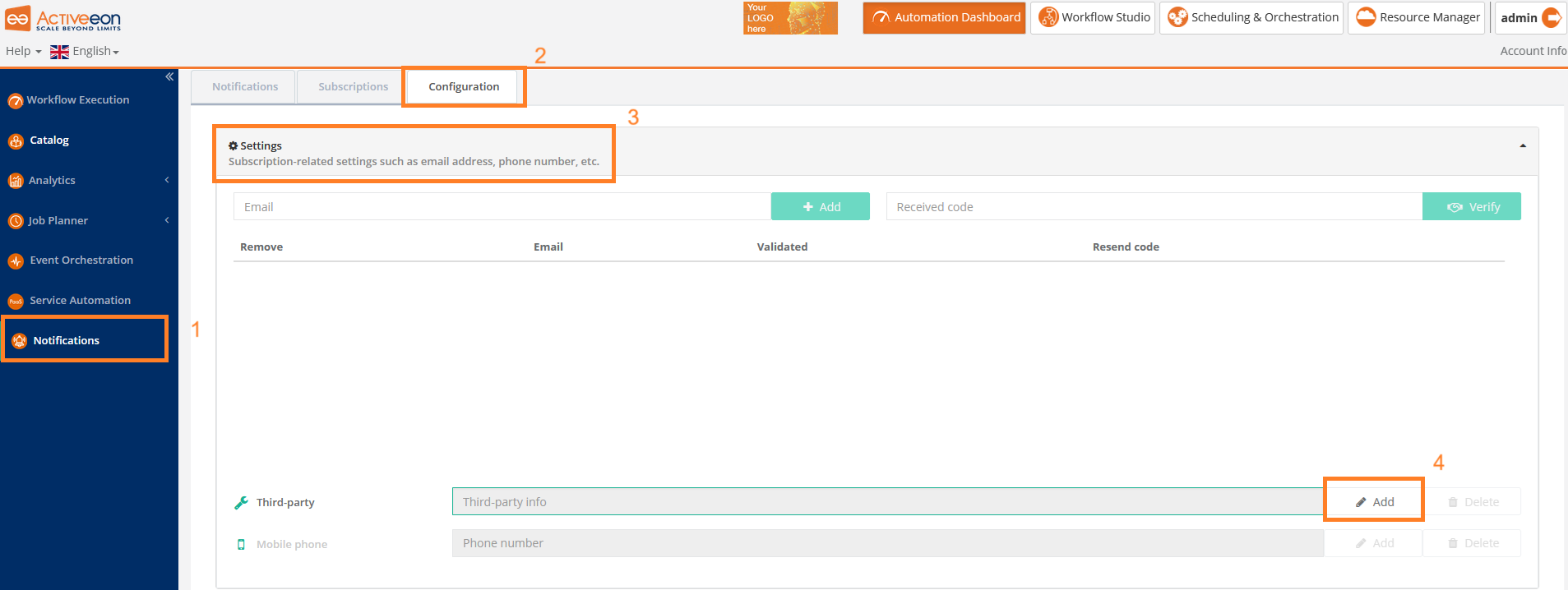

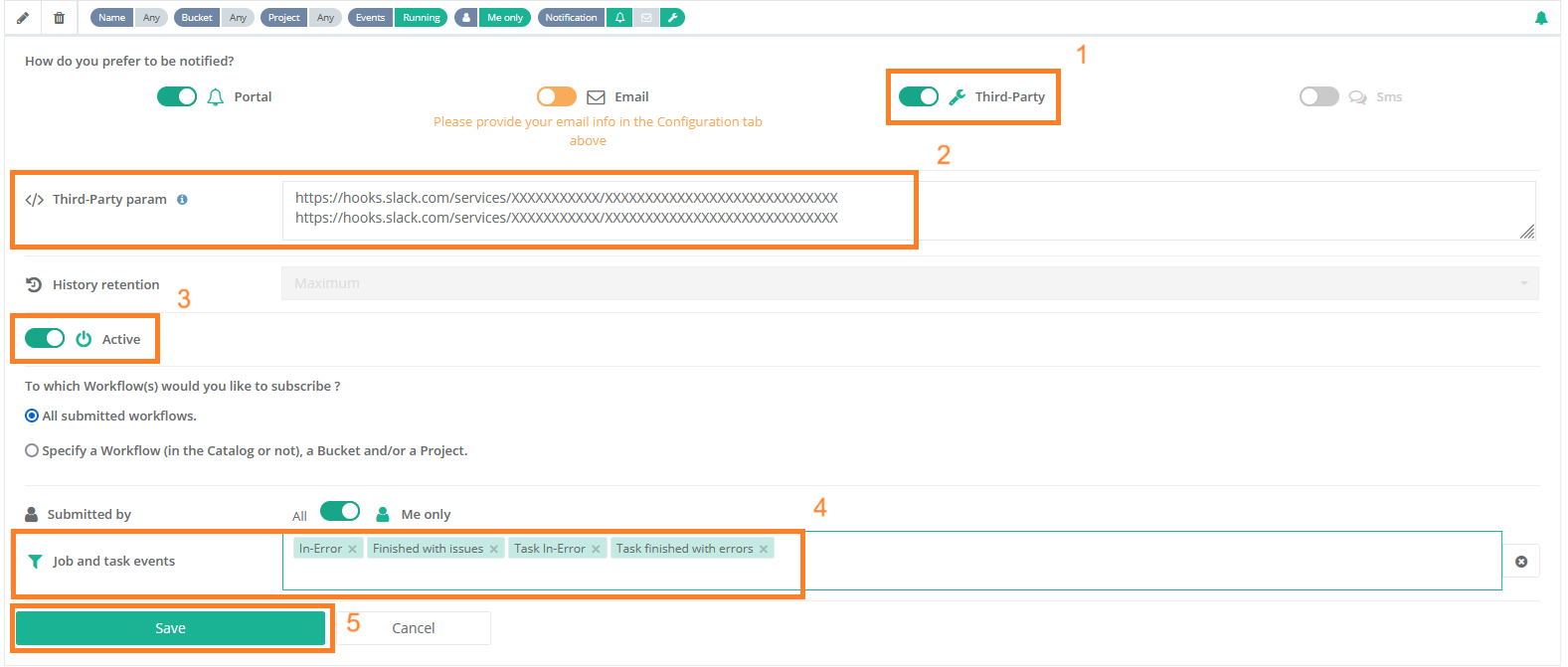

The screenshot above shows the Notifications Portal, which is a ProActive component that allows a user or a group of users to subscribe to different types of notifications (web, email, sms or a third-party notification) when certain event(s) occur (e.g., job In-Error state, job in Finished state, scheduler in Paused state, etc.).

1.1. Glossary

The following terms are used throughout the documentation:

- ProActive Workflows & Scheduling

-

The full distribution of ProActive for Workflows & Scheduling. It contains the ProActive Scheduler server, the REST & Web interfaces, and the command line tools. It is the commercial product name.

- ProActive Scheduler

-

Can refer to any of the following:

-

A complete set of ProActive components.

-

An archive that contains a released version of ProActive components, for example activeeon_enterprise-pca_server-OS-ARCH-VERSION.zip.

-

A set of server-side ProActive components installed and running on a Server Host.

- Resource Manager

-

ProActive component that manages ProActive Nodes running on Compute Hosts.

- Scheduler

-

ProActive component that accepts Jobs from users, orders the constituent Tasks according to priority and resource availability, and eventually executes them on the resources (ProActive Nodes) provided by the Resource Manager.

| Please note the difference between Scheduler and ProActive Scheduler. |

- REST API

-

ProActive component that provides RESTful API for the Resource Manager, the Scheduler and the Catalog.

- Resource Manager Portal

-

ProActive component that provides a web interface to the Resource Manager. Also called Resource Manager portal.

- Scheduler Portal

-

ProActive component that provides a web interface to the Scheduler. Also called Scheduler portal.

- Workflow Studio

-

ProActive component that provides a web interface for designing Workflows.

- Automation Dashboard

-

Centralized interface for the following web portals: Workflow Execution, Catalog, Analytics, Job Planner, Service Automation, Event Orchestration and Notifications.

- Analytics

-

Web interface and ProActive component, responsible for gathering monitoring information about ProActive Scheduler, Jobs and ProActive Nodes.

- Job Analytics

-

ProActive component, part of the Analytics service, that provides an overview of executed workflows along with their input variables and results.

- Node Gantt

-

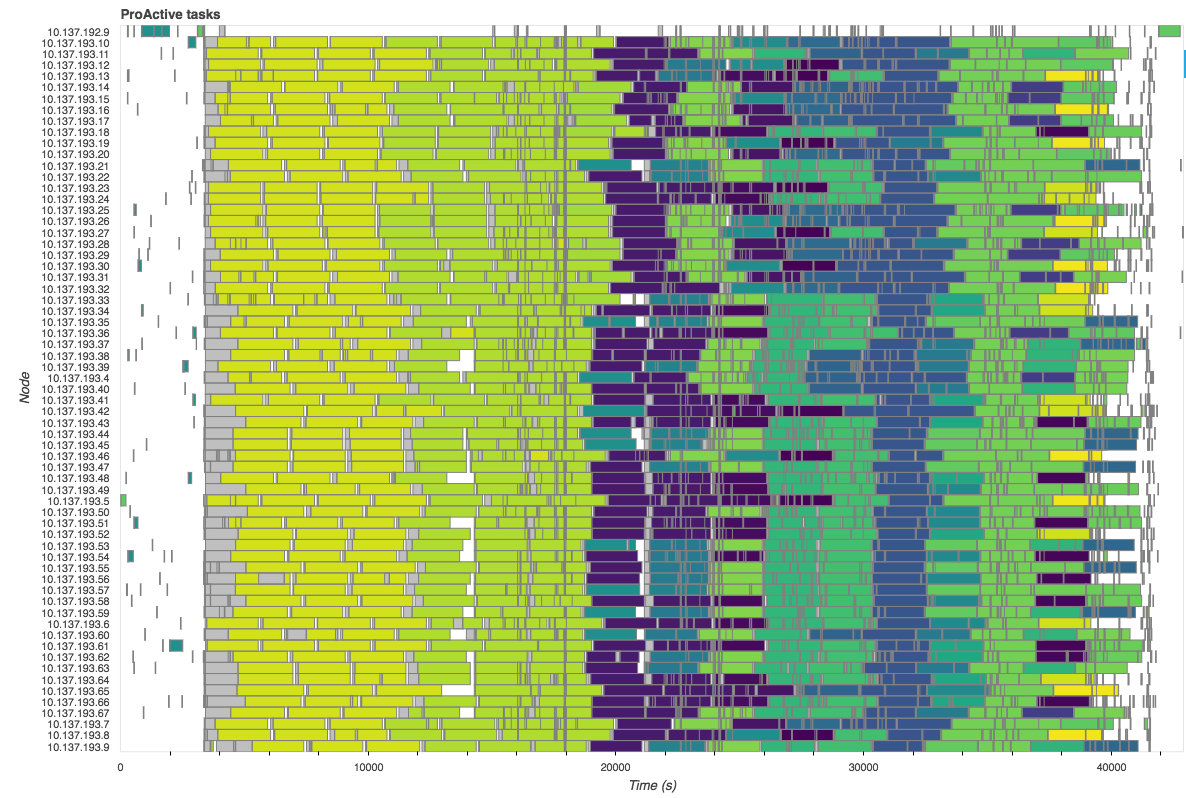

ProActive component, part of the Analytics service, that provides an overview of ProActive Nodes usage over time.

- Health Dashboard

-

Web interface, part of the Analytics service, that displays global statistics and current state of the ProActive server.

- Job Analytics portal

-

Web interface of the Analytics component.

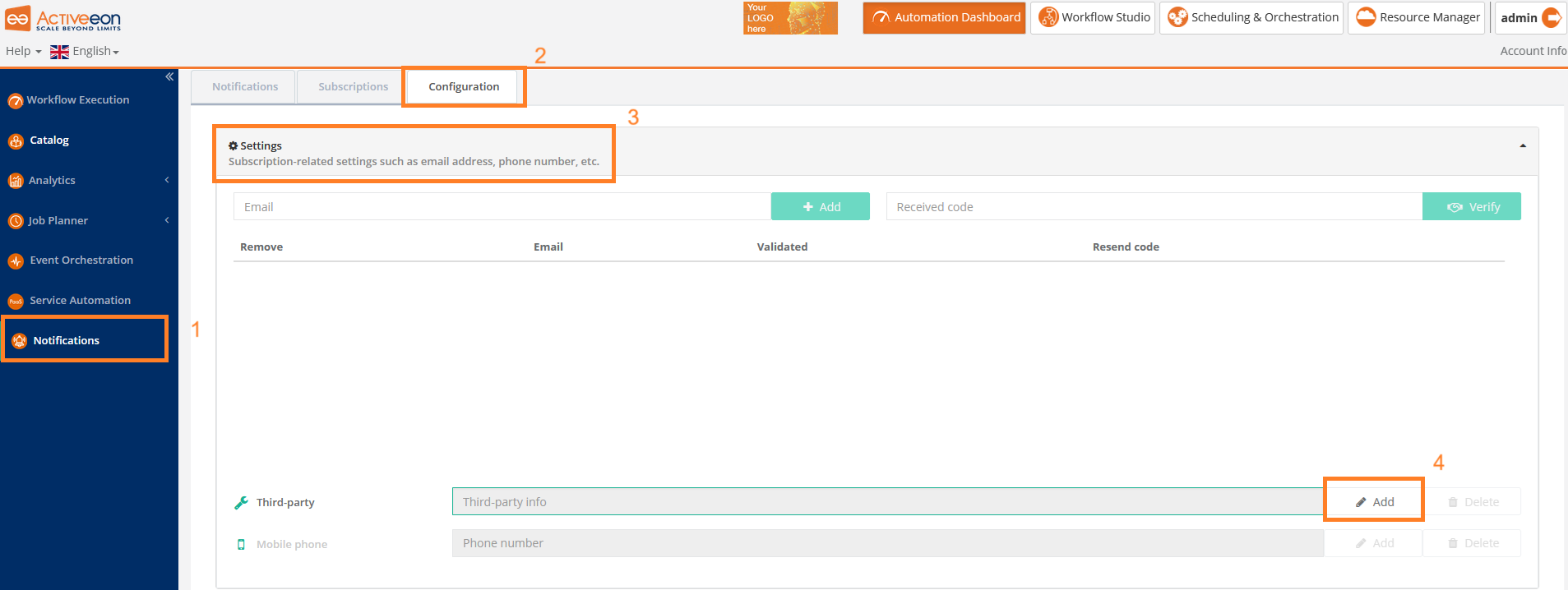

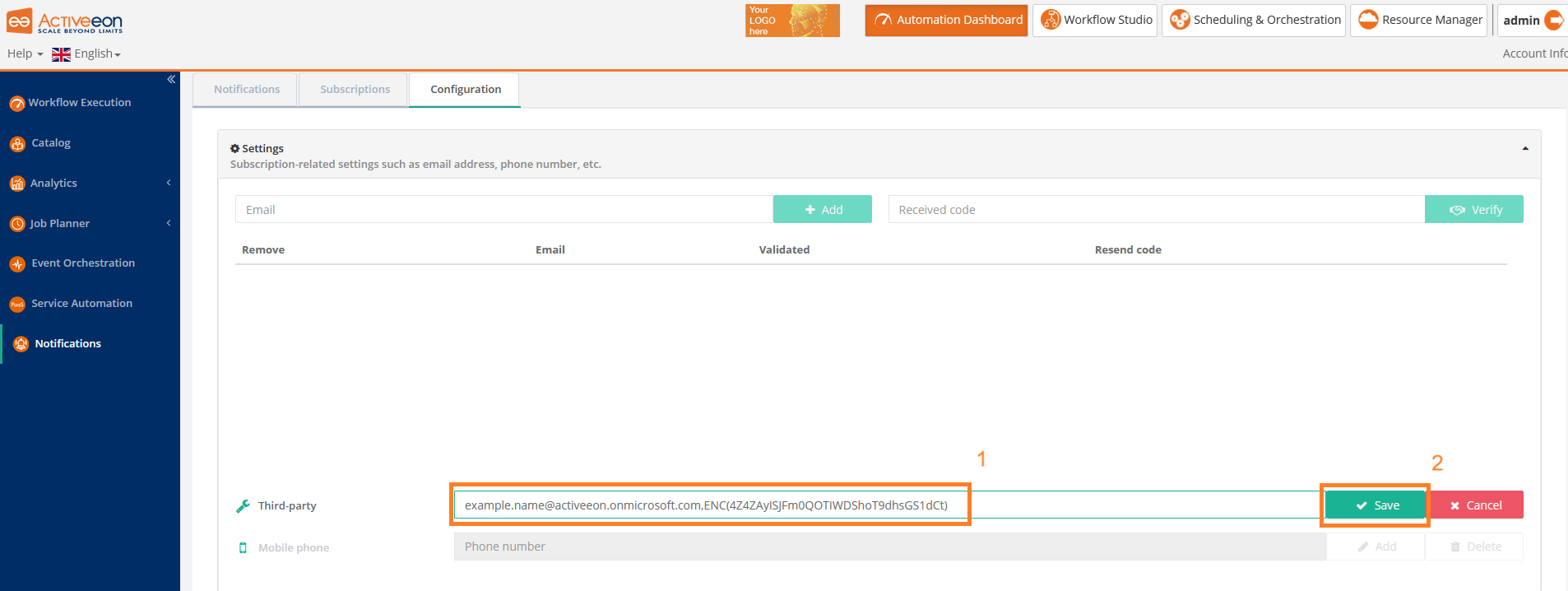

- Notification Service

-

ProActive component that allows a user or a group of users to subscribe to different types of notifications (web, email, sms or a third-party notification) when certain event(s) occur (e.g., job In-Error state, job in Finished state, scheduler in Paused state, etc.).

- Notification portal

-

Web interface to visualize notifications generated by the Notification Service and manage subscriptions.

- Notification Subscription

-

In Notification Service, a subscription is a per-user configuration to receive notifications for a particular type of events.

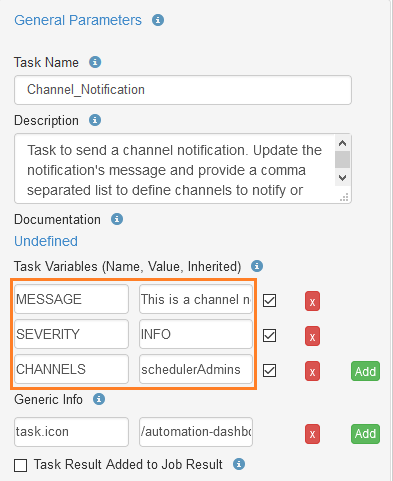

- Third Party Notification

-

A Notification Method which executes a script when the notification is triggered.

- Notification Method

-

Element of a Notification Subscription which defines how a user is notified (portal, email, third-party, etc.).

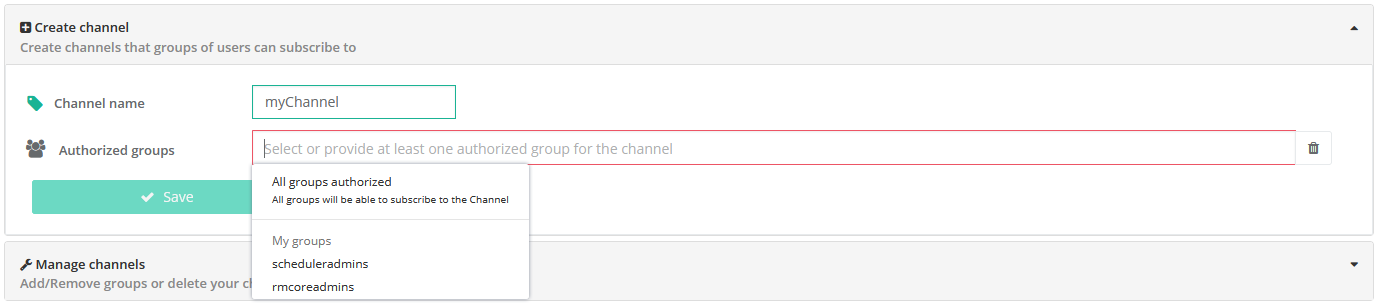

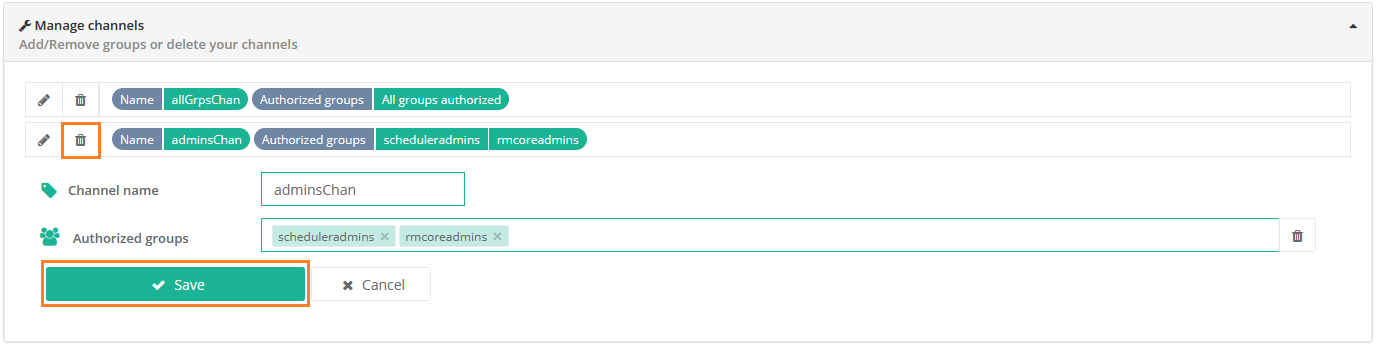

- Notification Channel

-

In the Notification Service, a channel is a notification container that can be notified and displays notifications to groups of users

- Service Automation

-

ProActive component that allows a user to easily manage any On-Demand Services (PaaS, IaaS and MaaS) with full Life-Cycle Management (create, deploy, pause, resume and terminate).

- Service Automation portal

-

Web interface of the Service Automation.

- Workflow Execution portal

-

Web interface that is the main portal of ProActive Workflows & Scheduling and the entry point for end-users to submit workflows manually, monitor their executions and access job outputs, results, services endpoints, etc.

- Catalog portal

-

Web interface of the Catalog component.

- Event Orchestration

-

ProActive component that monitors a system according to a predefined set of rules, detects complex events and then triggers user-specified actions.

- Event Orchestration portal

-

Web interface of the Event Orchestration component.

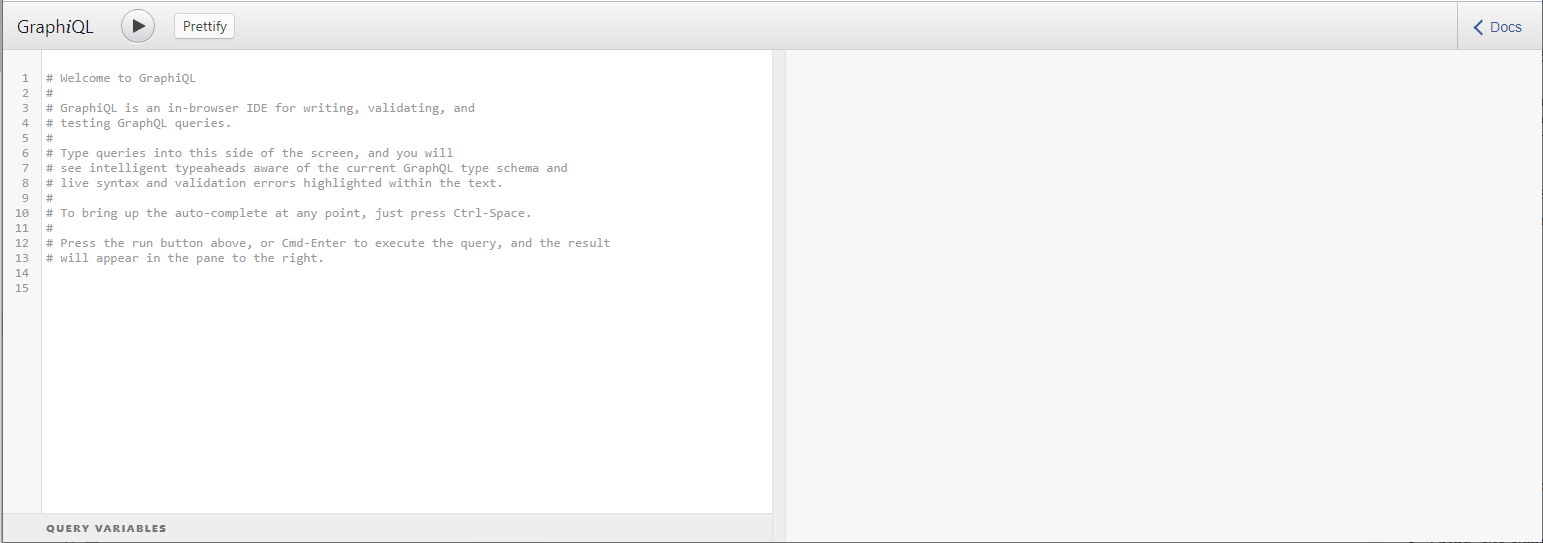

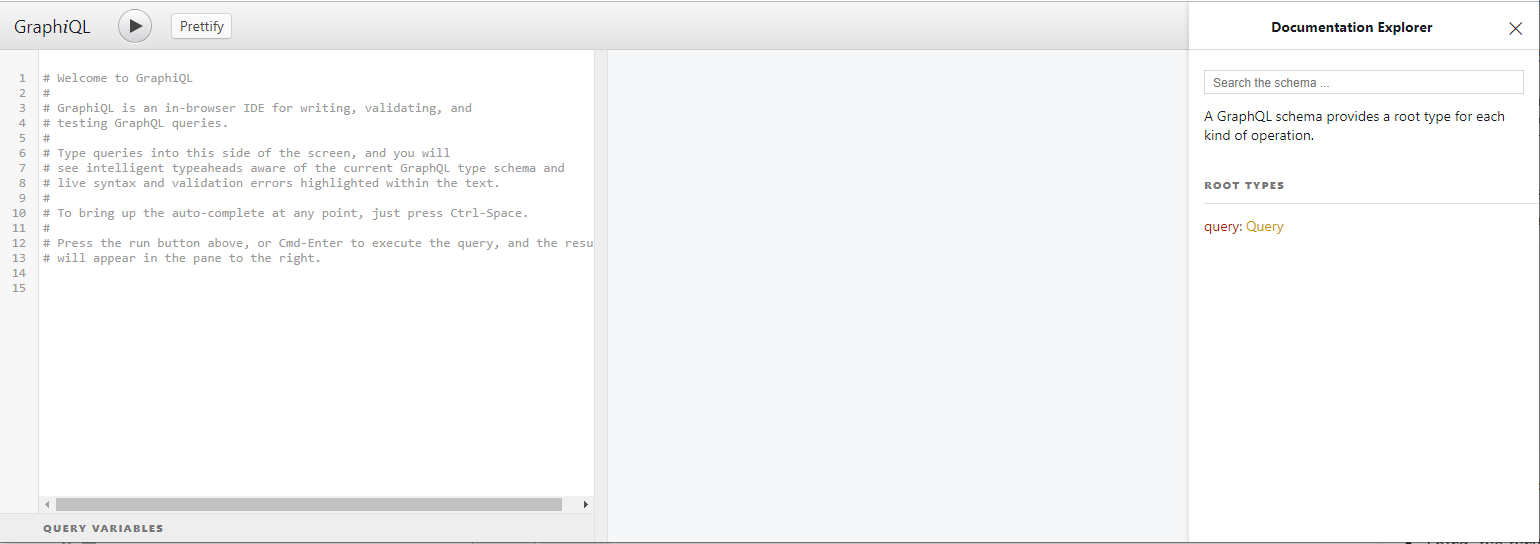

- Scheduling API

-

ProActive component that offers a GraphQL endpoint for getting information about a ProActive Scheduler instance.

- Connector IaaS

-

ProActive component that performs CRUD operations on different infrastructures in public or private Clouds (AWS EC2, OpenStack, VMware, Kubernetes, etc.).

- Job Planner

-

ProActive component providing advanced scheduling options for Workflows.

- Job Planner portal

-

Web interface to manage the Job Planner service.

- Calendar Definition portal

-

Web interface, component of the Job Planner, that allows users to create Calendar Definitions.

- Calendar Association portal

-

Web interface, component of the Job Planner, that defines Workflows associated to Calendar Definitions.

- Execution Planning portal

-

Web interface, component of the Job Planner, that shows future executions per calendar.

- Job Planner Gantt portal

-

Web interface, component of the Job Planner, that shows past and future executions on a Gantt diagram.

- Bucket

-

ProActive notion used with the Catalog to refer to a specific collection of ProActive Objects and in particular ProActive Workflows.

- Server Host

-

The machine on which ProActive Scheduler is installed.

- SCHEDULER_ADDRESS

-

The IP address of the Server Host.

- ProActive Node

-

One ProActive Node can execute one Task at a time. This concept is often tied to the number of cores available on a Compute Host. We assume a task consumes one core (more is possible, see multi-nodes tasks), so on a 4-core machine you might want to run 4 ProActive Nodes. One (by default) or more ProActive Nodes can be executed in a Java process on the Compute Hosts and will communicate with the ProActive Scheduler to execute tasks. We distinguish two types of ProActive Nodes:

-

Server ProActive Nodes: Nodes that are running on the same host as the ProActive server;

-

Remote ProActive Nodes: Nodes that are running on machines other than the ProActive server.

- Compute Host

-

Any machine which is meant to provide computational resources to be managed by the ProActive Scheduler. One or more ProActive Nodes need to be running on the machine for it to be managed by the ProActive Scheduler.

|

Examples of Compute Hosts:

|

- Node Source

-

A set of ProActive Nodes deployed using the same deployment mechanism and sharing the same access policy.

- Node Source Infrastructure

-

The configuration attached to a Node Source which defines the deployment mechanism used to deploy ProActive Nodes.

- Node Source Policy

-

The configuration attached to a Node Source which defines the ProActive Nodes acquisition and access policies.

- Scheduling Policy

-

The policy used by the ProActive Scheduler to determine how Jobs and Tasks are scheduled.

- PROACTIVE_HOME

-

The path to the extracted archive of ProActive Scheduler release, either on the Server Host or on a Compute Host.

- Workflow

-

User-defined representation of a distributed computation. Consists of the definitions of one or more Tasks and their dependencies.

- Workflow Revision

-

ProActive concept that reflects the changes made to a Workflow during its development. Generally speaking, the term Workflow is used to refer to the latest version of a Workflow Revision.

- Generic Information

-

Additional information attached to Workflows or Tasks. See generic information.

- Calendar Definition

-

A JSON object attached to a Workflow by adding it to the Workflow's Generic Information.

- Job

-

An instance of a Workflow submitted to the ProActive Scheduler. Sometimes also used as a synonym for Workflow.

- Job Id

-

An integer identifier which uniquely represents a Job inside the ProActive Scheduler.

- Job Icon

-

An icon representing the Job and displayed in portals. The Job Icon is defined by the Generic Information workflow.icon.

- Task

-

A unit of computation handled by ProActive Scheduler. Both Workflows and Jobs are made of Tasks. A Task must define a ProActive Task Executable and can also define additional task scripts.

- Task Id

-

An integer identifier which uniquely represents a Task inside a Job. Task ids are only unique inside a given Job.

- Task Executable

-

The main executable definition of a ProActive Task. A Task Executable can either be a Script Task, a Java Task or a Native Task.

- Script Task

-

A Task Executable defined as a script execution.

- Java Task

-

A Task Executable defined as a Java class execution.

- Native Task

-

A Task Executable defined as a native command execution.

- Additional Task Scripts

-

A collection of scripts, part of a ProActive Task definition, which can be used to complement the main Task Executable. Additional Task Scripts can be a Selection Script, Fork Environment Script, Pre Script, Post Script, Control Flow Script or Cleaning Script.

- Selection Script

-

A script part of a ProActive Task definition and used to select a specific ProActive Node to execute a ProActive Task.

- Fork Environment Script

-

A script part of a ProActive Task definition and run on the ProActive Node selected to execute the Task. Fork Environment script is used to configure the forked Java Virtual Machine process which executes the task.

- Pre Script

-

A script part of a ProActive Task definition and run inside the forked Java Virtual Machine, before the Task Executable.

- Post Script

-

A script part of a ProActive Task definition and run inside the forked Java Virtual Machine, after the Task Executable.

- Control Flow Script

-

A script part of a ProActive Task definition and run inside the forked Java Virtual Machine, after the Task Executable, to determine control flow actions.

- Control Flow Action

-

A dynamic workflow action performed after the execution of a ProActive Task. Possible control flow actions are Branch, Loop or Replicate.

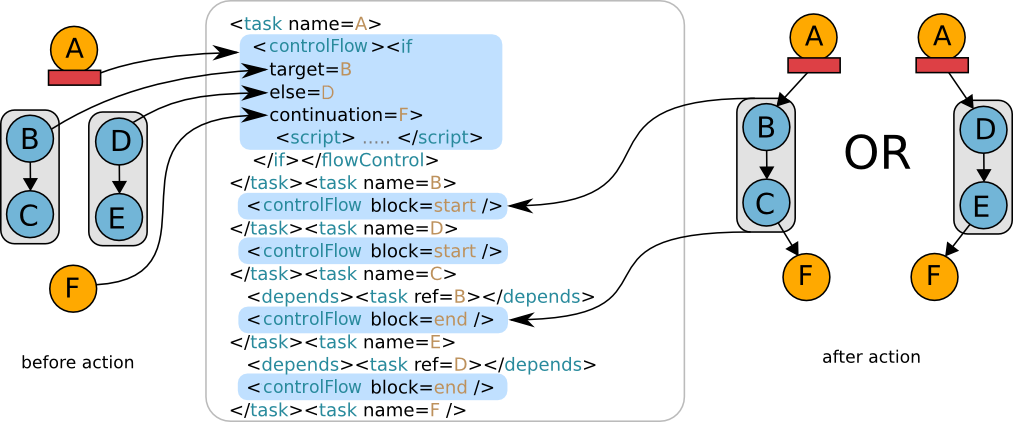

- Branch

-

A dynamic workflow action performed after the execution of a ProActive Task similar to an IF/THEN/ELSE structure.

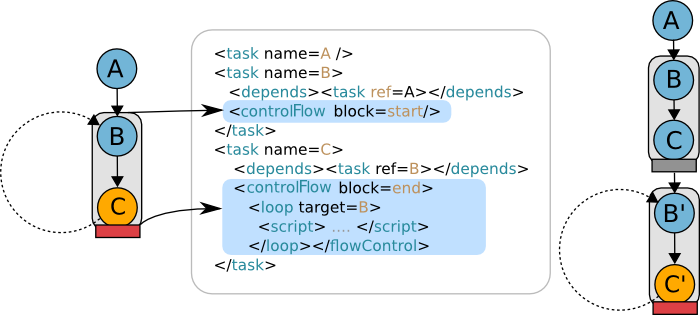

- Loop

-

A dynamic workflow action performed after the execution of a ProActive Task similar to a FOR structure.

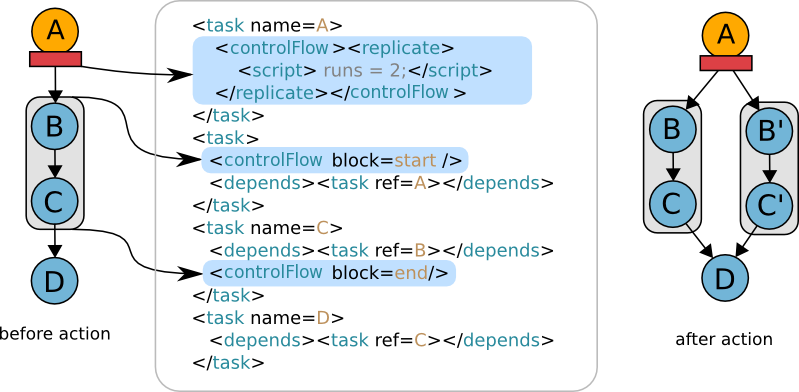

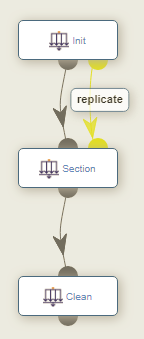

- Replicate

-

A dynamic workflow action performed after the execution of a ProActive Task similar to a PARALLEL FOR structure.

- Cleaning Script

-

A script part of a ProActive Task definition and run after the Task Executable and before releasing the ProActive Node to the Resource Manager.

- Script Bindings

-

Named objects which can be used inside a Script Task or inside Additional Task Scripts and which are automatically defined by the ProActive Scheduler. The type of each script binding depends on the script language used.

- Task Icon

-

An icon representing the Task and displayed in the Studio portal. The Task Icon is defined by the Task Generic Information task.icon.

- ProActive Agent

-

A daemon installed on a Compute Host that starts and stops ProActive Nodes according to a schedule, restarts ProActive Nodes in case of failure and enforces resource limits for the Tasks.

2. Get started

To submit your first computation to ProActive Scheduler, install it in your environment (default credentials: admin/admin) or just use our demo platform try.activeeon.com.

ProActive Scheduler provides comprehensive interfaces that allow you to:

-

Create workflows using ProActive Workflow Studio

-

Submit workflows, monitor their execution and retrieve the tasks results using ProActive Scheduler Portal

-

Add resources and monitor them using ProActive Resource Manager Portal

-

Version and share various objects using ProActive Catalog Portal

-

Provide an end-user workflow submission interface using Workflow Execution Portal

-

Generate metrics of multiple job executions using Job Analytics Portal

-

Plan workflow executions over time using Job Planner Portal

-

Add services using Service Automation Portal

-

Perform event based scheduling using Event Orchestration Portal

-

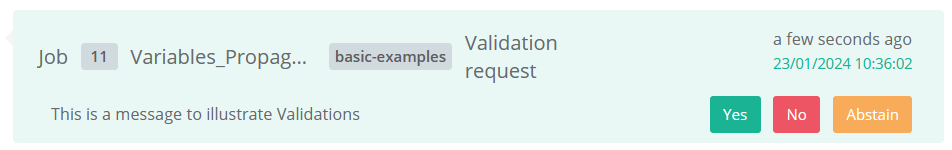

Control manual workflows validation steps using Notification Portal

We also provide a REST API. and command line interfaces for advanced users.

3. Create and run your computation

3.1. Jobs, workflows and tasks

To execute computations with the Scheduler, one needs to write the execution definition, also known as the Workflow definition. A workflow definition is an XML file that adheres to the XML schema for ProActive Workflows.

It uses a number of XML tags to specify execution steps, their sequence and their dependencies. Each execution step corresponds to a task, which is the smallest unit of execution that can be performed on a computation resource (ProActive Node). There are several types of tasks, which cater to different use cases.

3.1.1. Task Types

ProActive Scheduler currently supports three main types of tasks:

-

Native Task, a command to be executed, with optional parameters

-

Script Task, a script written in Groovy, Ruby, Python or another language supported by JSR-223

-

Java Task, a task written in Java extending the Scheduler API

For instance, a Script Task can be used to execute an inline script definition or a script file as an execution step whereas a Native Task can be used to execute a native executable file.

| We recommend to use script tasks that are more flexible rather than Java tasks. You can easily integrate with any Java code from a Groovy script task. |

3.1.2. Additional Scripts

In addition to the main definition of a Task, scripts can also be used to provide additional actions. The following actions are supported:

-

one or more selection scripts to control the Task resource (ProActive Node) selection.

-

a fork environment script to control the Task execution environment (a separate Java Virtual Machine or Docker container).

-

a pre script executed immediately before the main task definition (and inside the forked environment).

-

a post script executed immediately after the main task definition if and only if the task did not trigger any error (also run inside the forked environment).

-

a control flow script executed immediately after the post script (if present) or main task definition to control flow behavior such as branch, loop or replicate (also run inside the forked environment).

-

finally, a clean script executed after the task is finished, whether the task succeeded or not, and directly on the ProActive Node which hosted the task.
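The ordering described in this list can be illustrated with a small Python sketch. It is not ProActive code: `run_task` and its arguments are hypothetical names, each script is represented by a plain callable, and the selection and fork environment scripts are left out because they act before the task starts. The sketch also assumes that an error in any script skips the remaining scripts of the forked environment, which the text above only states for the post script.

```python
def run_task(main, pre=None, post=None, flow=None, clean=None):
    """Run the scripts of one task in the documented order.

    Every argument is a callable standing in for a script (hypothetical
    model, not a ProActive API). Returns the names of the scripts that
    were executed, plus "error" if one of them raised.
    """
    executed = []

    def call(name, script):
        if script is not None:
            executed.append(name)
            script()

    try:
        call("pre", pre)      # immediately before the main definition
        call("main", main)    # the task executable itself
        call("post", post)    # reached only if nothing failed so far
        call("flow", flow)    # assumption: also skipped after an error
    except Exception:
        executed.append("error")
    finally:
        call("clean", clean)  # always runs, success or failure
    return executed


def ok():
    pass


def failing():
    raise RuntimeError("task failed")


print(run_task(ok, pre=ok, post=ok, flow=ok, clean=ok))
print(run_task(failing, pre=ok, post=ok, clean=ok))
```

In the second call the post script is skipped because the main script raised, while the clean script still runs.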

3.1.3. Task Dependencies

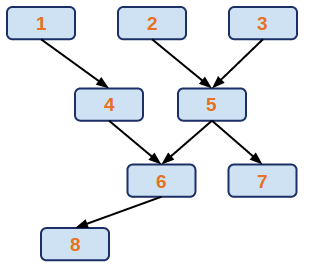

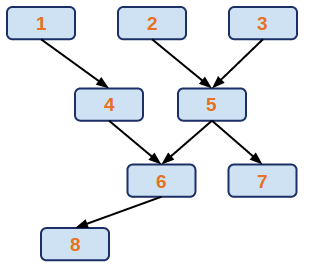

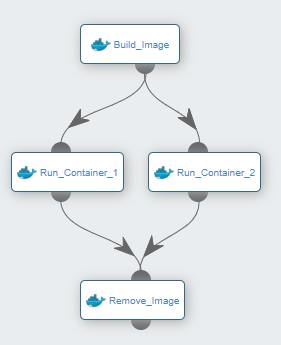

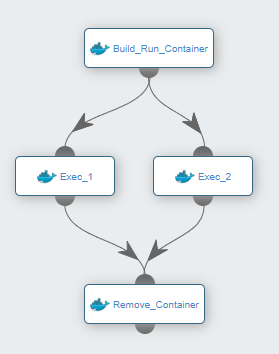

A workflow in ProActive Workflows & Scheduling can be seen as a directed graph of Tasks:

In this task graph, we see that task 4 is preceded by task 1. This means that the ProActive Scheduler will wait for the end of task 1's execution before launching task 4. More concretely, task 1 could calculate one part of the problem to solve, and task 4 could take the result provided by task 1 and compute another step of the calculation. This introduces the concept of passing data between tasks. This relation is called a Dependency, and we say that task 4 depends on task 1.

We see that tasks 1, 2 and 3 are not linked, so these three tasks can be executed in parallel because they are independent of each other.

The task graph is defined by the user at the time of workflow creation, but can also be modified dynamically during the job execution by control flow actions such as Replicate.

A finished job contains the results and logs of each task. Upon failure, a task can be restarted automatically or cancelled.
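As a rough illustration of how dependencies constrain execution order, the following Python sketch groups the tasks of a graph into successive "waves" of mutually independent tasks using the standard `graphlib` module. The graph itself is an assumption: only the dependency of task 4 on task 1 comes from the text, and the function name `execution_waves` is made up for this example.

```python
from graphlib import TopologicalSorter


def execution_waves(dependencies):
    """Group tasks into waves of tasks that do not depend on each other.

    `dependencies` maps a task name to the set of tasks it depends on.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every dependency is satisfied
        waves.append(ready)
        sorter.done(*ready)                 # mark the whole wave as finished
    return waves


# Hypothetical graph: tasks 1, 2 and 3 are independent, task 4 depends on task 1.
graph = {"task1": set(), "task2": set(), "task3": set(), "task4": {"task1"}}
print(execution_waves(graph))  # [['task1', 'task2', 'task3'], ['task4']]
```

The wave model is a simplification: a real scheduler can launch task 4 as soon as task 1 ends, without waiting for tasks 2 and 3.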

3.2. Native Tasks

A Native Task is a ProActive Workflow task whose main execution is defined as a command to run.

Native tasks are the simplest type of Task, where a user provides a command with a list of parameters.

Using native tasks, one can easily reuse existing applications and embed them in a workflow.

Once the executable is wrapped as a task you can easily leverage some of the workflow constructs to run your executable commands in parallel.

You can find an example of such integration in this XML workflow or you can also build one yourself using the Workflow Studio.

Native applications are by nature often tied to a given operating system, so if you have heterogeneous nodes at your disposal, you might need to select a suitable node to run your native task. This can be achieved using a selection script.

| Native Tasks are not the only possibility available to run executables or commands, this can also be achieved using shell language script tasks. |

3.3. Script Tasks

A Script Task is a ProActive Workflow task whose main execution is defined as a script.

ProActive Workflows supports tasks in many scripting languages. The currently supported dynamic languages or backends are Groovy, Jython, Python, JRuby, Javascript, Scala, Powershell, VBScript, R, Perl, PHP, Bash, Any Unix Shell, Windows CMD, Dockerfile, Docker Compose and Kubernetes.

Dockerfile, Docker Compose and Kubernetes are not really scripting languages but rather description languages. ProActive tasks can interpret Dockerfiles, Docker Compose files or Kubernetes yaml files to start containers or pods.

As seen in the list above, Script Tasks can be used to run native operating system commands by providing a script written in bash, ksh, windows cmd, Powershell, etc…

Simply set the language attribute to bash, shell, or cmd and type your command(s) in the workflow.

In this sense, it can replace in most cases a Native Task.

| You can easily embed small scripts in your workflow. The nice thing is that the workflow will be self-contained and you will not need to compile your code before executing it. However, we recommend that you keep these scripts small to keep the workflow easy to understand. |

You can find an example of a script task in this XML workflow or you can also build one yourself using the Workflow Studio. The quickstart tutorial relies on script tasks and is a nice introduction to writing workflows.

Scripts can also be used to decorate any kind of task (Script, Java or Native) with specific actions, as described in the additional scripts section.

It is thus possible to combine various scripting languages in a single Task. For example a pre script could be written in bash to transfer some files from a remote file system, while the main script will be written in python to process those files.

Finally, a Script Task may or may not return a result materialized by the result script binding.

3.3.1. Script Bindings

A Script Task or additional scripts can use automatically-defined script bindings. These bindings connect the script to its workflow context, for example by exposing the current workflow variables as submitted by a user, the id of the job as run inside the ProActive Scheduler, etc.

Bindings also make it possible to pass information between various scripts inside the same task or across different tasks.

A script binding can be either:

-

Defined automatically by the ProActive Scheduler before the script starts its execution. In this case, we call it also a provided or input script binding.

-

Needed to be defined by the script during its execution to provide meaningful results. In this case, we call it also a result or output script binding.

Below is an example of Script Task definition which uses both types of bindings:

```
jobId = variables.get("PA_JOB_ID")
result = "The Id of the current job is: " + jobId
```

As we can see, the variables binding is provided automatically by the ProActive Scheduler before the script execution, and it is used to compute a result binding as the task output.

Bindings are stored internally inside the ProActive Scheduler as Java Objects. Accordingly, a conversion may be performed when targeting (or when reading from) other languages.

For example, Generic Information or Workflow Variables are stored as Java objects implementing the Map interface.

When creating the variables or genericInformation bindings prior to executing a Groovy, JRuby, Jython or Python script, the ProActive Scheduler will convert it to various types accepted by the target language.

In those languages, printing the type of the genericInformation binding will show:

```
Groovy: class com.google.common.collect.RegularImmutableBiMap
JRuby: Java::ComGoogleCommonCollect::RegularImmutableBiMap
Jython: <type 'com.google.common.collect.RegularImmutableBiMap'>
Python: <class 'py4j.java_collections.JavaMap'>
```

We see here that Groovy, JRuby and Jython did not perform any conversion, whereas Python (CPython) did. This behavior is expected: a CPython script runs as a standalone python process, so a custom type conversion occurs, while the other three run directly inside the task Java Virtual Machine with full Java type compatibility.

Depending on the script type (task script, selection script, etc…), the script may need to define an output binding to return some information to the scheduler.

Below are some examples of output bindings for various kind of scripts:

-

result and resultMetadata for a Script Task main execution script.

-

selected for a selection script.

-

loop for a Loop construct flow script.

-

runs for a Replicate construct flow script.

The complete list of script bindings (both input and output) is available in the Script Bindings Reference section.
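To make the distinction between input and output bindings more concrete, here is a minimal Python sketch that plays the role of the scheduler on a local machine. It is only a stand-in under stated assumptions: `run_script` is a hypothetical helper, the `variables` binding is modelled as a plain dict, and `TARGET_OS` is an invented workflow variable. Only the binding names `variables`, `result` and `selected` come from this section.

```python
def run_script(source, input_bindings, output_names):
    """Execute `source` with input bindings defined, then collect outputs.

    Local stand-in for the scheduler (not ProActive code): inputs exist
    before the script starts, outputs are read after it ends.
    """
    namespace = dict(input_bindings)  # provided (input) bindings
    exec(source, namespace)           # the script may define new names
    return {name: namespace.get(name) for name in output_names}


task_script = '''
jobId = variables.get("PA_JOB_ID")
result = "The Id of the current job is: " + jobId
'''

# Hypothetical selection script: accept the node only for a given OS.
selection_script = 'selected = variables.get("TARGET_OS") == "linux"'

# Assumed values; the job id is kept as a string so that "+" works.
bindings = {"variables": {"PA_JOB_ID": "42", "TARGET_OS": "linux"}}

print(run_script(task_script, bindings, ["result"]))
print(run_script(selection_script, bindings, ["selected"]))
```

An output binding that the script never defines comes back as `None` in this sketch. How the real scheduler treats a missing output binding is not covered here.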

Below are descriptions of some specific scripting language support, which can be used in Script Tasks main execution script or in any Task additional scripts.

3.4. Java Tasks

A workflow can execute Java classes thanks to Java Tasks. In terms of XML definition, a Java Task consists of a fully-qualified class name along with parameters:

```xml
<task name="Java_Task">
  <javaExecutable class="org.ow2.proactive.scheduler.examples.WaitAndPrint">
    <parameters>
      <parameter name="sleepTime" value="20"/>
      <parameter name="number" value="2"/>
    </parameters>
  </javaExecutable>
</task>
```

The provided class must extend the JavaExecutable abstract class and implement the execute method.

Each parameter must be defined as a public attribute. For example, the above WaitAndPrint class contains the following attribute definitions:

```java
/** Sleeping time before displaying. */
public int sleepTime;

/** Parameter number. */
public int number;
```

A parameter conversion is performed automatically by the JavaExecutable super class. If this automatic conversion is not suitable, it is possible to override the init method.

Finally, several utility methods are provided by JavaExecutable and should be used inside execute. A good example is getOut which allows writing some textual output to the workflow task or getLocalSpace which allows access to the task execution directory.

The complete code for the WaitAndPrint class is available below:

```java
package org.ow2.proactive.scheduler.examples;

import java.io.Serializable;

import org.ow2.proactive.scheduler.common.task.TaskResult;
import org.ow2.proactive.scheduler.common.task.executable.JavaExecutable;

public class WaitAndPrint extends JavaExecutable {

    /** Sleeping time before displaying. */
    public int sleepTime;

    /** Parameter number. */
    public int number;

    /**
     * @see JavaExecutable#execute(TaskResult[])
     */
    @Override
    public Serializable execute(TaskResult... results) throws Throwable {
        String message = null;

        try {
            getErr().println("Task " + number + " : Test STDERR");
            getOut().println("Task " + number + " : Test STDOUT");

            message = "Task " + number;
            int st = 0;
            // Sleep one second at a time and report the progress as a percentage.
            while (st < sleepTime) {
                Thread.sleep(1000);
                try {
                    setProgress((st++) * 100 / sleepTime);
                } catch (IllegalArgumentException iae) {
                    setProgress(100);
                }
            }
        } catch (Exception e) {
            message = "crashed";
            e.printStackTrace(getErr());
        }

        getOut().println("Terminate task number " + number);

        return ("No." + this.number + " hi from " + message + "\t slept for " + sleepTime + " Seconds");
    }
}
```

3.5. Run a workflow

To run a workflow, the user submits it to the ProActive Scheduler. It will be possible to choose the values of all Job Variables when submitting the workflow (see section Job Variables). A verification is performed to ensure the well-formedness of the workflow. A verification will also be performed to ensure that all variables are valid according to their model definition (see section Variable Model). Next, a job is created and inserted into the pending queue and waits until free resources become available. Once the required resources are provided by the ProActive Resource Manager, the job is started. Finally, once the job is finished, it goes to the queue of finished jobs where its result can be retrieved.

You can submit a workflow to the Scheduler using the Workflow Studio, the Scheduler Web Interface or command line tools. For advanced users we also expose REST and Java APIs.

| During the submission, you will be able to edit Workflow variables, so you can effectively use them to parameterize workflow execution and use workflows as templates. |

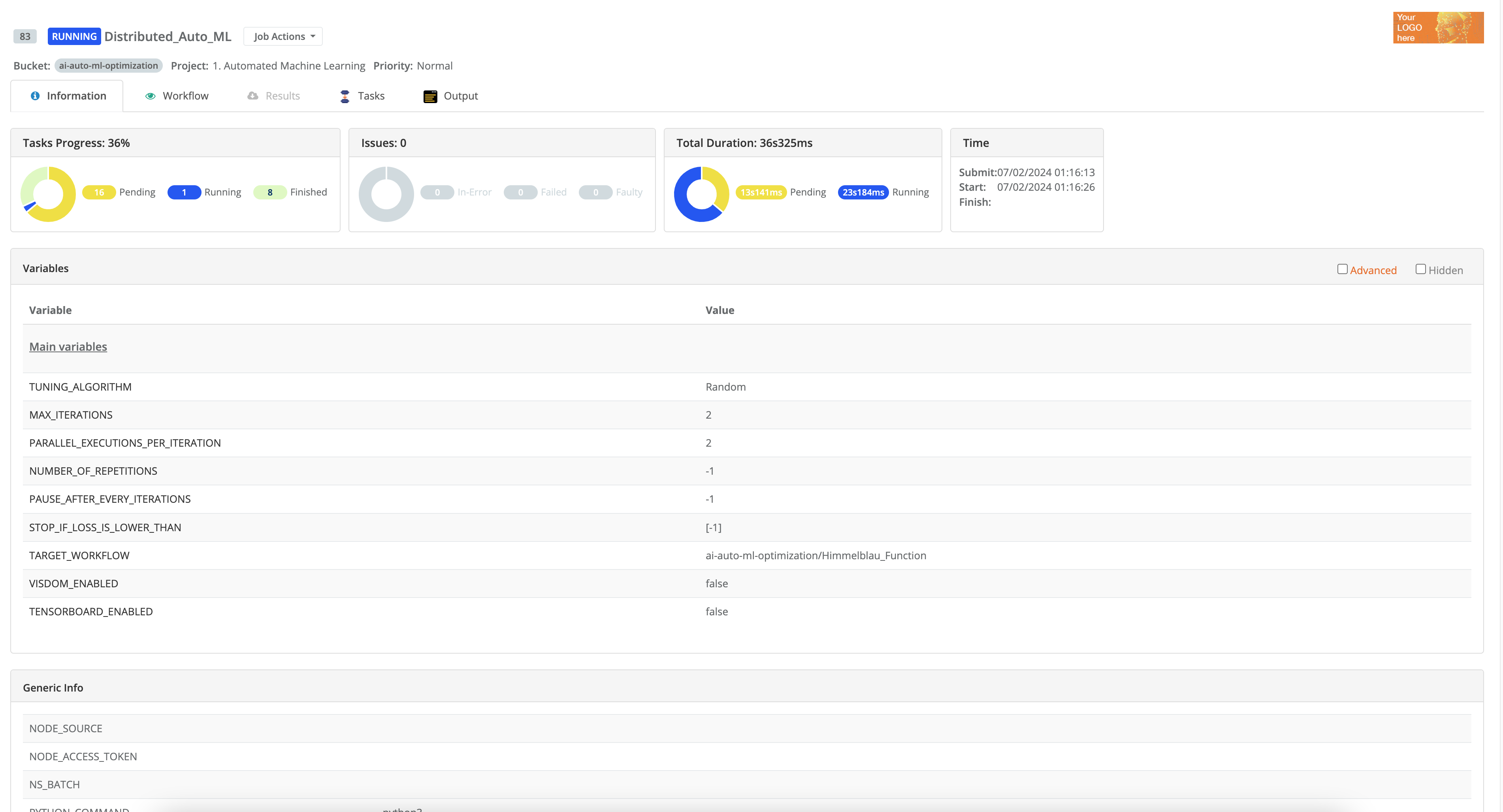

3.5.1. Job & Task status

During their execution, jobs go through different status.

| Status | Name | Description |

|---|---|---|

|

Pending |

The job is waiting to be scheduled. None of its tasks have been Running so far. |

|

Running |

The job is running. At least one of its task has been scheduled. |

|

Stalled |

The job has been launched but no task is currently running. |

|

Paused |

The job is paused waiting for user to resume it. |

|

In-Error |

The job has one or more In-Error tasks that are suspended along with their dependencies. User intervention is required to fix the causing issues and restart the In-Error tasks to resume the job. |

| Name | Description |
|---|---|
| Finished | The job is finished. Tasks are finished or faulty. |
| Cancelled | The job has been canceled because of an exception. This status is used when an exception is thrown by the user code of a task and when the user has asked to cancel the job on exception. |
| Failed | The job has failed. One or more tasks have failed (due to resources failure). There is no more executionOnFailure left for a task. |
| Killed | The job has been killed by the user. |
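The two tables above can be read as a split between statuses of jobs that are still in progress and statuses of jobs that have ended. The following Python sketch only illustrates that reading. The status names are copied from the tables, while the grouping and the helper names (`is_ended`, `needs_user_action`) are assumptions made for this example and are not part of any ProActive API.

```python
# Illustrative only: status names come from the tables above; the two
# groups and the helper functions are assumptions, not ProActive API.
IN_PROGRESS = {"Pending", "Running", "Stalled", "Paused", "In-Error"}
ENDED = {"Finished", "Cancelled", "Failed", "Killed"}


def is_ended(status: str) -> bool:
    """Return True when the job will not run any further."""
    if status not in IN_PROGRESS | ENDED:
        raise ValueError(f"unknown job status: {status}")
    return status in ENDED


def needs_user_action(status: str) -> bool:
    """Paused and In-Error jobs wait for the user to resume or restart them."""
    return status in {"Paused", "In-Error"}
```

A monitoring script could, for instance, poll a job until `is_ended` returns True and raise an alert whenever `needs_user_action` becomes True.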

Similarly to jobs, tasks go through different statuses during their execution.

| Name | Description |
|---|---|
| Aborted | The task was aborted because of an exception in another task while it was running (the job has cancelOnError=true). A task can also be in this status if the job was killed while the task was running. |
| Resource down | The task has failed (only if the maximum number of executions has been reached and the node on which it was started is down). |
| Faulty | The task has finished execution with an error code (!=0) or an exception. |
| Finished | The task has finished execution. |
| In-Error | The task is suspended after its first error, if the user has asked to suspend it. The task is waiting for a manual restart action. |
| Could not restart | The task could not be restarted after an error during the previous execution. |
| Could not start | The task could not be started due to a dependency failure. |
| Paused | The task is paused. |
| Pending | The task is in the scheduler pending queue. |
| Running | The task is executing. |
| Skipped | The task was not executed: it was the non-selected branch of an IF/ELSE control flow action. |
| Submitted | The task has just been submitted by the user. |
| Faulty… | The task is waiting for restart after an error (i.e. native code != 0 or exception, and the maximum number of executions is not reached). |
| Failed… | The task is waiting for restart after a failure (i.e. node down). |

3.5.2. Job Priority

A job is assigned a default priority of NORMAL, but the user can increase or decrease the priority once the job has been submitted. When they are scheduled, jobs with the highest priority are executed first.

The following values are available:

- IDLE
- LOWEST
- LOW
- NORMAL
- HIGH (can only be set by an administrator)
- HIGHEST (can only be set by an administrator)
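The priority rules above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the Scheduler's implementation: the numeric values, the `can_set` and `execution_order` helpers, and the tie-break by submission order are all hypothetical. Only the priority names, the administrator restriction and "highest priority first" come from the text.

```python
from enum import IntEnum


class JobPriority(IntEnum):
    # Numeric values are an assumption; only the names come from the guide.
    IDLE = 0
    LOWEST = 1
    LOW = 2
    NORMAL = 3
    HIGH = 4      # can only be set by an administrator
    HIGHEST = 5   # can only be set by an administrator


ADMIN_ONLY = {JobPriority.HIGH, JobPriority.HIGHEST}


def can_set(priority: JobPriority, is_admin: bool) -> bool:
    """Check whether a user is allowed to assign the given priority."""
    return is_admin or priority not in ADMIN_ONLY


def execution_order(pending):
    """Order (job_id, priority) pairs with the highest priority first.

    sorted() is stable, so jobs with equal priority keep their submission
    order; that tie-break is an assumption of this sketch.
    """
    return [job_id for job_id, _ in sorted(pending, key=lambda job: -job[1])]
```

For example, `execution_order([(1, JobPriority.NORMAL), (2, JobPriority.HIGH)])` places job 2 before job 1.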

3.6. Retrieve logs

Several kinds of logs can be retrieved for a job:

- The standard output/error logs associated with a job.
- The standard output/error logs associated with a task.
- The scheduler server logs associated with a job.
- The scheduler server logs associated with a task.

Unless your account belongs to an administrator group, you can only see the logs of a job that you own.

3.6.1. Retrieve logs from the portal

- Job standard output/error logs:

  To view the standard output or error logs associated with a job, select a job from the job list and then open the Output tab in the bottom-right panel.

  Click on the Streaming Output checkbox to auto-fetch logs for the running tasks of the entire Job. The logs panel is updated as soon as new log lines are printed by this job.

  You cannot select a specific Task in streaming mode. If you activate streaming while some Tasks are already finished, you will get the logs of those Tasks as well.

  Click on the Finished Tasks Output button to retrieve the logs of already finished tasks, either for all the finished Tasks within the Job or for the selected Task.

  This works while the Job is still in Running status as well as when the Job is Finished.

  Logs are limited to 1024 lines. Should your job logs be longer, you can select the Full logs (download) option from the drop-down list.

- Task standard output/error logs:

  To view the standard output or error logs associated with a task, select a job from the job list and a task from the task list.

  Then in the Output tab, choose Selected task from the drop-down list.

  Once the task is terminated, you will be able to click on the Finished Tasks Output button to see the standard output or error associated with the task.

  It is not possible to view the streaming logs of a single task; only the job streaming logs are available.

- Job server logs:

  Whether a job is running or finished, you can access the associated server logs by selecting a job, opening the Server Logs tab in the bottom-right panel and then clicking on Fetch logs.

  Server logs contain debugging information, such as the job definition, output of selection or cleaning scripts, etc.

- Task server logs:

  In the Server Logs tab, you can choose Selected task to view the server logs associated with a single task.

3.6.2. Retrieve logs from the command line

The chapter command line tools explains how to use the command line interface. Once connected, you can retrieve the various logs using the following commands. Server logs cannot be accessed from the command line.

- Job standard output/error logs: joboutput(jobid)

> joboutput(1955)

[1955t0@precision;14:10:57] [2016-10-27 14:10:057 precision] HelloWorld

[1955t1@precision;14:10:56] [2016-10-27 14:10:056 precision] HelloWorld

[1955t2@precision;14:10:56] [2016-10-27 14:10:056 precision] HelloWorld

[1955t3@precision;14:11:06] [2016-10-27 14:11:006 precision] HelloWorld

[1955t4@precision;14:11:05] [2016-10-27 14:11:005 precision] HelloWorld

- Task standard output/error logs: taskoutput(jobid, taskname)

> taskoutput(1955,'0_0')

[1955t0@precision;14:10:57] [2016-10-27 14:10:057 precision] HelloWorld

- Streaming job standard output/error logs: livelog(jobid)

> livelog(2002)

Displaying live log for job 2002. Press 'q' to stop.

> Displaying live log for job 2002. Press 'q' to stop.

[2002t2@precision;15:57:13] [ABC, DEF]

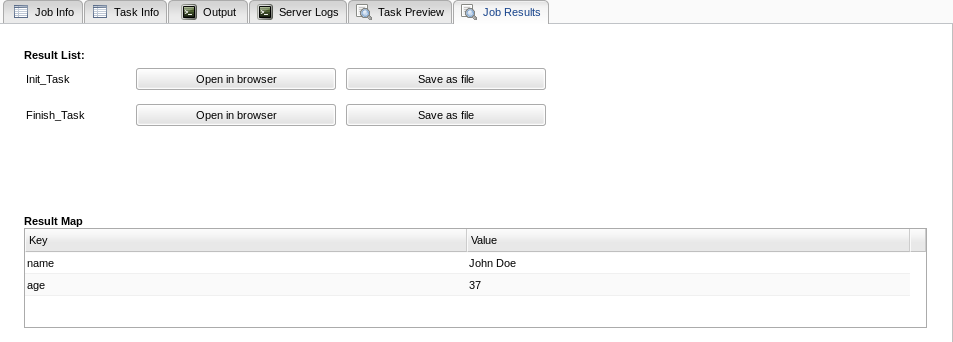

3.7. Retrieve results

Once a job or a task is terminated, it is possible to get its result. Unless you belong to the administrator group, you can only get the results of jobs that you own. Results can be retrieved using the Scheduler Web Interface or the command line tools.

| When running native application, the task result will be the exit code of the application. Results usually make more sense when using script or Java tasks. |

3.7.1. Retrieve results from the portal

In the scheduler portal, select a job, then select a task from the job’s task list. Click on the Preview tab in the bottom-right panel.

In this tab, you will see two buttons:

- Open in browser: clicking this button displays the result in a new browser tab. By default, the result is displayed in text format. If your result contains binary data, you can specify a different display mode using Result Metadata.

  If the task failed, clicking the Open in browser button displays the task error.

- Save as file: clicking this button saves the result on disk in binary format. By default, the file name is generated automatically from the job and task ids, without an extension. You can customize this behavior and specify a file name or a file extension in the task using Result Metadata.

The following example reads a PNG image and adds metadata so that the browser can display it and so that the downloaded file gets a name.

file = new File(fileName)

result = file.getBytes()

resultMetadata.put("file.name", fileName)

resultMetadata.put("content.type", "image/png")

3.7.2. Retrieve results from the command line

The chapter command line tools explains how to use the command line interface. Once connected, you can retrieve the task or job results:

- Result of a single task: taskresult(jobid, taskname)

> taskresult(2002, 'Merge')

task('Merge') result: true

- Result of all tasks of a job: jobresult(jobid)

> jobresult(2002)

job('2002') result:

Merge : true

Process*1 : DEF

Process : ABC

Split : {0=abc, 1=def}

4. ProActive Studio

ProActive Workflow Studio is used to create and submit workflows graphically. The Studio lets you drag and drop various task constructs and draw their dependencies to form complex workflows. It also provides various flow control widgets, such as conditional branch, loop and replicate, to construct workflows with dynamic structures.

The studio usage is illustrated in the following example.

4.1. A simple example

The quickstart tutorial on try.activeeon.com shows how to build a simple workflow using script tasks.

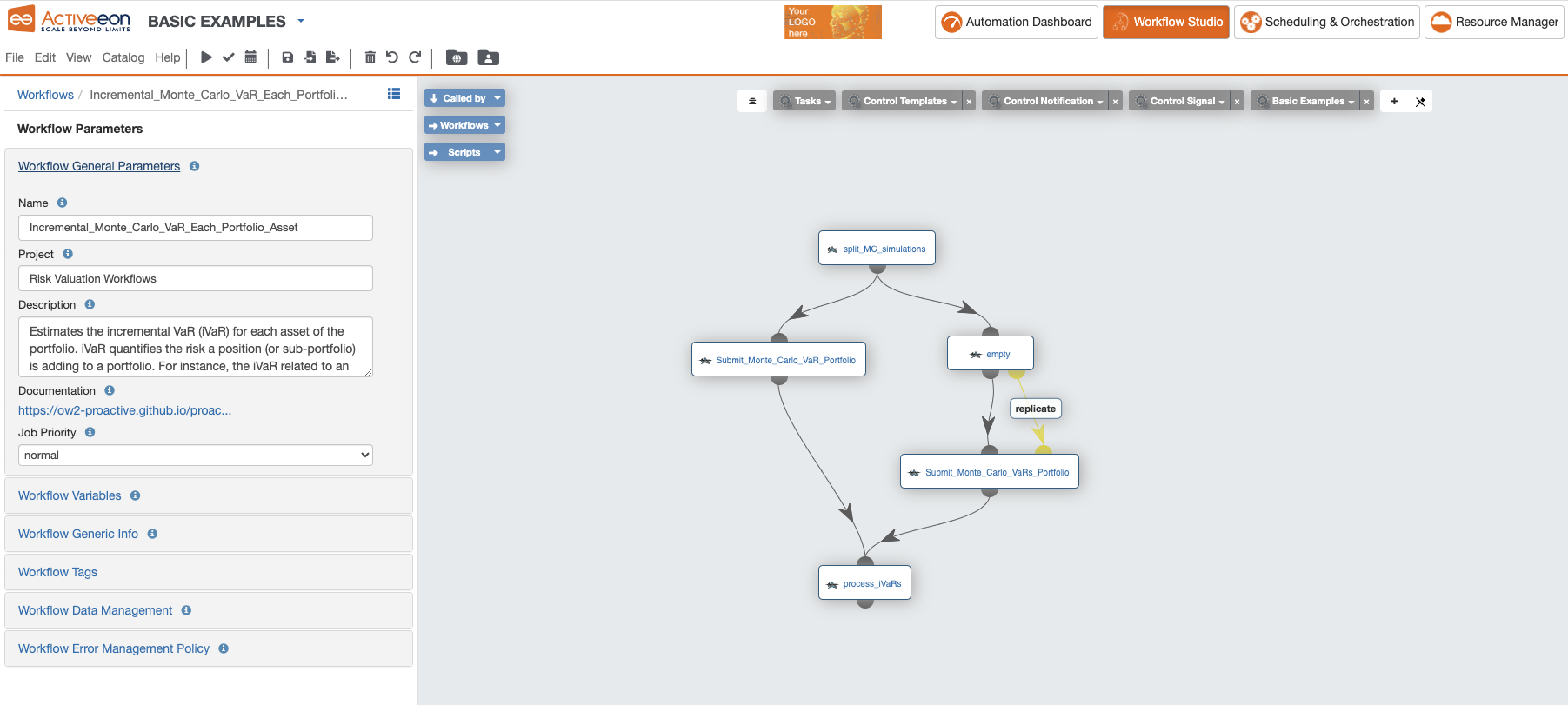

Below is an example of a workflow created with the Studio:

The left part shows the General Parameters of the workflow, with the following information:

- Name: the name of the workflow.
- Project: the name of the project to which the workflow belongs.
- Description: the textual description of the workflow.
- Documentation: if the workflow has a Generic Information named "Documentation", then its URL value is displayed as a link.
- Job Priority: the priority assigned to the workflow. It is set to NORMAL by default, but can be increased or decreased once the job is submitted.

In the right part, we see the graph of dependent tasks composing the workflow. Each task can be selected to show its task-specific attributes and definitions.

Finally, above the workflow graph, we see the various palettes which can be used to drag & drop sample task definitions or complex constructs.

The following chapters describe the three main types of tasks which can be defined inside ProActive Workflows.

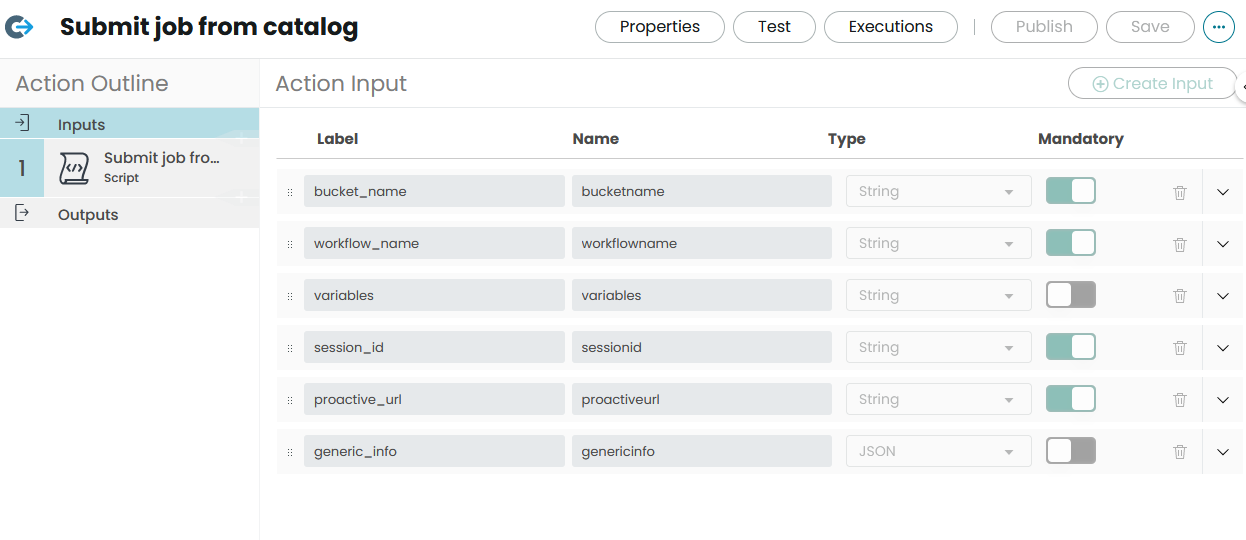

4.2. Use the ProActive Catalog from the Studio

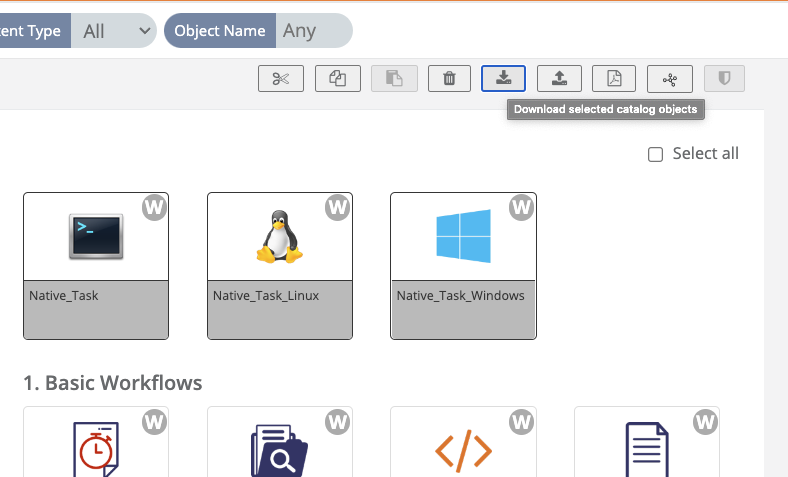

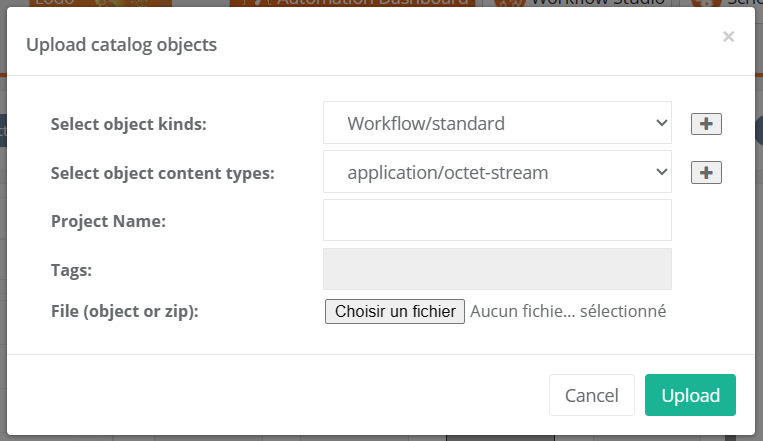

The GUI interaction with the Catalog can be done in two places: the Studio and Catalog Portal. The portals follow the concepts of the Catalog: Workflows are stored inside buckets, a workflow has some metadata and can have several revisions.

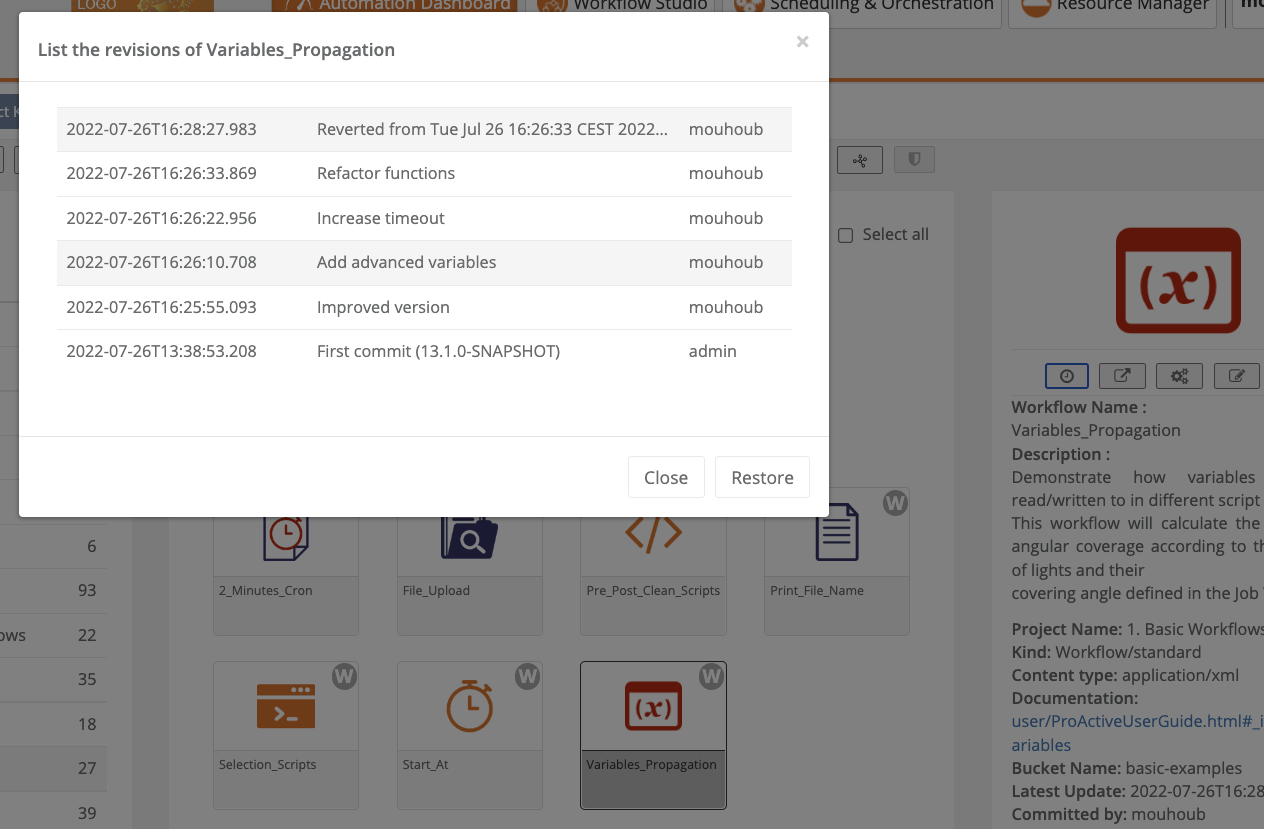

The Catalog view in the Studio or in the Catalog Portal lets you browse all the workflows in the Catalog, grouped by buckets, and list all the revisions of a workflow along with their commit messages.

Inside the Studio, there is a Catalog menu from which a user can directly interact with the Catalog to import or publish Workflows.

Additionally, the Palette of the Studio lists the user’s favourite buckets to allow easy import of workflows from the Catalog with a simple Drag & Drop.

4.2.1. Publish current Workflow to the Catalog

Workflows created in the studio can be saved inside a bucket in the Catalog by using the Publish current Workflow to the Catalog action.

When saving a Workflow in the Catalog, users can add a specific commit message.

If a Workflow with the same name already exists in the specified bucket, a new revision of the Workflow is created.

We recommend always specifying a commit message, which makes it easier to differentiate between stored versions.

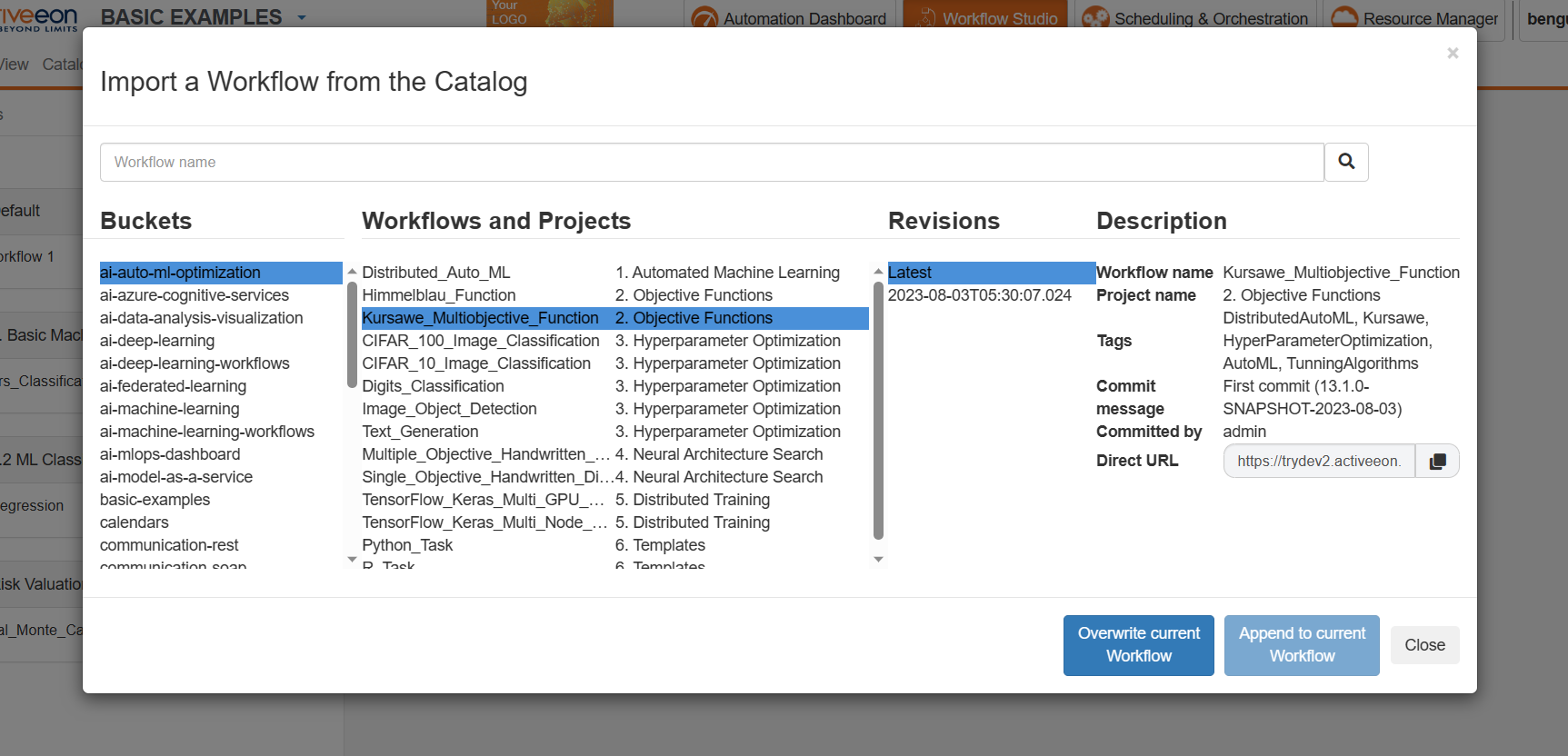

4.2.2. Get a Workflow from the Catalog

When a Workflow is selected from the Catalog, you have two options:

- Open as a new Workflow: open the Workflow from the Catalog as a new Workflow in the Studio.
- Append to current Workflow: append the selected Workflow from the Catalog to the Workflow already opened inside the Studio.

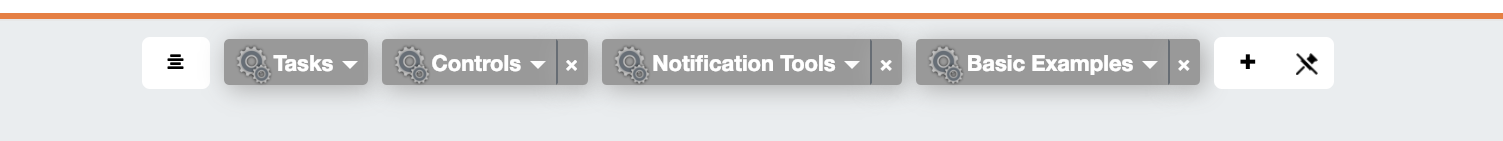

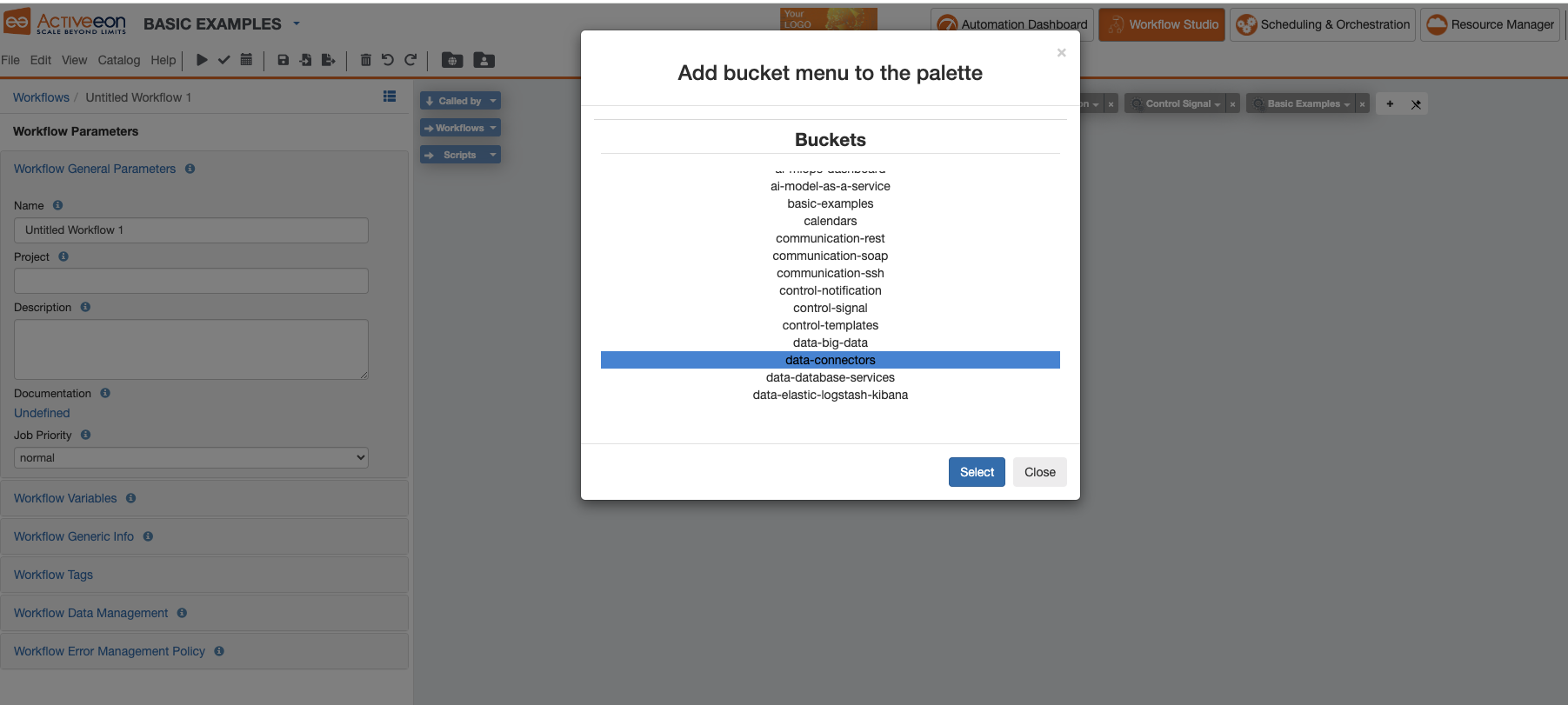

4.2.3. Add Bucket Menu to the Palette

The Palette makes it easier to use workflows stored in a specific Bucket of the Catalog as templates when designing a Workflow.

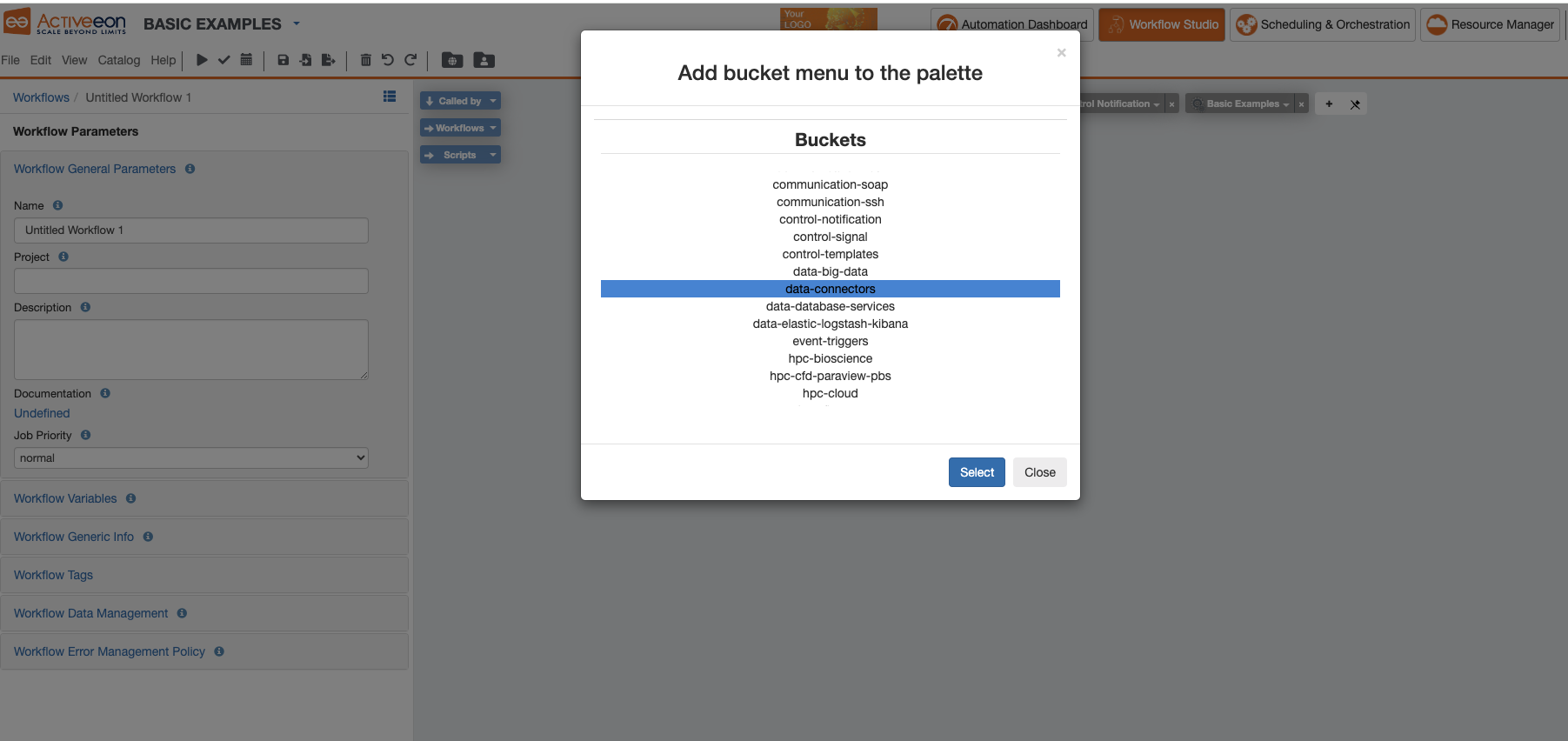

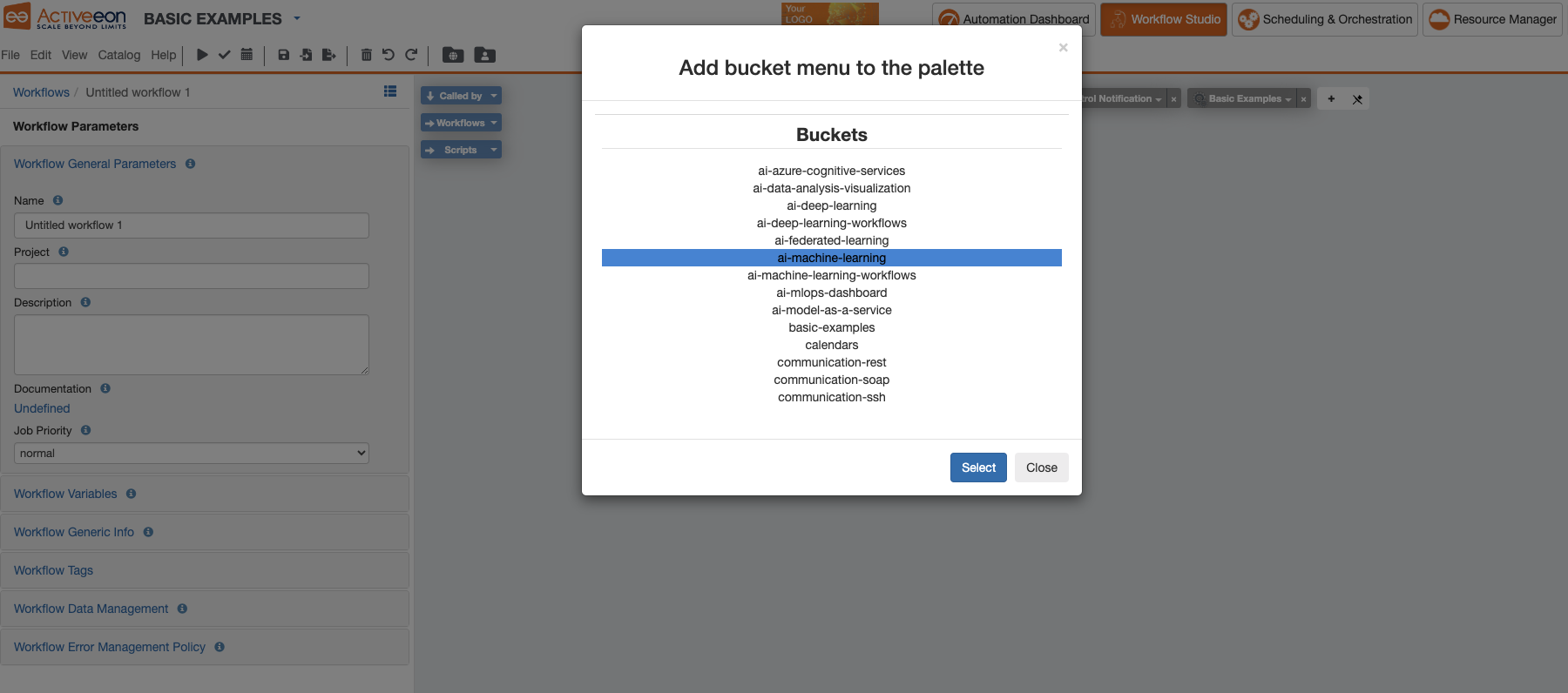

To add a bucket from the Catalog as a dropdown menu in the Palette, click on View → Add Bucket Menu to the Palette, or press the corresponding button in the Palette.

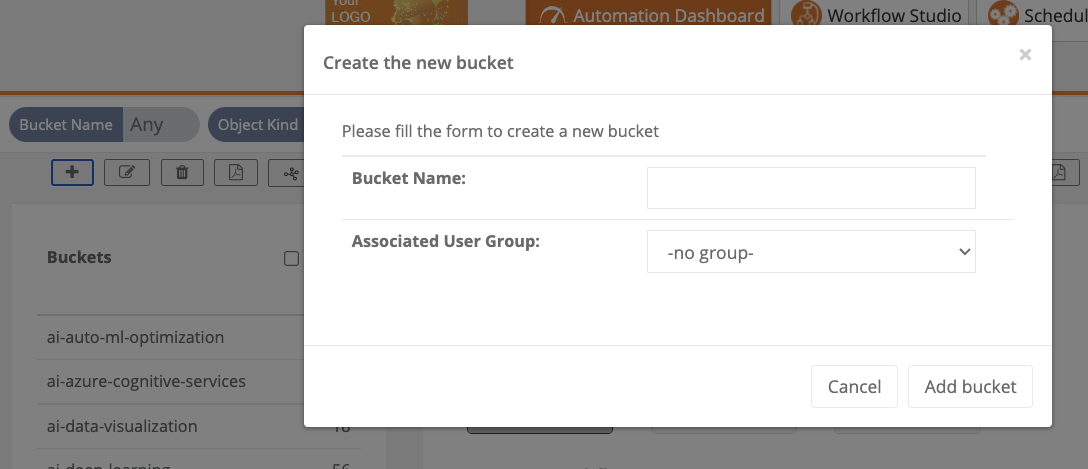

A window will show up to ask you which bucket you want to add to the Palette as in the image below.


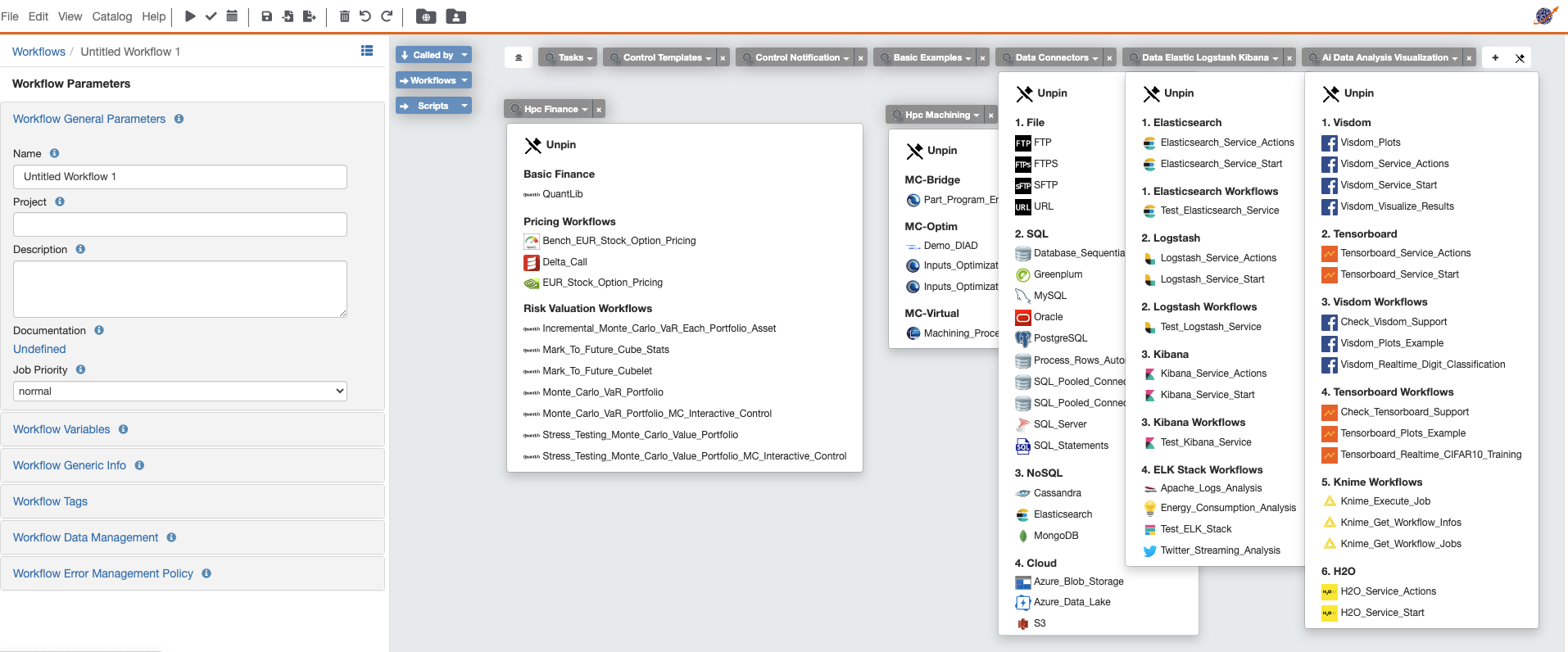

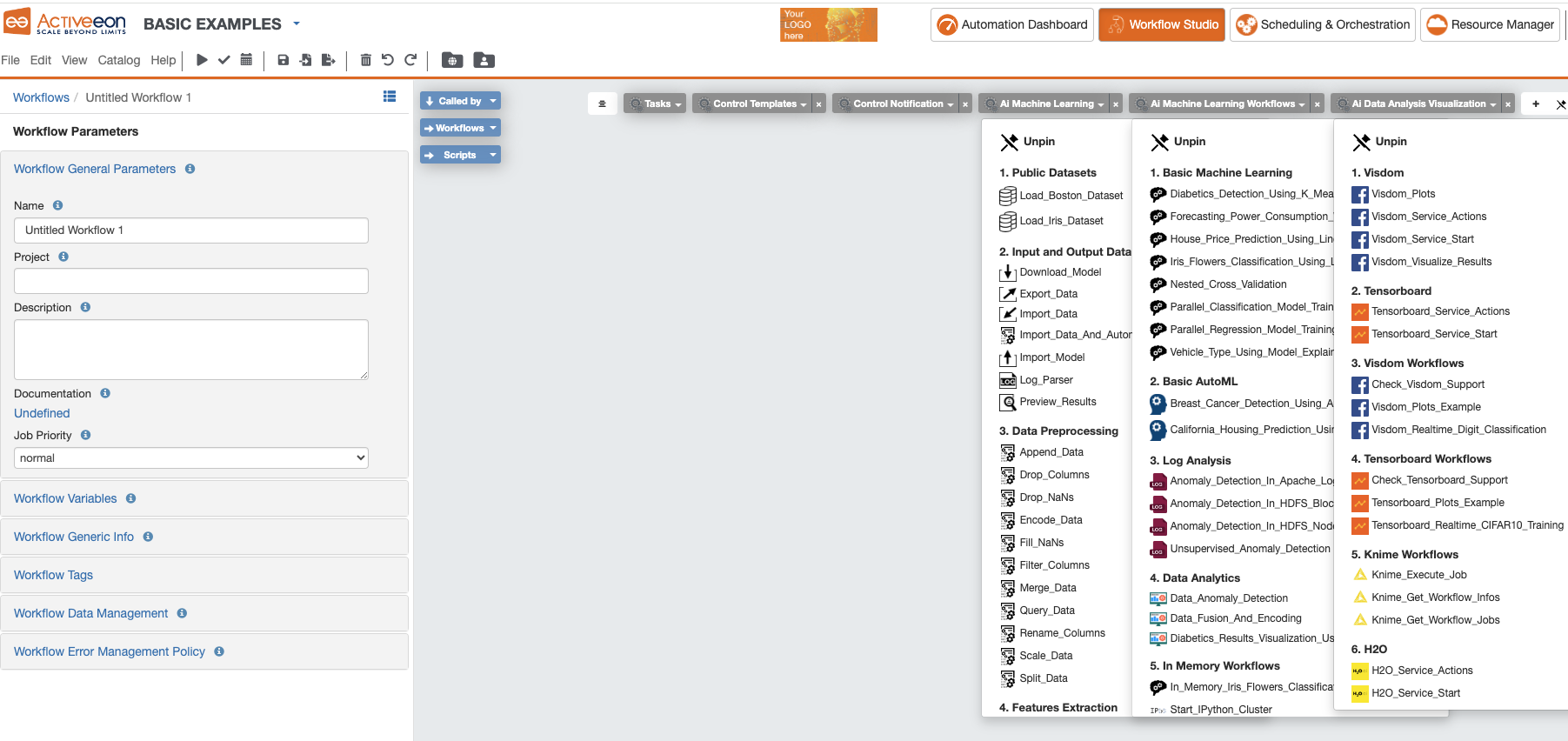

The selected Data Connectors Bucket now appears as a menu in the Studio. See the image below.

You can repeat the operation as many times as you wish in order to add other specific Menus you might need for your workflow design. See the image below.

4.2.4. Change Palette Preset

The Studio offers the possibility to set a Preset for the Palette. This makes it easier to load the Palette with a predefined set of default buckets.

Presets are generally arranged by theme (Basic Examples, Machine Learning, Deep Learning, Big Data, etc.)

The Palette’s preset can be changed by clicking View → Change Palette Preset. This will show a window with the current list of presets as in the image below.

After changing the preset, its name appears at the top of the Studio and all its buckets are added to the Palette.

5. Workflow concepts

Workflows come with constructs that help you distribute your computation. The tutorial Advanced workflows is a nice introduction to workflows with ProActive.

The following constructs are available:

- Dependency
- Replicate
- Branch
- Loop

| Use the Workflow Studio to create complex workflows, it is much easier than writing XML! |

5.1. Dependency

Dependencies can be set between tasks in a TaskFlow job. They provide a way to execute your tasks in a specified order, and also to forward the result of an ancestor task to its children as a parameter. A dependency between tasks is therefore both a temporal dependency and a data dependency.

Dependencies between tasks can be added either in ProActive Workflow Studio or simply in workflow XML as shown below:

<taskFlow>

<task name="task1">

<scriptExecutable>

<script>

<code language="groovy">

println "Executed first"

</code>

</script>

</scriptExecutable>

</task>

<task name="task2">

<depends>

<task ref="task1"/>

</depends>

<scriptExecutable>

<script>

<code language="groovy">

println "Now it's my turn"

</code>

</script>

</scriptExecutable>

</task>

</taskFlow>

5.2. Replicate

The replication allows the execution of multiple tasks in parallel when only one task is defined and the number of tasks to run could change.

- The target is the direct child of the task initiator.
- The initiator can have multiple children; each child is replicated.
- If the target is a start block, the whole block is replicated.
- The target must have the initiator as its only dependency: the action is performed when the initiator task terminates. If the target has another pending task as a dependency, the behavior cannot be specified.
- There should always be a merge task after the target of a replicate: if the target is not a start block, it should have at least one child; if the target is a start block, the corresponding end block should have at least one child.
- The last task of a replicated task block (or the replicated task if there is no block) cannot perform a branching or replicate action.
- The target of a replicate action cannot be tagged as end block.
- The current replication index (from 0 to runs) can be accessed via the PA_TASK_REPLICATION variable.

| If you are familiar with programming, you can see the replication as forking tasks. |
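The fork-and-merge behavior described above can be modeled with a short Python sketch. It is not ProActive code: `run_replicate` is a hypothetical helper, the replicas run sequentially here rather than in parallel, and the sketch assumes replicas are indexed from 0 to runs - 1.

```python
# Illustrative model of a replicate action; not ProActive code.
def run_replicate(runs, target, merge):
    """Run `target` once per replication index, then merge the results.

    In a real workflow the replicas run in parallel and read their index
    from PA_TASK_REPLICATION; here they run one after the other, and the
    indices 0..runs-1 are an assumption of this sketch.
    """
    results = [target(index) for index in range(runs)]
    return merge(results)


# Three replicas, each producing a partial result, merged by summation.
total = run_replicate(3, lambda index: index * 10, sum)
```

The `merge` callable plays the role of the merge task that should always follow the target of a replicate.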

5.3. Branch

The branch construct provides the ability to choose between two alternative task flows, with the possibility to merge back to a common flow.

- There is no explicit dependency between the initiator and the if/else targets. These are optional links (i.e. A → B or E → F) defined in the if task.
- The if and else flows can be merged by a continuation task referenced in the if task, playing the role of an endif construct. After the branching task, the flow will either be that of the if or the else task, but it will be continued by the continuation task.
- If and else targets are executed exclusively. The initiator, however, can be the dependency of other tasks, which will be executed normally along with the if or the else target.
- A task block can be defined across if, else and continuation links, and not just plain dependencies (i.e. with A as start and F as end).
- If using no continuation task, the if and else targets, along with their children, must be strictly distinct.
- If using a continuation task, the if and else targets must be strictly distinct and valid task blocks.
- if, else and continuation tasks (B, D and F) cannot have an explicit dependency.
- if, else and continuation tasks cannot be entry points for the job; they must be triggered by the if control flow action.
- A task can be the target of only one if or else action. A continuation task cannot merge two different if actions.

| If you are familiar with programming, you can see the branch as an if/then/else. |
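As a rough illustration of the exclusive if/else execution and the optional continuation, here is a minimal Python sketch. The helper `run_branch` is hypothetical and only mimics the control flow; the labels B, D and F mirror the if, else and continuation tasks named in the list above.

```python
# Illustrative model of a branch action; not ProActive code.
def run_branch(condition, if_task, else_task, continuation=None):
    """Run exactly one of the two targets, then the optional continuation."""
    chosen = if_task if condition else else_task
    executed = [chosen()]
    if continuation is not None:
        # The continuation plays the role of an endif: it runs
        # whichever branch was selected.
        executed.append(continuation())
    return executed


# B is the if target, D the else target, F the continuation.
trace = run_branch(True, lambda: "B", lambda: "D", lambda: "F")
```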

5.4. Loop

The loop provides the ability to repeat a set of tasks.

- The target of a loop action must be a parent of the initiator following the dependency chain; this action goes back to a previously executed task.
- Every task is executed at least once; loop operates in a do…while fashion.
- The target of a loop should have only one explicit dependency. It will have different parameters (dependencies) depending on whether it is executed for the first time or not. The cardinality should stay the same.
- The loop scope should be a task block: the target is a start block task, and the initiator its related end block task.
- The current iteration index (from 0 to n until loop is false) can be accessed via the PA_TASK_ITERATION variable.

| If you are familiar with programming, you can see the loop as a do/while. |
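The do/while behavior can be sketched as follows. This is a simplified model, not the Scheduler's implementation: `run_loop`, `block` and `should_loop` are hypothetical names, and the `iteration` argument stands in for the value a task would read from PA_TASK_ITERATION.

```python
# Illustrative model of a loop action; not ProActive code.
def run_loop(block, should_loop):
    """Run `block(iteration)` at least once, repeating while
    `should_loop(result)` is true (do...while semantics)."""
    iteration = 0
    results = []
    while True:
        result = block(iteration)      # the task block always runs first
        results.append(result)
        if not should_loop(result):    # the loop decision comes afterwards
            return results
        iteration += 1


# The block returns its iteration index; loop again while it is below 2.
history = run_loop(lambda iteration: iteration, lambda result: result < 2)
```

Because the condition is evaluated after the block, the block executes once even when `should_loop` is false from the start.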

5.5. Task Blocks

Workflows often rely on task blocks. Task blocks are defined by pairs of start and end tags.

- Each task of the flow can be tagged either start or end
- Tags can be nested
- Each start tag needs to match a distinct end tag

Task blocks are very similar to the parentheses of most programming languages: anonymous and nested start/end tags. The only difference is that a parenthesis is syntactic information, whereas task blocks are semantic.

The role of task blocks is to restrain the expressiveness of the system so that a workflow can be statically checked and validated. A treatment that can be looped or iterated will be isolated in a well-defined task block.

- A loop flow action only applies on a task block: the initiator of the loop must be the end of the block, and the target must be the beginning.
- When using a continuation task in an if flow action, the if and else branches must be task blocks.
- If the child of a replicate task is a task block, the whole block will be replicated and not only the child of the initiator.
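The parenthesis analogy suggests how start/end tags can be checked statically. The Python sketch below validates tags along a single linear chain of tasks, which is a deliberate simplification (real workflows are graphs), and `check_task_blocks` is a hypothetical helper rather than the Scheduler's validator.

```python
# Illustrative check of start/end tags along a linear chain of tasks.
def check_task_blocks(tags):
    """tags: list of "start", "end" or None, one entry per task.

    Returns the maximum nesting depth, or raises ValueError when a tag
    has no matching counterpart (same rule as balanced parentheses).
    """
    depth = 0
    max_depth = 0
    for position, tag in enumerate(tags):
        if tag == "start":
            depth += 1
            max_depth = max(max_depth, depth)
        elif tag == "end":
            if depth == 0:
                raise ValueError(f"end tag at position {position} has no matching start")
            depth -= 1
    if depth:
        raise ValueError(f"{depth} start tag(s) without a matching end")
    return max_depth
```

For example, `check_task_blocks(["start", None, "start", "end", "end"])` reports two nested blocks, while `["start", None]` is rejected.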

5.6. Variables

Variables come in handy while developing ProActive applications. Propagating data between tasks, customizing jobs, and storing useful debug information are a few examples of how variables can ease development. ProActive has two main groups of variables:

- Workflow variables, declared in the XML job definition.
- Dynamic Variables, which are system variables or variables created at task runtime.

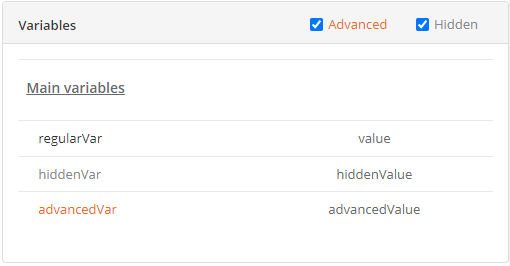

In order to immediately distinguish advanced and hidden variables, we present advanced variables in orange and hidden variables in light grey in our portals.

Here is an example with an Object’s details in the Catalog Portal

5.6.1. Workflow variables

Workflow variables are declared in the XML job definition. Within the definition, variables can be declared at several levels: at job level, variables are visible and shared by all tasks; at task level, variables are visible only within the task execution context.

Job variables

In a workflow, you can define job variables that are shared and visible by all tasks.

A job variable is defined using the following attributes:

- name: the variable name.
- value: the variable value.
- model: the variable value type or model (optional). See section Variable Model.
- description: the variable description (optional). The description can be written using HTML syntax (e.g. This variable is <b>mandatory</b>.).
- group: the group name associated with the variable (optional). When the workflow has many variables, defining variable groups helps in understanding the various parameters. Variables with no associated group are called Main Variables.
- advanced: when a variable is advanced, it is not displayed by default in the workflow submission form. A checkbox allows the user to display or hide advanced variables.
- hidden: a hidden variable is not displayed to the user in the workflow submission form. Hidden variables have the following usages:
  - perform hidden checks on other variables, defined by a variable model SpEL expression, see Variable Model and Spring Expression Language Model.
  - build dynamic forms where variables are displayed or hidden depending on the values of other variables, see Variable Model, Spring Expression Language Model and Dynamic form example using SpEL.

Variables are very useful for using workflows as templates where only a few parameters change for each execution. Upon submission, you can define variable values in several ways: from the CLI, using the ProActive Workflow Studio, by directly editing the XML job definition, or even using the REST API.

The following example shows how to define a job variable in the XML:

<job ... >

<variables>

<variable name="variable" value="value" model=""/>

</variables>

...

</job>

Job variables can be referenced anywhere in the workflow, including other job variables.

The syntax for referencing a variable is the pattern ${variable_name} (case-sensitive), for example:

<nativeExecutable>

<staticCommand value="${variable}" />

</nativeExecutable>

Task variables

Similarly to job variables, Task variables can be defined within task scope in the job XML definition. Task variables scope is strictly limited to the task definition.

A task variable is defined using the following attributes:

- name: the variable name.
- value: the variable value.
- inherited: indicates whether the content of this variable is propagated from a job variable or a parent task. If true, the value defined in the task variable is only used if no variable with the same name is propagated. The value field can be left empty; however, it can also serve as a default value in case a previous task fails to define the variable.
- model: the variable value type or model (optional). See section Variable Model.
- description: the variable description (optional). The description can be written using HTML syntax (e.g. This variable is <b>mandatory</b>.).
- group: the group name associated with the variable (optional). When the workflow has many variables, defining variable groups helps in understanding the various parameters. Variables with no associated group are called Main Variables.
- advanced: when a variable is advanced, it is not displayed by default in the workflow submission form. A checkbox allows the user to display or hide advanced variables.
- hidden: a hidden variable is not displayed to the user in the workflow submission form. Hidden variables have the following usages:
  - perform hidden checks on other variables, defined by a variable model SpEL expression, see Variable Model and Spring Expression Language Model.
  - build dynamic forms where variables are displayed or hidden depending on the values of other variables, see Variable Model, Spring Expression Language Model and Dynamic form example using SpEL.

For example:

<task ... >

<variables>

<variable name="variable" value="value" inherited="false" model=""/>

</variables>

...

</task>

Task variables can be used similarly to job variables using the pattern ${variable_name}, but only inside the task where the variable is defined.

Task variables override job variables. This means that if a job variable and a task variable are defined with the same name, the task variable value will be used inside the task (unless the task variable is marked as inherited), and the job variable value will be used elsewhere in the job.
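The override and inheritance rules, together with the ${variable_name} pattern, can be sketched in Python as follows. This is an illustrative model only: `effective_variables` and `substitute` are hypothetical helpers, and leaving unknown references untouched is an assumption of the sketch, not documented Scheduler behavior.

```python
import re

# Matches the ${variable_name} pattern described above (case-sensitive).
VARIABLE_REF = re.compile(r"\$\{([^}]+)\}")


def effective_variables(job_vars, task_vars, propagated=None):
    """Compute the variables visible inside one task.

    job_vars / propagated: {name: value}
    task_vars: {name: (value, inherited)}
    """
    resolved = dict(job_vars)
    resolved.update(propagated or {})
    for name, (value, inherited) in task_vars.items():
        # An inherited task variable only supplies a default value when
        # nothing with the same name was propagated to the task.
        if inherited and name in resolved:
            continue
        resolved[name] = value
    return resolved


def substitute(text, variables):
    """Replace each ${name} with its value; unknown names are left as-is
    (an assumption of this sketch)."""
    return VARIABLE_REF.sub(lambda m: variables.get(m.group(1), m.group(0)), text)
```

With a job variable `variable="job-value"`, a task variable of the same name wins when `inherited` is false and is ignored when `inherited` is true.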

Global Variables

Global variables are job variables that are configured inside ProActive server and apply to all workflows or to certain categories of workflows (e.g. workflows with a given name).

See Configure Global Variables and Generic Information section to understand how global variables can be configured on the ProActive server.

Variable Model

Job and Task variables can define a model attribute which lets the workflow designer control the variable value syntax.

A workflow designer can for example decide that the variable NUMBER_OF_ENTRIES is expected to be convertible to an Integer ranged between 0 and 20.

The workflow designer will then provide a default value to the variable NUMBER_OF_ENTRIES, for example "10". This workflow is valid as "10" can be converted to an Integer and is between 0 and 20.

When submitting the workflow, it will be possible to choose a new value for the variable NUMBER_OF_ENTRIES. If the user submitting the workflow chooses for example the value -5, the validation will fail and an error message will appear.
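The NUMBER_OF_ENTRIES scenario can be illustrated with a small Python sketch of a range check. It is a simplified stand-in for the server-side validation: `validate_integer` is a hypothetical helper, it only understands the PA:INTEGER and PA:INTEGER[min,max] syntaxes, and it does not reproduce java.lang.Integer parsing or its 32-bit bounds.

```python
import re

# Accepts "PA:INTEGER" and "PA:INTEGER[min,max]".
INTEGER_MODEL = re.compile(r"^PA:INTEGER(?:\[\s*(-?\d+)\s*,\s*(-?\d+)\s*\])?$")


def validate_integer(model, value):
    """Return True when `value` (a string) satisfies the integer model."""
    match = INTEGER_MODEL.match(model)
    if match is None:
        raise ValueError(f"unsupported model: {model}")
    # The value must look like an integer, e.g. "10" or "-5" but not "1.4".
    if re.fullmatch(r"-?\d+", value) is None:
        return False
    if match.group(1) is None:
        return True  # no range given
    low, high = int(match.group(1)), int(match.group(2))
    return low <= int(value) <= high
```

With the model PA:INTEGER[0,20], the default value "10" passes while the submitted value "-5" is rejected, which corresponds to the validation failure described above.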

Available Models

The following list describes the various model syntaxes available:

- Main variable models
  - PA:INTEGER, PA:INTEGER[min,max]: variable can be converted to java.lang.Integer and, optionally, is contained in the range [min, max].
    Examples: PA:INTEGER will accept "-5" but not "1.4", PA:INTEGER[0,20] will accept "12" but not "25".
  - PA:LONG, PA:LONG[min,max]: same as above with java.lang.Long.
  - PA:FLOAT, PA:FLOAT[min,max]: same as above with java.lang.Float.
    Examples: PA:FLOAT[-0.33,5.99] will accept "3.5" but not "6".
  - PA:DOUBLE, PA:DOUBLE[min,max]: same as above with java.lang.Double.
  - PA:SHORT, PA:SHORT[min,max]: same as above with java.lang.Short.
  - PA:BOOLEAN: variable is either "true", "false", "0" or "1".
  - PA:NOT_EMPTY_STRING: variable must be provided with a non-empty string value.
  - PA:HIDDEN: variable which allows the user to securely enter a value while submitting the workflow (i.e., each character is shown as an asterisk, so that it cannot be read).
  - PA:URL: variable can be converted to java.net.URL.
    Examples: PA:URL will accept "http://mysite.com" but not "c:/Temp".
  - PA:URI: variable can be converted to java.net.URI.
    Examples: PA:URI will accept "/tmp/file" but not "c:\a^~to" due to invalid characters.
  - PA:LIST(item1,item2,…): variable must be one of the values defined in the list.
    Examples: PA:LIST(a,b,c) will accept "a", "b", "c" but no other value.
  - PA:JSON: variable syntax must be a valid JSON expression as defined in JSON doc.
    Examples: PA:JSON will accept {"name": "John", "city":"New York"} and empty values like {} or [{},{}], but not ["test" : 123] (Unexpected character ':') and {test : 123} (Unexpected character 't').
  - PA:REGEXP(pattern): variable syntax must match the regular expression defined in the pattern. The regular expression syntax is described in class Pattern.
    Examples: PA:REGEXP([a-z]+) will accept "abc", "foo", but not "Foo".

- Advanced variable models
  - PA:CATALOG_OBJECT: use this type when you want to reference and use, in the current workflow, another object from the Catalog. Various portals and tools make it easy to manage catalog objects. For instance, at Job submission, you will be able to browse the Catalog to select the needed object.

    The variable value must be a valid expression that matches the following pattern: bucketName/objectName[/revision]. Note that the revision sub-pattern is a hash code number represented by 13 digits.

    Examples: PA:CATALOG_OBJECT accepts "bucket-example/object-example" and "bucket-example/object-example/1539310165443" but not "bucket-example/object-example/153931016" (invalid revision number) nor "bucket-example/" (missing object name).
  - PA:CATALOG_OBJECT(kind,contentType,bucketName,objectName): one or more filters can be specified, in the given order, in the PA:CATALOG_OBJECT model to limit the accepted values. For example, PA:CATALOG_OBJECT(kind) can be used to filter a specific Kind, PA:CATALOG_OBJECT(kind,contentType) to filter both Kind and Content-type, PA:CATALOG_OBJECT(,contentType) to filter only a Content-type (note the empty first parameter), etc.

    In that case, the variable value must be a catalog object which matches the Kind, Content type, BucketName and/or ObjectName requirements (for more information regarding Kind and Content type click here).

    Note that Kind and Content type are case insensitive and require a "startsWith" matching, while BucketName and ObjectName are case sensitive and, by default, require a "contains" matching. That is, the Kind and the Content type of the provided catalog object must start with the filters specified in the model, while the BucketName and ObjectName of the catalog object should contain them. The scheduler server verifies that the object exists in the catalog and fulfills the specified requirements when the workflow is submitted.

    Examples:
    - PA:CATALOG_OBJECT(Workflow/standard) accepts only standard workflow objects, which means that PSA workflows or scripts are not valid values.
    - PA:CATALOG_OBJECT(Script, text/x-python) accepts a catalog object which is a Python script but not a workflow object or a Groovy script.
    - PA:CATALOG_OBJECT(,,basic-example, Native_Task) accepts catalog objects that are in buckets ia-basic-example or basic-example-python and named Native_Task or Native_Task_Python but not NATIVE_TASK.

    Notice that the default behaviour of BucketName or ObjectName filters can be modified by including the special character % that matches any word. Hence, adding % at the beginning (resp. end) of a BucketName or an ObjectName means that the filtered value should end (resp. start) with the specified string.

    Examples:
    - PA:CATALOG_OBJECT(,,%basic-example) accepts catalog objects that are in buckets ia-basic-example or db-basic-example but not the ones in basic-example-python.
    - PA:CATALOG_OBJECT(,,,Native_Task%) accepts catalog objects that are named Native_Task or Native_Task_Python but not Simplified_Native_Task.

    It is possible to have a PA:CATALOG_OBJECT object variable that has an optional value by using the notation PA:CATALOG_OBJECT?. See here.

-

PA:CREDENTIAL : variable whose value is a key of the ProActive Scheduler Third-Party Credentials (which are stored on the server side in encrypted form). The variable allows the user to access a credential from the task implementation in a secure way (e.g., for a groovy task credentials.get(variables.get("MY_CRED_KEY")) instead of a plain-text. At workflow submission, the scheduler server verifies that the key exists in the 3rd party credentials of the user. In addition, the use of this model enables the user to manage her/his credentials via a graphical interface.

-

PA:CRON : variable syntax must be a valid cron expression as defined in the cron4j manual. This model can be used for example to control the provided value to the

loopcontrol flow parameter.+ Examples: PA:CRON will accept "5 * * * *" but not "* * * *" (missing minutes sub-pattern). -

PA:DATETIME(format) , PA:DATETIME(format)[min,max] : variable can be converted to a java.util.Date using the format specified (see the format definition syntax in the SimpleDateFormat class).

A range can also be used in the PA:DATETIME model. In that case, each bound of the range must match the date format used.

This model is used for example to control an input value used to trigger the execution of a task at a specific date time.Examples:

PA:DATETIME(yyyy-MM-dd) will accept "2014-12-01" but not "2014".

PA:DATETIME(yyyy-MM-dd)[2014-01-01, 2015-01-01] will accept "2014-12-01" but not "2015-03-01".

PA:DATETIME(yyyy-MM-dd)[2014, 2015] will result in an error during the workflow definition as the range bounds [2014, 2015] are not using the format yyyy-MM-dd. -

PA:MODEL_FROM_URL(url) : variable syntax must match the model fetched from the given URL. This can be used for example when the model needs to represent a list of elements which may evolve over time and is updated inside a file. Such as a list of machines in an infrastructure, a list of users, etc.

See Variable Model using Resource Manager data for premade models based on the Resource Manager state.

Examples: PA:MODEL_FROM_URL(file:///srv/machines_list_model.txt), if the file machines_list_model.txt contains PA:LIST(host1,host2), will accept only "host1" and "host2", but may accept other values as the machines_list_model file changes. -

PA:GLOBAL_FILE : variable whose value is the relative path of a file in the Global Data Space. At workflow submission, the scheduler server verifies that the file exists in the global dataspace. In addition, this model enables the user to graphically browse the global dataspace to select a file as an input data for the workflow.

-

PA:USER_FILE : variable whose value is the relative path of a file in the User Data Space. At workflow submission, the scheduler server verifies that the file exists in the user dataspace. In addition, this model enables the user to graphically browse the user dataspace to select a file as an input data for the workflow.

-

PA:GLOBAL_FOLDER : variable whose value is the relative path of a folder in the Global Data Space. At workflow submission, the scheduler server verifies that the folder exists in the global dataspace. Note that the variable value should not end with a slash, to avoid duplicate slashes when the value is used to build a path.

-

PA:USER_FOLDER : variable whose value is the relative path of a folder in the User Data Space. At workflow submission, the scheduler server verifies that the folder exists in the user dataspace. Note that the variable value should not end with a slash, to avoid duplicate slashes when the value is used to build a path.

-

PA:SPEL(SpEL expression) : variable syntax will be evaluated by a SpEL expression. Refer to Spring Expression Language Model.

-

PA:SPEL2(error message,SpEL expression) : variable syntax will be evaluated by a SpEL expression. In addition, this model allows providing a user-friendly error message (please note that the error message must not contain any comma character). Refer to Spring Expression Language Model.
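The format and range checks described for the PA:DATETIME model in the list above can be sketched in Python as follows. This is only an illustration under several assumptions: Python's strptime stands in for the Java SimpleDateFormat class, only a handful of pattern letters are translated, the range bounds are assumed to be inclusive, and the function names are hypothetical rather than part of ProActive.

```python
from datetime import datetime

# Minimal, incomplete translation of SimpleDateFormat letters to strptime directives.
_TOKENS = [("yyyy", "%Y"), ("MM", "%m"), ("dd", "%d"),
           ("HH", "%H"), ("mm", "%M"), ("ss", "%S")]


def to_strptime(java_format):
    """Translate a simple SimpleDateFormat pattern such as 'yyyy-MM-dd'."""
    for java_token, py_token in _TOKENS:
        java_format = java_format.replace(java_token, py_token)
    return java_format


def parse(value, java_format):
    """Parse value with the translated pattern; raises ValueError on mismatch."""
    return datetime.strptime(value, to_strptime(java_format))


def validate_datetime(value, java_format, bounds=None):
    """Return True if value matches the format and lies within the optional range.

    A bound that does not match the format raises ValueError, mirroring the
    error reported at workflow definition time.
    """
    if bounds is not None:
        low, high = (parse(bound.strip(), java_format) for bound in bounds)
    try:
        parsed = parse(value, java_format)
    except ValueError:
        return False
    # Assumption: both bounds are inclusive.
    return bounds is None or low <= parsed <= high
```

With this sketch, validate_datetime("2014-12-01", "yyyy-MM-dd") is accepted and validate_datetime("2014", "yyyy-MM-dd") is rejected, matching the examples given for the model.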

-

Variable Model (Type) using a type defined dynamically in another Variable

A Variable can use as its type a model that is defined in another variable.

To do so, the workflow designer sets the Model definition of the variable to the name of another variable, preceded by the character $.

When submitting the workflow, the user can select the model dynamically by changing the value of the referenced variable, and can then set the value of the first variable according to the selected type.

For example, if we have:

variable1 has as its model PA:LIST(PA:GLOBAL_FILE, PA:INTEGER)

variable2 has as its model $variable1

Then the model of variable2 is the value that variable1 has at runtime. Thus, it will be either PA:GLOBAL_FILE or PA:INTEGER.
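The lookup described above can be pictured with a few lines of Python. This is a hedged sketch of the idea only: resolve_model is a hypothetical helper, and the dictionary stands in for the variable values chosen at submission time.

```python
def resolve_model(model, variables):
    """Return the effective model, following a '$name' reference if present."""
    if model.startswith("$"):
        # The model is the current value of the referenced variable.
        return variables[model[1:]]
    return model


# variable1 was set by the user to one of the choices of its PA:LIST model.
submitted = {"variable1": "PA:INTEGER"}
effective_model = resolve_model("$variable1", submitted)
```

Here effective_model is "PA:INTEGER", so the value of variable2 would then be validated as an integer.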

Optional Variable

To define an optional variable, the workflow designer can simply add ? at the end of the model attribute, such as PA:INTEGER?.

When submitting the workflow, the user may leave optional variables without a value. Validation fails only when the user enters an invalid value.

For example, a variable MY_OPTIONAL_INTEGER defined with the model PA:INTEGER? will accept an empty string as the variable value, but it will refuse 1.4.

All available model syntaxes, except PA:NOT_EMPTY_STRING, can be defined as optional.
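The optional-variable rule can be summarized by the following Python sketch. It is an illustration rather than the scheduler's implementation: validate is a hypothetical function, only the PA:INTEGER model is handled, and Python's int() is used as an approximation of the integer check.

```python
def validate(value, model):
    """Validate value against model, honoring a trailing '?' (optional)."""
    optional = model.endswith("?")
    base_model = model[:-1] if optional else model

    # An empty value is acceptable only when the model is optional.
    if value == "":
        return optional

    if base_model == "PA:INTEGER":
        try:
            int(value)
            return True
        except ValueError:
            return False

    # Other models are outside the scope of this sketch.
    raise NotImplementedError(base_model)
```

For instance, validate("", "PA:INTEGER?") succeeds while validate("1.4", "PA:INTEGER?") fails, as in the MY_OPTIONAL_INTEGER example.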

Variable Model using Resource Manager data

ProActive Resource Manager provides a set of REST endpoints that make it possible to create dynamic models based on the Resource Manager state.

These models are summarized in the following table. The models returned are PA:LIST types, which allow selecting a value in the ProActive portals through a drop-down list. The list always contains an empty value choice.

| Metric Name | Description | Model Syntax | Example query/returned data |
|---|---|---|---|
| Hosts | All machine host names or IP addresses registered in the Resource Manager | | PA:LIST(,try.activeeon.com,10.0.0.19) |
| Hosts Filtered by Name | All machine host names or IP addresses registered in the Resource Manager that match a given regular expression. | Some characters of the regular expression must be encoded according to the specification. | ?name=try%5Ba-z%5D*.activeeon.com ⇒ PA:LIST(,try.activeeon.com,trydev.activeeon.com,tryqa.activeeon.com) |
| Node Sources | All node sources registered in the Resource Manager | | PA:LIST(,Default,LocalNodes,GPU,Kubernetes) |
| Node Sources Filtered by Name | All node sources registered in the Resource Manager that match a given regular expression. | Some characters of the regular expression must be encoded according to the specification. | ?name=Azure.* ⇒ PA:LIST(,AzureScaleSet1,AzureScaleSet2) |
| Node Sources Filtered by Infrastructure Type | All node sources registered in the Resource Manager whose infrastructure type matches a given regular expression. See Node Source Infrastructures. | Some characters of the regular expression must be encoded according to the specification. | ?infrastructure=Azure.* will return all node sources that have an AzureInfrastructure or AzureVMScaleSetInfrastructure type. |
| Node Sources Filtered by Policy Type | All node sources registered in the Resource Manager whose policy type matches a given regular expression. See Node Source Policies. | Some characters of the regular expression must be encoded according to the specification. | ?policy=Dynamic.* will return all node sources that have a DynamicPolicy type. |
| Tokens | All tokens registered in the Resource Manager (across all registered ProActive Nodes). See NODE_ACCESS_TOKEN. | | PA:LIST(,token1,token2) |
| Tokens Filtered by Name | All tokens registered in the Resource Manager (across all registered ProActive Nodes) that match a given regular expression. See NODE_ACCESS_TOKEN. | Some characters of the regular expression must be encoded according to the specification. | ?name=token.* ⇒ PA:LIST(,token1,token2) |
| Tokens Filtered by Node Source | All tokens registered in the given Node Source. This filter returns a list which contains tokens defined: See NODE_ACCESS_TOKEN. | The exact name of the target Node Source must be provided (check is case-sensitive). | ?nodeSource=LocalNodes ⇒ PA:LIST(,token1,token2) |
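As the table notes, some characters of a regular expression have to be encoded before they are placed in the query string. The Python sketch below shows one way to produce the encoded filter from the Hosts Filtered by Name row and to split a returned PA:LIST model into its choices. The base URL is a hypothetical placeholder (the actual endpoint paths are not reproduced here), and both helper functions are illustrative names, not ProActive APIs.

```python
from urllib.parse import quote

# Hypothetical placeholder: replace with the real Resource Manager model endpoint.
HOSTS_MODEL_URL = "https://rm.example.invalid/hosts-model"


def filtered_model_url(base_url, param, regex):
    """Build a filtered model URL, percent-encoding characters such as '[' and ']'."""
    # '*' is kept as-is so the result matches the example shown in the table.
    return f"{base_url}?{param}={quote(regex, safe='*')}"


def parse_pa_list(model):
    """Split a 'PA:LIST(a,b,c)' model string into its list of choices."""
    prefix, suffix = "PA:LIST(", ")"
    if not (model.startswith(prefix) and model.endswith(suffix)):
        raise ValueError(f"not a PA:LIST model: {model!r}")
    return model[len(prefix):-len(suffix)].split(",")


url = filtered_model_url(HOSTS_MODEL_URL, "name", "try[a-z]*.activeeon.com")
choices = parse_pa_list("PA:LIST(,try.activeeon.com,10.0.0.19)")
```

The first element of choices is the empty string, which corresponds to the empty value choice that these lists always contain.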

Spring Expression Language Model

The PA:SPEL(expr) and PA:SPEL2(message,expr) models allow defining expressions that accept or reject a variable value. Additionally, these models can be used to validate multiple variable values or to dynamically update other variables.

The PA:SPEL2 model additionally allows providing an error message, which is displayed to the user when the value entered does not satisfy the SpEL expression. Please note that the error message must not contain any comma character.

The syntax of the SpEL expression is defined by the Spring Expression Language reference.

For security reasons, the class types that an expression may use are restricted. Besides the commonly used data types (Boolean, String, Long, Double, etc.), the following are authorized: ImmutableSet, ImmutableMap, ImmutableList, Math and Date types, JSONParser and ObjectMapper for JSON, and DocumentBuilderFactory for XML.

In order to interact with variables, the expression has access to the following properties:

-

#value: this property contains the value of the current variable defined by the user.

-

variables['variable_name']: this property array contains all the variable values of the same context (for example, of the same task for a task variable).

-

models['variable_name']: this property array contains all the variable models of the same context (for example, of the same task for a task variable).

-

valid: can be set to true or false to validate or invalidate a variable.

-

temp: can be set to a temporary object used in the SpEL expression.

-

tempMap: an empty Hash Map structure which can be populated and used in the SpEL expression.

The expression has also access to the following functions (in addition to the functions available by default in the SpEL language):

-

t(expression): evaluate the expression and return true.

-

f(expression): evaluate the expression and return false.

-

s(expression): evaluate the expression and return an empty string.

-

hideVar('variable name'): hides the variable given in parameter. Used to build Dynamic form example using SpEL. Returns true to allow chaining actions.

-

showVar('variable name'): shows the variable given in parameter. Used to build Dynamic form example using SpEL. Returns true to allow chaining actions.

-

hideGroup('group name'): hides all variables belonging to the variable group given in parameter. Used to build Dynamic form example using SpEL. Returns true to allow chaining actions.

-

showGroup('group name'): shows all variables belonging to the variable group given in parameter. Used to build Dynamic form example using SpEL. Returns true to allow chaining actions.

The SpEL expression must either:

-

return a boolean value: true if the value is correct, false otherwise.

-

set the valid property to true or false.

Any other behavior will raise an error.

-

Example of SpEL simple validations:

PA:SPEL(#value == 'abc'): will accept the value if it is the string 'abc'.

PA:SPEL(T(Integer).parseInt(#value) > 10): will accept the value if it is an integer greater than 10.

Note that #value always contains a string and must be converted to other types if needed.

-

Example of SpEL multiple validations:

PA:SPEL(variables['var1'] + variables['var2'] == 'abcdef'): will be accepted if the string concatenation of variables var1 and var2 is 'abcdef'.

PA:SPEL(T(Integer).parseInt(variables['var1']) + T(Integer).parseInt(variables['var2']) < 100): will be accepted if the sum of variables var1 and var2 is smaller than 100.

-

Example of SpEL variable inference:

PA:SPEL( variables['var2'] == '' ? t(variables['var2'] = variables['var1']) : true ): if the variable var2 is empty, it will use the value of variable var1 instead.

-

Example of SpEL variable using ObjectMapper type:

PA:SPEL( t(variables['var1'] = new org.codehaus.jackson.map.ObjectMapper().readTree('{"abc": "def"}').get('abc').getTextValue()) ): will assign the value 'def' to the variable var1.

-

Example of SpEL variable using DocumentBuilderFactory type:

PA:SPEL( t(variables['var'] = T(javax.xml.parsers.DocumentBuilderFactory).newInstance().newDocumentBuilder().parse(new org.xml.sax.InputSource(new java.io.StringReader('<employee id="101"><name>toto</name><title>tata</title></employee>'))).getElementsByTagName('name').item(0).getTextContent()) ): will assign the value 'toto' to the variable var.

The SpEL expression must return a boolean value. This is why the above expressions use the t(expression) function to perform assignments and return the boolean value true.
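The role of the t, f and s helper functions can be illustrated outside SpEL with a small Python analogue. This is a sketch of the semantics only, not ProActive code: the three functions simply discard the result of an already evaluated expression and return a fixed value, and infer_var2 is a hypothetical function that mirrors the variable inference example above.

```python
def t(_expression_result):
    """Ignore the evaluated expression and return True."""
    return True


def f(_expression_result):
    """Ignore the evaluated expression and return False."""
    return False


def s(_expression_result):
    """Ignore the evaluated expression and return an empty string."""
    return ""


def infer_var2(variables):
    """If var2 is empty, copy var1 into it; always report the value as valid."""
    if variables["var2"] == "":
        # The assignment happens as a side effect; t() turns it into a boolean.
        return t(variables.update(var2=variables["var1"]))
    return True


variables = {"var1": "abc", "var2": ""}
is_valid = infer_var2(variables)
```

After the call, is_valid is True and variables["var2"] holds "abc", which is the behavior described for the inference expression.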

Dynamic form example using SpEL

Using SpEL expressions, it is possible to show or hide variables based on the values of other variables. This makes it possible to create dynamic forms.

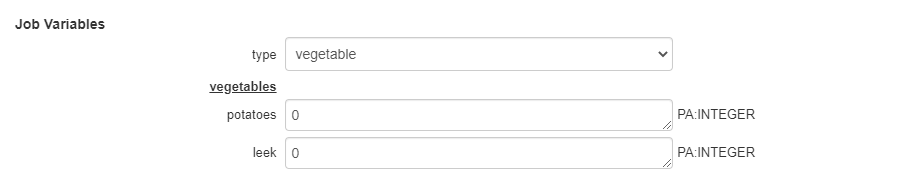

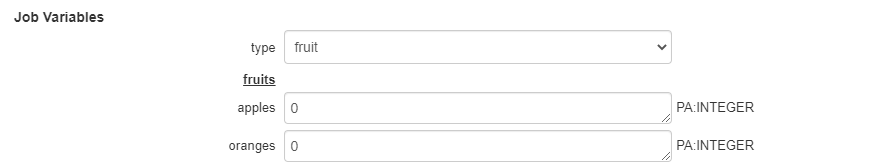

Consider the following variables definition (XML escaping of attribute values has been removed for clarity):

<variables>

<variable name="type" value="vegetable" model="PA:LIST(vegetable,fruit)" description="" group="" advanced="false" hidden="false"/>

<variable name="potatoes" value="0" model="PA:INTEGER" description="Amount of potatoes to order (in kilograms)" group="vegetables" advanced="false" hidden="false"/>

<variable name="leek" value="0" model="PA:INTEGER" description="Amount of leek to order (in kilograms)" group="vegetables" advanced="false" hidden="false"/>

<variable name="apples" value="0" model="PA:INTEGER" description="Amount of apples to order (in kilograms)" group="fruits" advanced="false" hidden="true"/>

<variable name="oranges" value="0" model="PA:INTEGER" description="Amount of oranges to order (in kilograms)" group="fruits" advanced="false" hidden="true"/>

<variable name="type_handler" value="" model="PA:SPEL(variables['type'] == 'vegetable' ? showGroup('vegetables') && hideGroup('fruits') : showGroup('fruits') && hideGroup('vegetables'))" description="" group="" advanced="false" hidden="true"/>

</variables>

The first variable, type, presents a choice to the user: select fruits or vegetables.

The last variable, type_handler, which is hidden from the user, analyses this choice and displays either the variables belonging to the fruits group or those belonging to the vegetables group.

The SpEL model associated with type_handler performs this operation:

PA:SPEL(variables['type'] == 'vegetable' ? showGroup('vegetables') && hideGroup('fruits') : showGroup('fruits') && hideGroup('vegetables'))
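To make the effect of this expression concrete, the following Python sketch mimics it on an in-memory form. Everything here is an assumption made for illustration: the form dictionary is a stand-in for the submission form, and show_group and hide_group are hypothetical counterparts of showGroup and hideGroup that return True so that calls can be chained with "and".

```python
# Stand-in for the submission form: variable name -> group and hidden flag.
form = {
    "potatoes": {"group": "vegetables", "hidden": False},
    "leek": {"group": "vegetables", "hidden": False},
    "apples": {"group": "fruits", "hidden": True},
    "oranges": {"group": "fruits", "hidden": True},
}


def _set_hidden(form, group, hidden):
    for attributes in form.values():
        if attributes["group"] == group:
            attributes["hidden"] = hidden
    return True  # returning True allows chaining, as with the SpEL functions


def show_group(form, group):
    return _set_hidden(form, group, False)


def hide_group(form, group):
    return _set_hidden(form, group, True)


def type_handler(form, variables):
    """Python analogue of the ternary expression used by type_handler."""
    if variables["type"] == "vegetable":
        return show_group(form, "vegetables") and hide_group(form, "fruits")
    return show_group(form, "fruits") and hide_group(form, "vegetables")


# The user switches the 'type' variable from vegetable to fruit.
result = type_handler(form, {"type": "fruit"})
visible = sorted(name for name, attrs in form.items() if not attrs["hidden"])
```

After this call, visible contains only apples and oranges, and result is True, so the hidden type_handler variable is itself considered valid.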